SpaceX Moves to Manufacture GPUs for AI Infrastructure

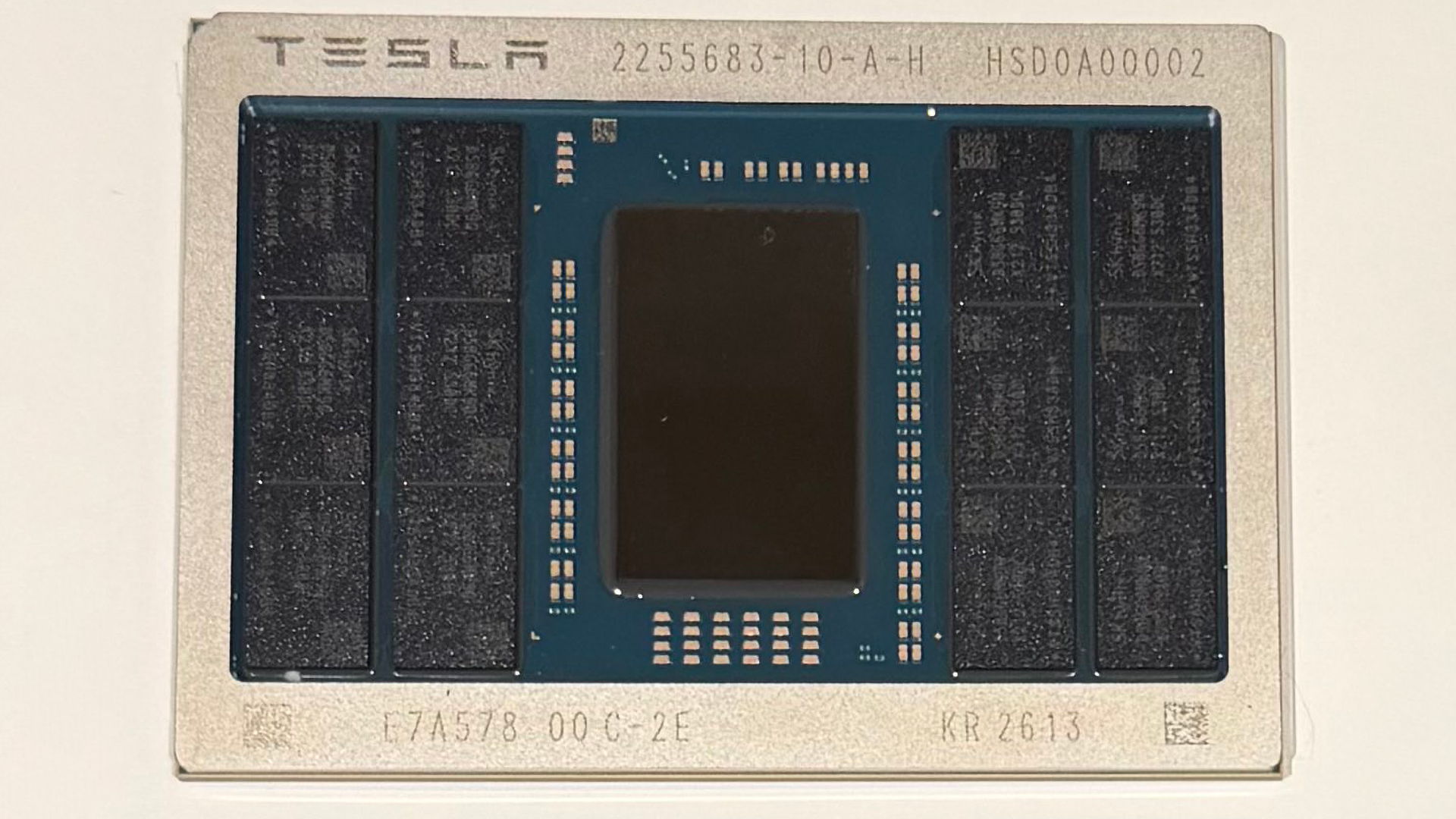

SpaceX plans to build and operate in-house GPU production as part of its Terafab initiative ahead of a planned $1.75 trillion IPO. The company disclosed in its S-1 filing that it intends to invest in semiconductor manufacturing to reduce dependence on external suppliers, explicitly listing "manufacturing our own GPUs" among future capital expenditures. The effort is linked to joint work with Tesla and xAI on the Terafab complex in Austin, Texas, and comments from Elon Musk referenced Intel's 14A node. The announcement signals deep vertical integration but leaves timelines, process enablers, and production scope unclear, making this a high-impact but risky strategic pivot for AI compute supply chains.

What happened

SpaceX disclosed in its S-1 registration ahead of a planned $1.75 trillion IPO that it will invest in building semiconductor manufacturing capacity, specifically naming "manufacturing our own GPUs" as a driver of substantial capital expenditures. The plan is tied to the Terafab project being developed with Tesla and xAI, and Elon Musk indicated a manufacturing role and referenced Intel's 14A process as a potential future node as the project scales.

Technical details

SpaceX's move targets general-purpose GPU-class silicon rather than narrowly defined ASICs. Manufacturing GPUs at modern nodes requires wafer fabs equipped with extreme ultraviolet (EUV) lithography, advanced packaging for HBM stacks, and mature yield engineering. Key technical challenges include:

- advanced process technology and access to a foundry ecosystem (tooling, process kits, IP)

- yield ramp and test flows for large die GPUs at high wafer costs

- advanced packaging and memory integration such as HBM and interposers

- securing supply of specialty inputs, from photomasks to substrate and test equipment
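
To illustrate why yield on large dies dominates the economics, here is a minimal sketch using two textbook approximations: a gross-dies-per-wafer estimate with an edge-loss term, and the Poisson yield model Y = exp(-A * D0). Every number in it (wafer cost, die areas, defect density) is a hypothetical placeholder chosen for illustration. None of them comes from the S-1 or from any foundry, and the function names are invented for this example.

```python
import math


def gross_dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """Approximate die count: wafer area / die area, minus an edge-loss term."""
    radius = wafer_diameter_mm / 2
    estimate = (math.pi * radius ** 2 / die_area_mm2
                - math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2))
    return int(estimate)


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Poisson yield model: Y = exp(-A * D0), with die area A converted to cm^2."""
    return math.exp(-(die_area_mm2 / 100.0) * defects_per_cm2)


def cost_per_good_die(wafer_cost: float, wafer_diameter_mm: float,
                      die_area_mm2: float, defects_per_cm2: float) -> float:
    """Wafer cost divided by the expected number of defect-free dies."""
    good_dies = (gross_dies_per_wafer(wafer_diameter_mm, die_area_mm2)
                 * poisson_yield(die_area_mm2, defects_per_cm2))
    return wafer_cost / good_dies


if __name__ == "__main__":
    # All inputs below are illustrative assumptions, not reported figures.
    WAFER_COST = 20_000.0   # hypothetical cost per wafer, arbitrary currency
    WAFER_DIAMETER = 300.0  # mm, the standard large wafer size
    DEFECT_DENSITY = 0.1    # hypothetical defects per cm^2

    for area in (100.0, 400.0, 800.0):  # hypothetical die areas in mm^2
        dies = gross_dies_per_wafer(WAFER_DIAMETER, area)
        y = poisson_yield(area, DEFECT_DENSITY)
        cost = cost_per_good_die(WAFER_COST, WAFER_DIAMETER, area, DEFECT_DENSITY)
        print(f"{area:6.0f} mm^2: {dies:4d} gross dies, "
              f"yield {y:.1%}, cost per good die {cost:,.0f}")
```

Under these assumptions the cost per good die grows much faster than die area, because a larger die both reduces the count per wafer and is more likely to contain a defect. Real fabs use more refined yield models and often salvage partially defective dies, so this is only a first-order picture.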

Why this matters to practitioners

Most AI training and inference infrastructure currently relies on third-party foundries and vendors like Nvidia, TSMC, and Intel. Vertical integration by a major systems company could reshape procurement dynamics, create alternative supply paths, and influence pricing and capacity for AI accelerators. If SpaceX actually fields high-volume, production-grade GPUs, cloud providers, AI labs, and OEMs will need to evaluate performance, software compatibility, and ecosystem support.

Business and partnership context

The Terafab complex in Austin is positioned to supply chips for automotive, robotics, and space-based data centers developed across Musk's companies. It remains unclear who will operate the fabrication processes inside the plant and whether SpaceX intends to run it as a foundry open to outside customers or as a captive supply for affiliated entities. Intel has been mentioned as a potential partner, and Musk referenced Intel's 14A node.

Risks and implementation realism

Building a wafer fab and GPU product line is capital intensive and operationally complex. The S-1 explicitly warns investors about high capex and lack of long-term supplier contracts. Risks include protracted ramp times, yield instability, enormous upfront tooling costs, and the need for a full software and driver stack to compete with incumbent GPU ecosystems. Market acceptance would also require performance parity, software ecosystem support (CUDA/driver compatibility or alternate stacks), and reliable supply of advanced memory.

Implications for AI practitioners

Short term, this is strategic signaling and a potential supply-chain diversification play. Medium term, if SpaceX scales production and opens capacity beyond internal use, expect pressure on pricing and sourcing strategies, potential new GPU variants optimized for robotics or space constraints, and renewed attention to software portability. Developers should watch for architecture disclosures, instruction sets, and driver/SDK availability that determine real-world usability.

What to watch

Monitor detailed disclosures in the filed S-1, concrete capital expenditure timelines, announced process partners, and any early silicon or software stacks. The gap between the stated ambition and a competitive GPU in volume production remains large, so practical impact will hinge on demonstrable production ramps and ecosystem support.

Scoring Rationale

The disclosure combines a major corporate finance event, a planned **$1.75 trillion IPO**, with a potentially disruptive move into GPU fabrication. This could materially affect AI compute supply chains, but timelines and execution risk remain high, so the story is important but not yet industry-shaking.
