Quantization Reveals Outliers Impacting LLM Accuracy

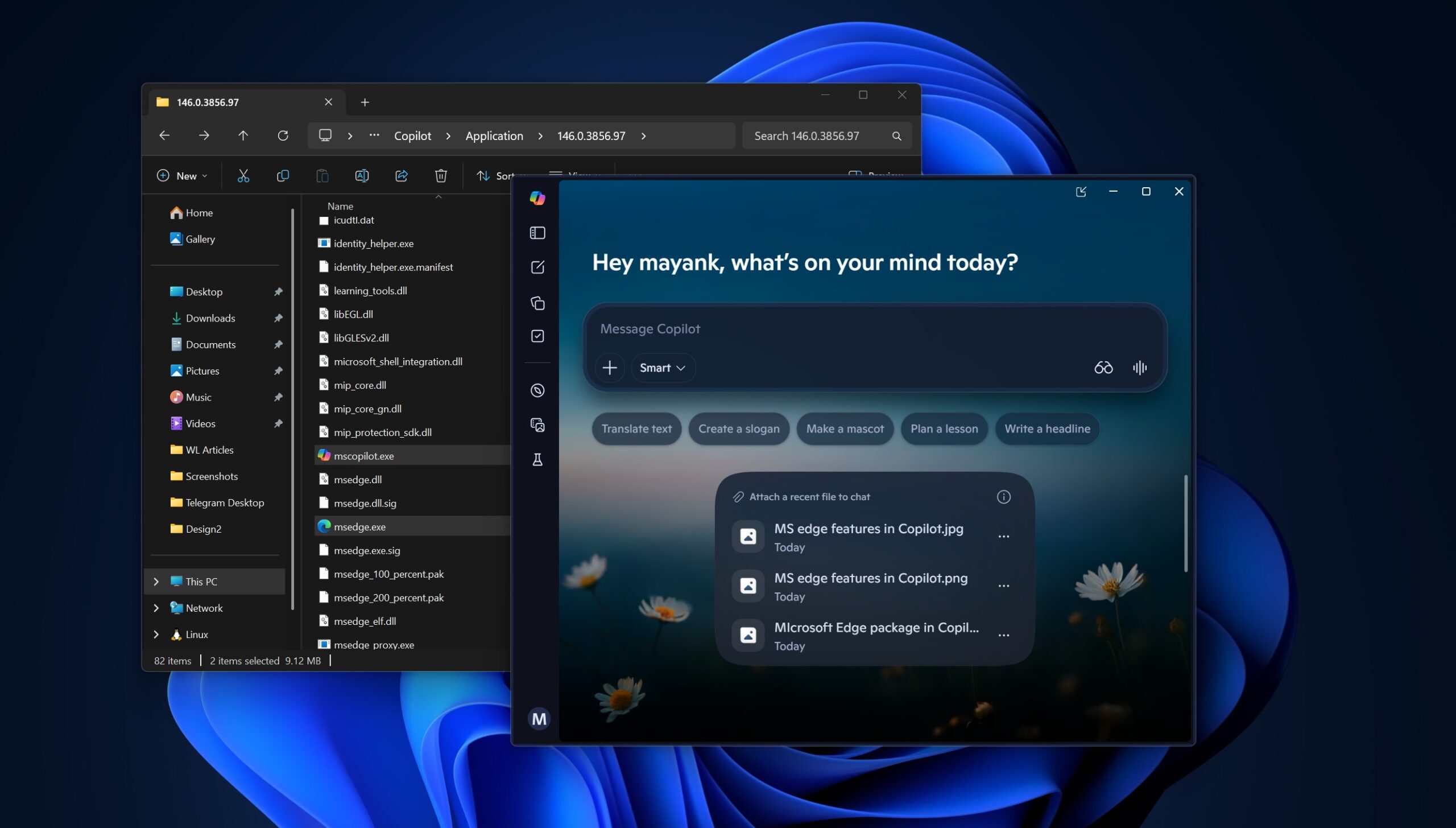

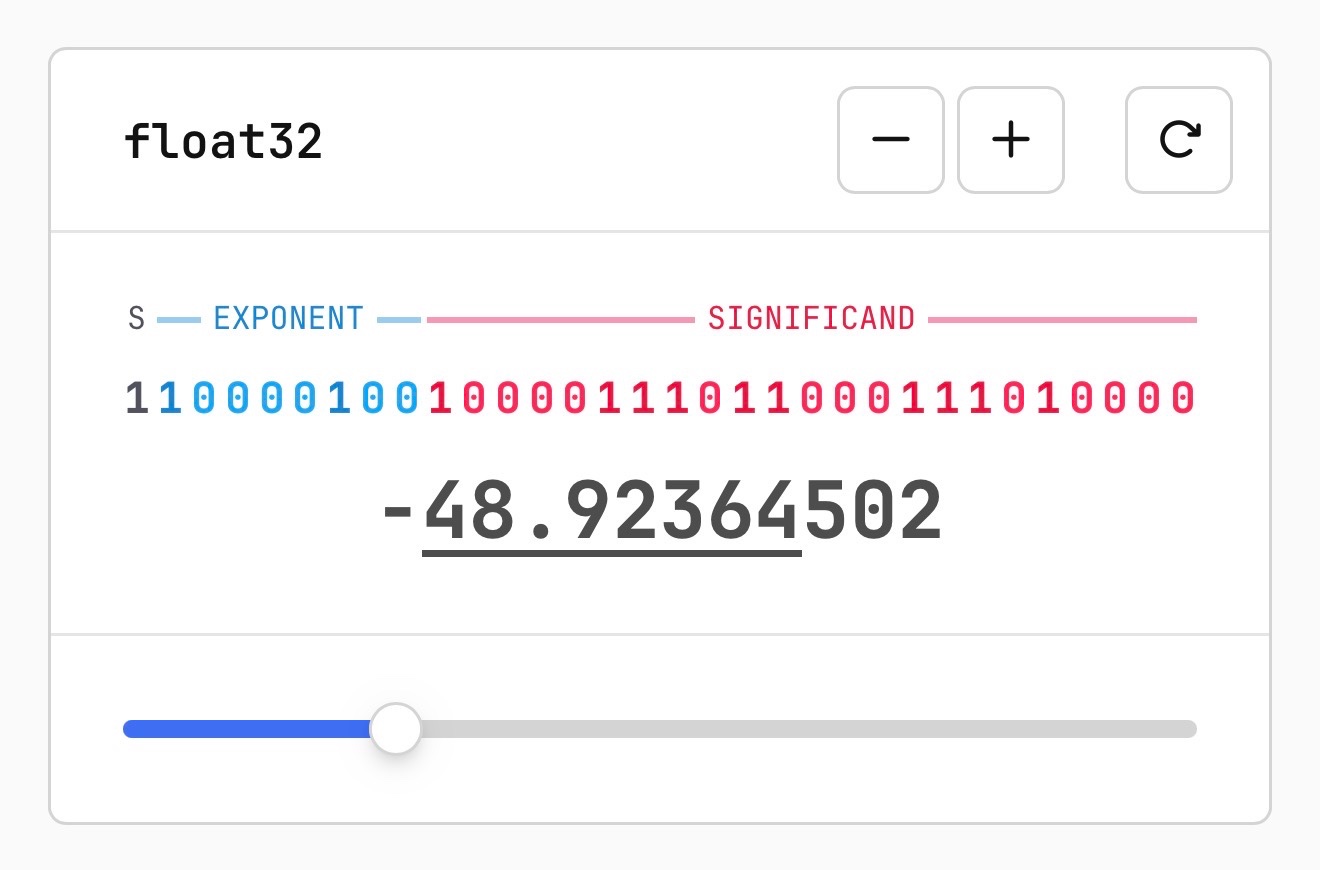

On March 26, 2026, Sam Rose published an interactive essay explaining how quantization of large language models works, including a visual explanation of floating-point representation and the role of rare 'outlier' weights. He documents strategies for preserving these outliers (for example, leaving them unquantized or storing them separately) and benchmarks Qwen 3.5 9B using llama.cpp and GPQA, finding that going from 16-bit to 8-bit retains near-original quality, while 4-bit retains about 90% of it.
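To make the outlier idea concrete, here is a minimal sketch in plain Python. It is not code from the essay and not the scheme llama.cpp uses; it assumes simple symmetric absmax quantization over a single list of weights, and the function names, the sample weights, and the `threshold` parameter are all hypothetical. It illustrates why one large weight can hurt: the outlier stretches the scale, so the small weights all collapse toward zero unless the outlier is stored separately.

```python
def quantize_absmax(weights, bits=8):
    """Symmetric absmax quantization: map floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit, 127 for 8-bit
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0.0:                        # all-zero input: avoid dividing by zero
        scale = 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q, scale):
    """Recover approximate floats from the quantized integers."""
    return [v * scale for v in q]


def quantize_with_outliers(weights, bits=4, threshold=1.0):
    """Keep weights with |w| > threshold at full precision; quantize the rest.

    The threshold is an illustrative assumption, not a value from the essay.
    """
    outliers = {i: w for i, w in enumerate(weights) if abs(w) > threshold}
    inliers = [0.0 if i in outliers else w for i, w in enumerate(weights)]
    q, scale = quantize_absmax(inliers, bits)
    return q, scale, outliers


def dequantize_with_outliers(q, scale, outliers):
    """Dequantize, then restore the separately stored outliers."""
    restored = dequantize(q, scale)
    for i, w in outliers.items():
        restored[i] = w
    return restored


def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# Made-up weights: seven small values and one outlier (8.0).
weights = [0.12, -0.07, 0.31, -0.25, 0.02, 8.0, -0.18, 0.09]

q_naive, s_naive = quantize_absmax(weights, bits=4)
naive = dequantize(q_naive, s_naive)

q_kept, s_kept, outliers = quantize_with_outliers(weights, bits=4, threshold=1.0)
kept = dequantize_with_outliers(q_kept, s_kept, outliers)

naive_err = mean_abs_error(weights, naive)
kept_err = mean_abs_error(weights, kept)
print(f"naive 4-bit error:          {naive_err:.4f}")
print(f"outlier-preserving error:   {kept_err:.4f}")
```

With these sample weights, the naive 4-bit pass rounds every small weight to zero because the scale is set by the single outlier, while the outlier-preserving pass spends its 4-bit range on the small weights and keeps the large one exactly. Real formats typically quantize in blocks and differ in how outliers are stored, so treat this only as an illustration of the principle.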

Scoring Rationale

Practical, industry-wide benchmarks and a novel emphasis on outliers justify the score, which is limited by the single-author blog source and non-peer-reviewed format.
