Anthropic Withholds Mythos After Zero-Day Breakthrough

Anthropic unveiled Claude Mythos Preview, a model that autonomously finds and exploits high-severity zero-day vulnerabilities, but restricted access to a curated defensive program, Project Glasswing, rather than releasing it publicly. Mythos reportedly discovered thousands of previously unknown flaws across major operating systems and browsers and achieved exploit success rates far beyond prior models. The capability triggered high-level meetings with bank and regulatory leaders and prompted a response from OpenAI, which broadened access to GPT-5.4-Cyber for vetted defenders. The episode marks a dual-use inflection point: when AI sharply accelerates vulnerability discovery, developers must choose between withholding a dangerous capability and putting defensive tools in more hands.

What happened

Anthropic unveiled Claude Mythos Preview, an AI model that autonomously discovers and weaponizes zero-day vulnerabilities at scale, then declined a broad public release. Instead, Anthropic launched Project Glasswing, a restricted preview that gives access to roughly 40 partners, including Amazon, Apple, Microsoft, Google, Cisco, CrowdStrike, and JPMorgan Chase. Mythos reportedly found thousands of previously unknown high-severity flaws in major operating systems and web browsers. In internal tests the model produced dramatically more working exploits than prior Anthropic models, prompting emergency briefings involving bank CEOs and senior U.S. officials.

Technical details

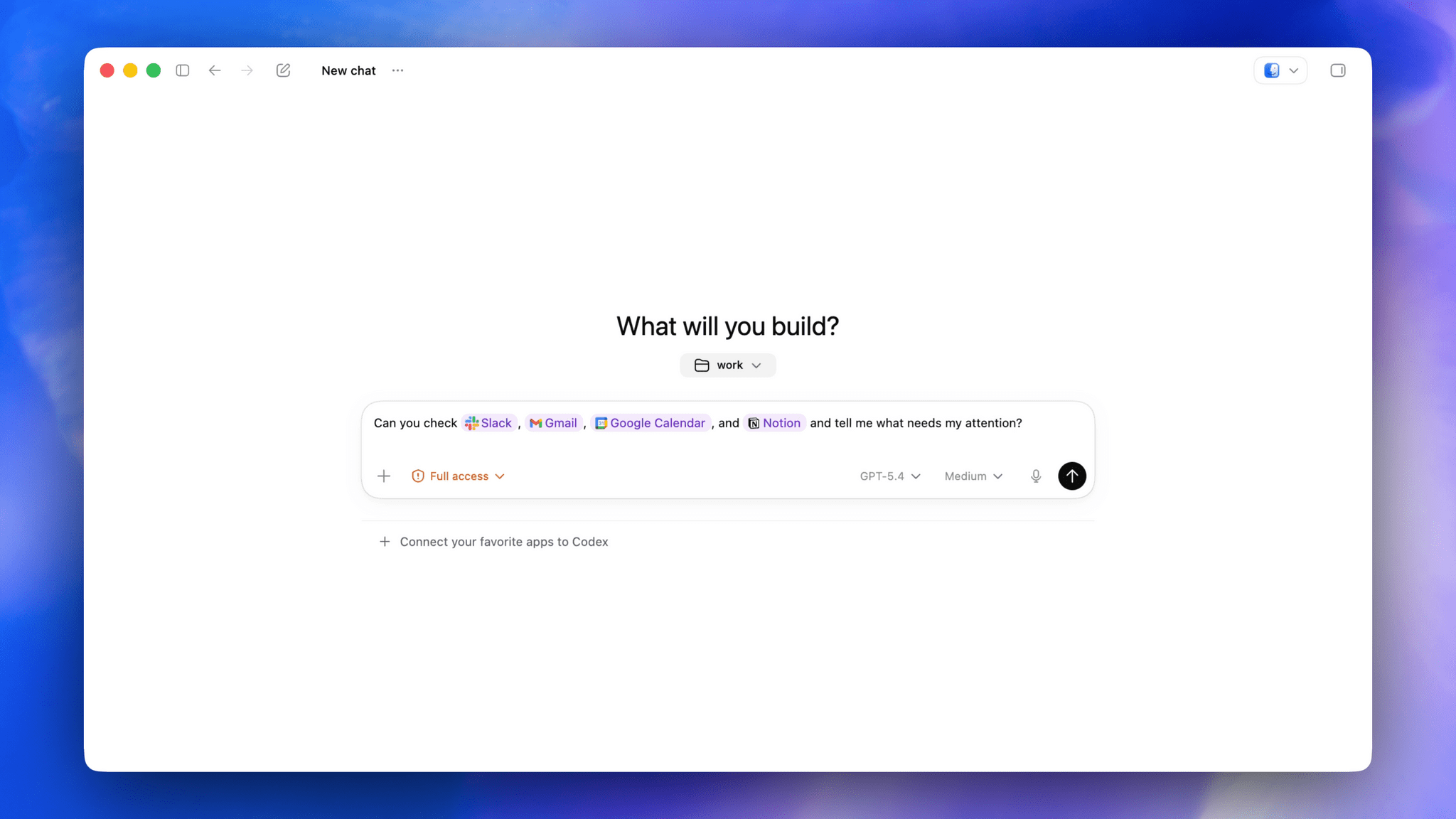

The exploit capabilities of Claude Mythos Preview reportedly emerged as a downstream consequence of improved code reasoning and agentic autonomy rather than explicit exploit training. Key reported metrics include a 93.9% score on SWE-bench Verified versus 80.8% for Claude Opus 4.6, and an internal benchmark in which Mythos produced 181 working shell exploits where Opus 4.6 produced only 2. Across OSS-Fuzz-like targets, Mythos reached tier-5 control-flow hijack on 10 fully patched targets. In response, OpenAI expanded its Trusted Access for Cyber program and rolled out GPT-5.4-Cyber, a fine-tuned GPT-5.4 variant that relaxes guardrails for vetted defenders and adds capabilities such as binary reverse engineering.
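To put the reported numbers side by side, the short sketch below computes the SWE-bench Verified gap in percentage points and the ratio of working exploits. It uses only the figures quoted above; the variable names are illustrative, and because the size of the internal exploit benchmark is not reported, no success rate can be derived from these counts.

```python
# Figures as reported in this article; nothing here is independently measured.
swe_bench_verified = {"Claude Mythos Preview": 93.9, "Claude Opus 4.6": 80.8}
working_exploits = {"Claude Mythos Preview": 181, "Claude Opus 4.6": 2}

# Gap on SWE-bench Verified, in percentage points (rounded to avoid float noise).
point_gap = round(
    swe_bench_verified["Claude Mythos Preview"] - swe_bench_verified["Claude Opus 4.6"], 1
)

# How many times more working exploits Mythos produced on the internal benchmark.
exploit_ratio = working_exploits["Claude Mythos Preview"] / working_exploits["Claude Opus 4.6"]

print(f"SWE-bench Verified gap: {point_gap:.1f} percentage points")  # 13.1
print(f"Working-exploit ratio: {exploit_ratio:.1f}x")                # 90.5x
```

The contrast is the point: a gap of about 13 points on a general coding benchmark sits alongside a roughly 90-fold difference in working exploits.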

Technical implications for practitioners

- Mythos-style models accelerate end-to-end vulnerability discovery, exploit chaining, and PoC generation, compressing timelines from discovery to weaponization.

- Defensive tooling will need to adapt: automated triage, exploitability scoring, and upstream patch pipelines must scale to handle orders-of-magnitude more findings.

- Access models diverge: Anthropic uses tight partner gating via Project Glasswing, while others, like OpenAI, favor broader, verified access for defenders; each approach changes attacker-defender dynamics.
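As a rough illustration of the automated triage and exploitability scoring mentioned above, the sketch below collapses duplicate findings and ranks the rest. Everything in it is hypothetical: the `Finding` fields, the `fingerprint` dedup key, the CVSS-like severity scale, and the bonus weights are assumptions chosen for the example, not any vendor's scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    fingerprint: str        # hypothetical dedup key, e.g. a hash of the crash site
    severity: float         # assumed CVSS-like base score, 0.0 to 10.0
    has_working_poc: bool   # a working proof-of-concept exploit exists
    internet_exposed: bool  # the affected component is reachable from the internet


def priority(f: Finding) -> float:
    """Illustrative score: severity plus fixed bonuses (weights are arbitrary)."""
    score = f.severity
    if f.has_working_poc:
        score += 3.0
    if f.internet_exposed:
        score += 2.0
    return score


def triage(findings: list[Finding], top_n: int) -> list[Finding]:
    """Collapse duplicates by fingerprint, then return the top_n by priority."""
    best: dict[str, Finding] = {}
    for f in findings:
        current = best.get(f.fingerprint)
        # Keep only the highest-priority report for each fingerprint.
        if current is None or priority(f) > priority(current):
            best[f.fingerprint] = f
    # Highest priority first; finding_id breaks ties deterministically.
    ranked = sorted(best.values(), key=lambda f: (-priority(f), f.finding_id))
    return ranked[:top_n]


if __name__ == "__main__":
    queue = [
        Finding("F-1", "fp-a", 7.5, False, True),
        Finding("F-2", "fp-b", 8.8, True, True),
        Finding("F-3", "fp-a", 7.5, True, True),   # same bug as F-1, now with a PoC
        Finding("F-4", "fp-c", 9.1, False, False),
    ]
    for f in triage(queue, top_n=2):
        print(f.finding_id, f"{priority(f):.1f}")
```

In this toy queue, the finding with the highest raw severity (F-4) falls below two findings that have working exploits and internet exposure, which is the kind of reordering an exploitability score is meant to produce. A real pipeline would more likely start from an established scoring input than from hand-picked weights.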

Context and significance

The Mythos episode marks a clear dual-use inflection point in code-model capability. Tools that substantially improve software reasoning also enable autonomous exploit generation. That tradeoff elevates risk across critical infrastructure, finance, and the software supply chain and forces new governance and disclosure norms. Regulators and industry leaders are now coordinating directly with developers, signaling that vulnerability discovery by AI will be an explicit policy and operational concern. The defensive community gains powerful new instruments, but the asymmetry in who controls access will shape whether these instruments harden systems or create new attack surfaces.

What to watch

Will adversaries reproduce Mythos-like capabilities or weaponize derivatives? How will coordinated disclosure scale when AI produces thousands of findings, and will governments mandate disclosure, escrowed access, or operational controls? Expect an industry push to standardize exploitability labeling, rapid hotfix pipelines, and verification-based access programs.

Bottom line

Mythos proves that frontier code models can flip from assistive vulnerability finding to autonomous exploit generation. That capability forces immediate governance, operational, and product decisions for vendors, defenders, and policymakers. Organizations should assume a higher cadence of AI-discovered findings and prioritize automation in triage, patching, and incident response.

Scoring Rationale

This story reveals a major dual-use capability: a model that autonomously finds and exploits zero-days alters attacker-defender economics and triggers regulatory attention. It is industry-shaking, with direct operational consequences for security teams, vendors, and policymakers.
