Uber Re-architects Data Lake Ingestion Platform

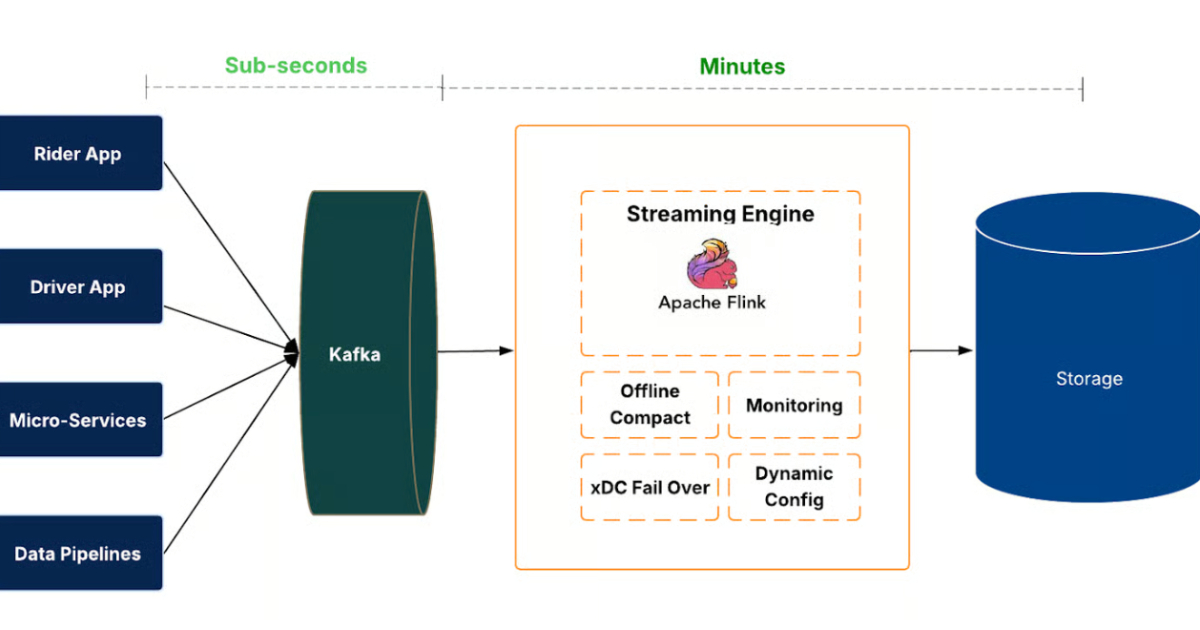

Uber engineers re-architected the company's data lake ingestion platform, replacing scheduled Spark batch jobs with a streaming-first system named IngestionNext. The new pipeline uses Kafka, Flink, and Apache Hudi to reduce ingestion latency from hours to minutes, support thousands of datasets, and cut compute usage by roughly 25%. A control plane, compaction strategies, and failover mechanisms maintain correctness and availability.
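Streaming writers commit often, so they tend to leave many small files behind, which is the usual reason a pipeline like this needs compaction. The sketch below illustrates the general idea with a greedy first-fit grouping of small files into batches under a target size. It is not Uber's or Hudi's actual algorithm; the function name `plan_compaction` and the 128 MB target are assumptions made for illustration.

```python
from typing import List


def plan_compaction(file_sizes_mb: List[int], target_mb: int = 128) -> List[List[int]]:
    """Group small files into batches whose combined size stays within target_mb.

    Hypothetical helper: greedy first-fit over sizes sorted in descending order.
    """
    groups: List[List[int]] = []
    for size in sorted(file_sizes_mb, reverse=True):
        if size >= target_mb:
            # File already meets the target size; rewriting it gains nothing.
            continue
        for group in groups:
            if sum(group) + size <= target_mb:
                group.append(size)
                break
        else:
            # No existing batch has room, so start a new one.
            groups.append([size])
    # A batch with a single file would just be rewritten as-is, so drop it.
    return [g for g in groups if len(g) > 1]


if __name__ == "__main__":
    print(plan_compaction([10, 20, 130, 100, 5]))
```

With the sample input, the 130 MB file is left alone, and the 100, 20, and 5 MB files are grouped into one batch; the 10 MB file does not fit and stays uncompacted until a later pass.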

Scoring Rationale

Strong enterprise engineering with measurable latency and cost benefits; limited novelty beyond established streaming-first patterns.
