Skymizer Announces HTX301 Single-Card 700B Inference

Per Skymizer's announcement and a PR Newswire release, Taiwanese startup Skymizer unveiled the HTX301 inference chip and the HyperThought platform, claiming a single PCIe card (six HTX301 chips, 384 GB memory) can run 700B-parameter LLM inference at about 240W. Independent tech outlets including TechRadar and Wccftech report the design uses older 28 nm process nodes and standard LPDDR4/LPDDR5 memory rather than HBM/GDDR, and note claimed compression and performance metrics (for example, 30 tokens/sec at 0.5 TOPS and KV/weight compression gains reported versus llama.cpp). Reporting frames the announcement as a challenge to GPU-based on-prem inference stacks. Editorial analysis: the claims are technically provocative but currently rely on vendor-provided figures and pre-release materials.

What happened

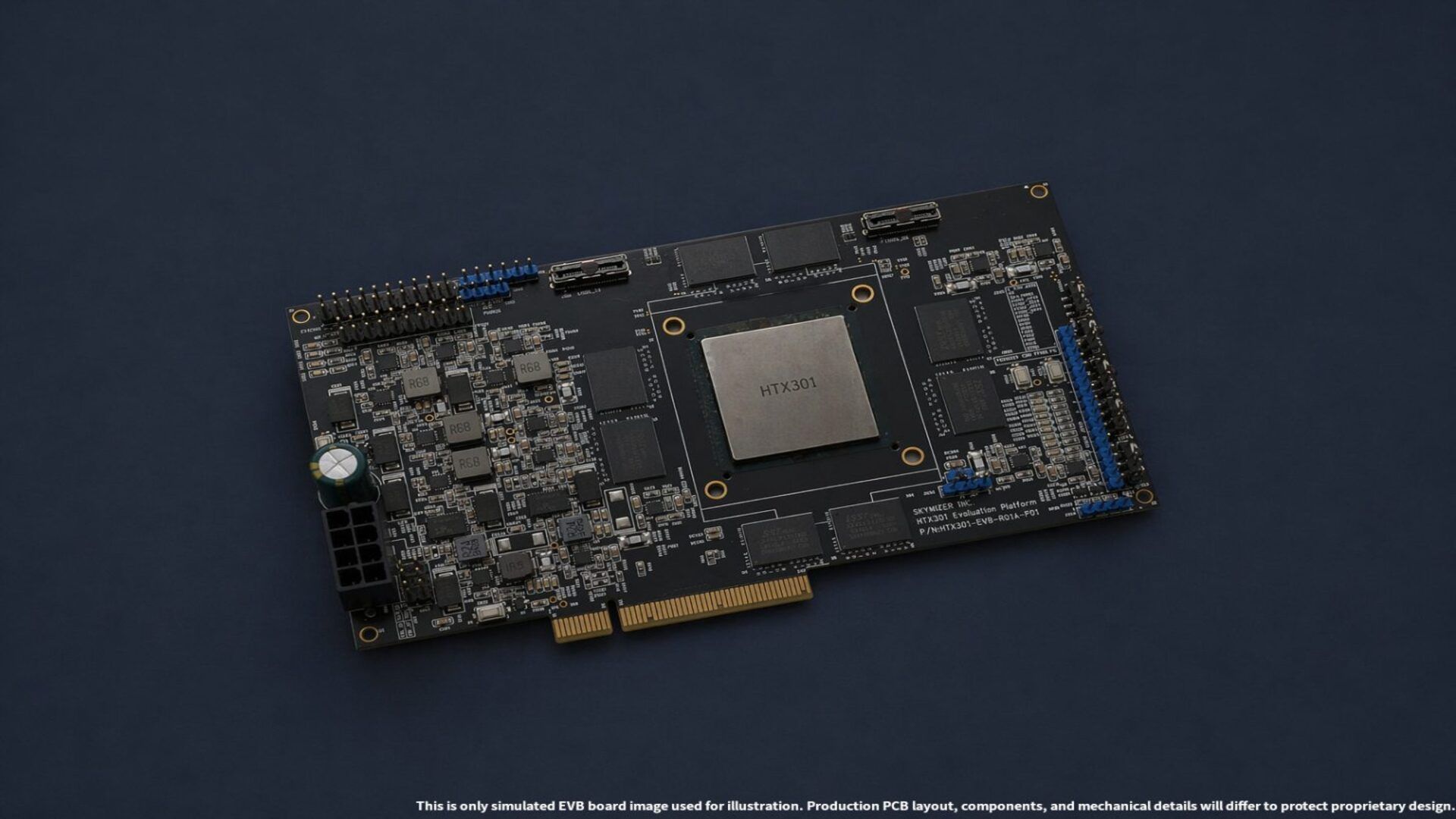

Per Skymizer's announcement and a PR Newswire release, Skymizer unveiled the HTX301 inference chip and the HyperThought hardware/software platform, presented as a reference design for on-prem inference. The company and the press release state that a single PCIe card populated with six HTX301 chips and 384 GB of memory can execute 700B-parameter model inference at roughly 240W per card (PR Newswire; Skymizer announcement). Press coverage from TechRadar and Wccftech reports additional vendor claims about process node and memory choices, specifically the use of 28 nm silicon and LPDDR4/LPDDR5 rather than HBM or GDDR (TechRadar; Wccftech).
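Editorial analysis: the announced figures allow a rough sanity check of what the memory claim implies. The sketch below is a back-of-envelope calculation, not a vendor method. It assumes 1 GB = 10^9 bytes and that all 384 GB is available for weights, ignoring KV cache, activations, and runtime overhead; the function names are illustrative.

```python
# Back-of-envelope check using only the announced card-level figures.
# Assumptions (not from the source): 1 GB = 10**9 bytes, and all card
# memory holds weights (no KV cache, activations, or runtime overhead).

def bits_per_param_budget(memory_gb: float, params_billion: float) -> float:
    """Maximum average bits per parameter that fit in the given memory."""
    total_bits = memory_gb * 1e9 * 8
    return total_bits / (params_billion * 1e9)


def per_chip(card_total: float, chips: int) -> float:
    """Evenly divide a card-level figure across the chips on the card."""
    return card_total / chips


budget = bits_per_param_budget(384, 700)   # announced memory and model size
mem_per_chip = per_chip(384, 6)            # GB per chip if split evenly
power_per_chip = per_chip(240, 6)          # W per chip if split evenly

print(f"{budget:.2f} bits/param, {mem_per_chip:.0f} GB/chip, {power_per_chip:.0f} W/chip")
```

Under these assumptions the weights would have to average below roughly 4.4 bits per parameter. For comparison, 16-bit weights for a 700B-parameter model would need about 1,400 GB, which is consistent with the announcement's emphasis on compression. Any memory reserved for KV cache tightens the budget further.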

Technical details

Per Skymizer and reporting, the HyperThought architecture emphasises a decode-first approach and disaggregates prefill and decode workloads, pairing purpose-built LPU IP with a software orchestration layer. Skymizer's materials claim compression techniques for model weights and KV cache that outperform open-source baselines, with quoted ranges such as 9% to 17.8% weight-compression improvement versus llama.cpp and KV-cache perplexity loss in the low-percentage range (Wccftech; Skymizer announcement). Tech outlets cite vendor-published microbenchmarks including figures like 30 tokens/second at 0.5 TOPS and bandwidth claims around 100 GB/s (TechRadar; Wccftech). These numerical claims are vendor-sourced or drawn from early reporting; the coverage reviewed here contains no independent third-party benchmarks.
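Editorial analysis: the quoted microbenchmark figures can be related to one another with simple arithmetic. The sketch below assumes that decode is limited by memory bandwidth, that the full quoted bandwidth is usable, and that the 30 tokens/second, 0.5 TOPS, and 100 GB/s figures describe the same configuration. None of these assumptions is confirmed by the sources, which do not state the model used or whether the figures are per chip or per card.

```python
# Relates the quoted throughput, compute, and bandwidth figures.
# Assumptions (not from the source): the three figures describe the same
# setup, and 100% of the quoted bandwidth and compute is usable.

def implied_bytes_per_token(bandwidth_gb_s: float, tokens_per_s: float) -> float:
    """Upper bound on bytes that can be read from memory per generated token."""
    return bandwidth_gb_s * 1e9 / tokens_per_s


def implied_ops_per_token(tops: float, tokens_per_s: float) -> float:
    """Upper bound on operations available per generated token."""
    return tops * 1e12 / tokens_per_s


bytes_tok = implied_bytes_per_token(100, 30)
ops_tok = implied_ops_per_token(0.5, 30)

print(f"{bytes_tok / 1e9:.2f} GB read per token (upper bound)")
print(f"{ops_tok / 1e9:.2f} G-ops per token (upper bound)")
```

Read this way, the figures leave room for at most about 3.3 GB of memory traffic and about 16.7 billion operations per generated token. Which model and precision those budgets correspond to is exactly the kind of detail that independent benchmarks would need to establish.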

Context and significance

Editorial analysis: public reporting frames the HTX301 announcement as an attempt to reduce reliance on multi-GPU clusters, NVLink/NVSwitch interconnects, and high-bandwidth memories for very large LLM inference. Companies promoting decode-optimised silicon and compressed KV/weight representations argue such designs can lower end-to-end power and infrastructure cost for certain inference workloads. Industry context: similar trade-offs have appeared in prior accelerator projects that trade peak TOPS for efficiency via compression, reduced-precision math, and workload-specific pipelines. The claim set here combines older process-node silicon with heavy compression and orchestration. If validated on realistic workloads and model families by independent testing, that combination would change some deployment economics for on-prem enterprise inference.

Limits of the current record

What is reported is primarily company-provided specifications and selected microbenchmarks, amplified by tech press coverage (PR Newswire; Skymizer site; TechRadar; Wccftech). Reporting does not include independent third-party benchmarks, long-duration reliability data, denoised latency distributions for large-vocabulary tasks, or details on software compatibility with major model runtimes beyond vendor descriptions. For the highest-stakes claims (single-card 700B inference at 240W and the memory/compression trade-offs), the sources are explicit that these are vendor announcements (Skymizer announcement; PR Newswire). The announcement includes executive quotations such as "Inference has become the dominant AI workload, and infrastructure needs to reflect that reality," attributed to William Wei, Chief Marketing Officer (Skymizer announcement; PR Newswire).

What to watch

Editorial analysis: observers should look for independent benchmarks from neutral labs or community projects comparing the HTX301 card to contemporary GPU accelerators on key axes: end-to-end inference latency, real-world throughput on common LLM families, memory-residency behaviour for KV cache, quantization and compression-induced accuracy regressions, and sustained power under production workloads. Additional signals include details on software ecosystem support (runtimes, frameworks, tokenizer compatibility), availability of hardware samples for partners, and vendor-provided reproducible benchmark artifacts. Media coverage and vendor materials to date focus on specifications and microbenchmarks; the transition to production adoption will require ecosystem integration and stability data (TechRadar; Wccftech; PR Newswire).
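Editorial analysis: a reproducible benchmark artifact would need to report at least latency percentiles, throughput, and energy efficiency from the same runs. The sketch below shows one way such a summary could be computed. The `Run` record, its field names, and the sample values are hypothetical and synthetic; they are not measurements of the HTX301 or of any real hardware, and the throughput figure assumes requests are served one at a time.

```python
import math
from dataclasses import dataclass


@dataclass
class Run:
    """One benchmark request (hypothetical record layout)."""
    latency_s: float   # end-to-end latency of the request, in seconds
    tokens_out: int    # tokens generated for the request
    energy_j: float    # energy drawn during the request, in joules


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(runs: list[Run]) -> dict[str, float]:
    """Aggregate latency, throughput, and energy metrics over sequential runs."""
    latencies = [r.latency_s for r in runs]
    total_tokens = sum(r.tokens_out for r in runs)
    total_time = sum(latencies)            # valid only for non-overlapping requests
    total_energy = sum(r.energy_j for r in runs)
    return {
        "p50_s": percentile(latencies, 50),
        "p95_s": percentile(latencies, 95),
        "tokens_per_s": total_tokens / total_time,
        "tokens_per_joule": total_tokens / total_energy,
        "avg_power_w": total_energy / total_time,
    }


# Synthetic sample data, for illustration only.
sample = [Run(1.0, 30, 240.0), Run(2.0, 30, 480.0),
          Run(3.0, 30, 720.0), Run(4.0, 30, 960.0)]
summary = summarize(sample)
print(summary)
```

Reporting tokens per joule alongside tail latency makes it harder for a single headline number, such as peak tokens per second, to hide poor sustained behaviour.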

Overall, the announcement is notable for its combination of claims: single-card support for 700B-parameter models, use of older 28 nm process technology, and an emphasis on compression and decode-first orchestration. Those claims are currently vendor-originated and await independent verification before the broader AI infrastructure community can assess practical performance and cost trade-offs.

Scoring Rationale

The claim of single-card **700B** inference at **~240W** challenges prevailing GPU-centric on-prem deployments and is therefore notable for practitioners assessing infrastructure options. The story is primarily vendor-sourced and lacks independent benchmarks, so its practical impact remains to be validated.
