OpenAI Adds WebSocket Mode to Responses API

OpenAI has introduced a WebSocket-based execution mode for its Responses API, according to an OpenAI technical blog post (April 22, 2026). The persistent, bidirectional connection is intended to reduce network round-trips in multi-step agentic workflows. OpenAI reports end-to-end speedups of up to 40% and says that, combined with model and infrastructure updates, the change let users see model throughput rise from 65 tokens per second (TPS) to nearly 1,000 TPS (OpenAI blog). Industry coverage from PANews, KuCoin, and Binance, summarizing developer documentation, adds that the WebSocket mode supports incremental input, is compatible with Zero Data Retention (ZDR) patterns, and currently limits single connections to 60 minutes.

What happened

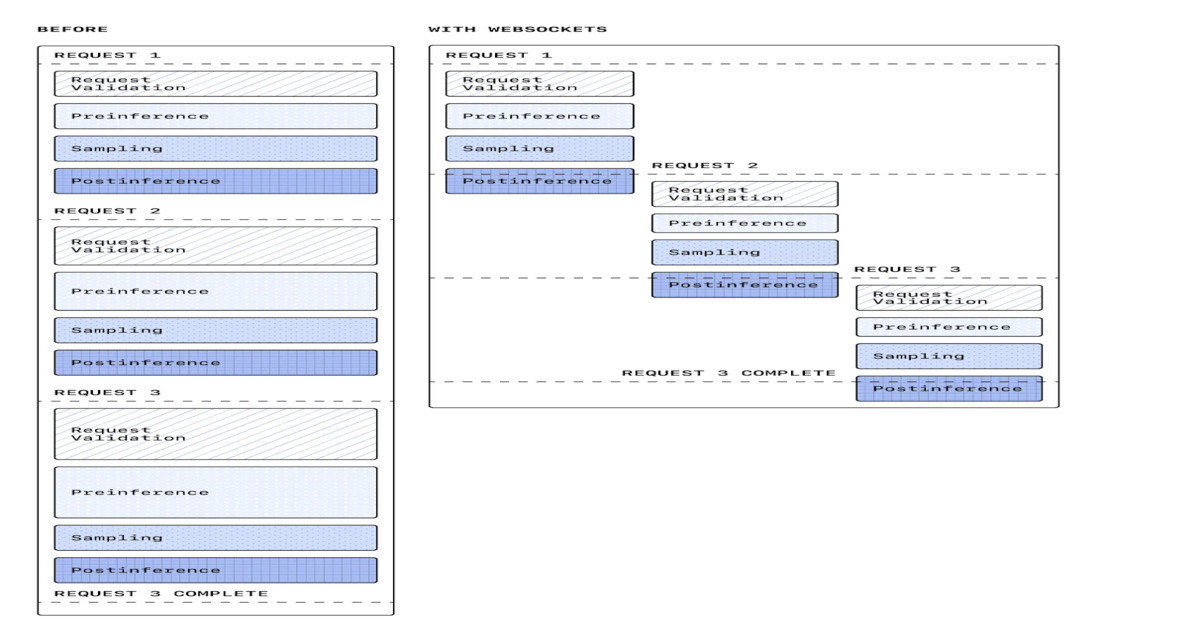

OpenAI announced the WebSocket-based execution mode for the Responses API in a technical blog post by Brian Yu and Ashwin Nathan (April 22, 2026). Per OpenAI, the new mode replaces repeated HTTP request-response cycles with a long-lived, bidirectional connection, cutting API-level overhead in multi-step agentic workflows. OpenAI reports end-to-end latency improvements of up to 40% in early production use, and says that, together with model and hardware improvements, users saw model throughput rise from 65 tokens per second to nearly 1,000 tokens per second (OpenAI blog). InfoQ and OpenAI documentation also cite sustained throughput of around 1,000 TPS in some production tests, with bursts of up to 4,000 TPS (InfoQ).

Technical details

According to documentation and coverage, the WebSocket mode maintains server-side conversation state and is organized around items and response.create events, which enables incremental input and streaming output. The blog attributes much of the improvement to three changes: avoiding repeated network handshakes, caching rendered tokens and model configuration in memory, and eliminating intermediate network hops. OpenAI ties the transport change to a performance sprint that accompanied faster coding models such as GPT-5.3-Codex-Spark and specialized inference hardware (OpenAI blog).
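To make "incremental input" concrete, here is a minimal client-side sketch. It assumes a hypothetical JSON event shape in which each turn sends a response.create event containing only the new input items plus the previous_response_id of the last completed response, instead of replaying the full transcript. The IncrementalSession class, the field layout, and the model name are illustrative assumptions, not OpenAI's documented schema, and no network I/O is shown.

```python
import json
from typing import Any, Optional


class IncrementalSession:
    """Hypothetical client-side helper: remembers the last response id so each
    turn ships only new input items. The event shape is an assumption, not the
    official Responses API schema."""

    def __init__(self, model: str) -> None:
        self.model = model
        self.last_response_id: Optional[str] = None

    def build_create_event(self, new_items: list[dict[str, Any]]) -> str:
        # Only items added since the previous turn are included.
        event: dict[str, Any] = {
            "type": "response.create",
            "model": self.model,
            "input": new_items,
        }
        # Chain onto state the server already holds instead of resending history.
        if self.last_response_id is not None:
            event["previous_response_id"] = self.last_response_id
        return json.dumps(event)

    def on_response_completed(self, response_id: str) -> None:
        # Record the finished response so the next turn can reference it.
        self.last_response_id = response_id


if __name__ == "__main__":
    session = IncrementalSession(model="example-model")  # hypothetical model name
    print(session.build_create_event([{"role": "user", "content": "List the repo files"}]))
    session.on_response_completed("resp_123")  # hypothetical id from a completion event
    print(session.build_create_event([{"role": "user", "content": "Open README"}]))
```

The design choice being illustrated is that the payload size per turn stays proportional to the new input, not to the whole conversation.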

Reported limits and compatibility

Reporting by PANews, along with summaries of the developer documentation by KuCoin and Binance, notes that the WebSocket mode supports incremental input, can work with Zero Data Retention (ZDR) approaches for low-latency context continuation via previous_response_id, and currently caps a single WebSocket connection at 60 minutes (PANews / KuCoin / Binance summaries of developer docs).
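Because a single connection is reportedly capped at 60 minutes, a client will likely want to rotate it proactively between turns rather than be cut off mid-response. The sketch below assumes a hypothetical two-minute safety margin; should_rotate and time_until_rotation are illustrative helpers, not part of any OpenAI SDK.

```python
from datetime import datetime, timedelta

# Reported per-connection cap from coverage of the developer docs.
MAX_CONNECTION_AGE = timedelta(minutes=60)
# Hypothetical margin so rotation happens between turns, not mid-response.
ROTATION_MARGIN = timedelta(minutes=2)


def should_rotate(opened_at: datetime, now: datetime,
                  max_age: timedelta = MAX_CONNECTION_AGE,
                  margin: timedelta = ROTATION_MARGIN) -> bool:
    """True once the connection is within `margin` of the age cap."""
    return now - opened_at >= max_age - margin


def time_until_rotation(opened_at: datetime, now: datetime,
                        max_age: timedelta = MAX_CONNECTION_AGE,
                        margin: timedelta = ROTATION_MARGIN) -> timedelta:
    """Time left before the client should open a fresh connection (never negative)."""
    remaining = (opened_at + max_age - margin) - now
    return max(remaining, timedelta(0))


if __name__ == "__main__":
    opened = datetime(2026, 4, 22, 12, 0, 0)
    check = opened + timedelta(minutes=50)
    print(should_rotate(opened, check), time_until_rotation(opened, check))
```

A client would typically call should_rotate after each completed turn and, when it returns True, open a new connection and continue from the last response id.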

Editorial analysis: latency and transport matter now

Industry context

As inference speeds rise, transport- and orchestration-level overhead becomes a larger fraction of end-to-end latency. Companies building agentic systems increasingly rely on event-driven, stateful protocols to avoid repeated cold starts and round-trips. The pattern is visible across realtime voice, multimodal, and tool-enabled agents, where per-step HTTP calls add up to perceptible latency.
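A back-of-the-envelope calculation shows why. The 65 and 1,000 TPS figures come from OpenAI's reported numbers; the 500-token step and the 0.3-second fixed per-step overhead are hypothetical values chosen only for illustration.

```python
def generation_seconds(tokens: int, tps: float) -> float:
    """Time to decode `tokens` at a steady `tps` tokens per second."""
    return tokens / tps


def overhead_fraction(tokens: int, tps: float, overhead_s: float) -> float:
    """Share of one step's wall-clock time spent on fixed, non-model overhead."""
    gen = generation_seconds(tokens, tps)
    return overhead_s / (overhead_s + gen)


if __name__ == "__main__":
    STEP_TOKENS = 500   # hypothetical output length of one agent step
    OVERHEAD_S = 0.3    # hypothetical fixed transport/orchestration cost per step
    for tps in (65, 1000):  # throughput figures reported by OpenAI
        share = overhead_fraction(STEP_TOKENS, tps, OVERHEAD_S)
        print(f"{tps:>5} TPS: decode {generation_seconds(STEP_TOKENS, tps):.2f}s, "
              f"overhead share {share:.1%}")
```

Under these assumptions the same fixed overhead is about 3.8% of a step at 65 TPS but 37.5% at 1,000 TPS, so a cost that was negligible becomes a major share once decoding speeds up.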

Editorial analysis: implications for agent architectures

For practitioners: Moving from synchronous HTTP calls to a persistent wss:// connection simplifies incremental input, streaming, and tool-call coordination, which cuts overhead in long chains of tool calls. Developers integrating streaming audio, speech-to-text or text-to-speech (STT/TTS), or multi-tool orchestration will likely see the largest benefits, because these workloads multiply per-hop costs.
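The compounding effect can be made concrete with a deliberately simplified cost model. All three constants are hypothetical, and the model assumes the HTTP path pays connection setup and context re-processing on every step (no keep-alive or caching), which overstates the gap for clients that already reuse connections. It is meant only to show why per-hop costs scale with chain length.

```python
from dataclasses import dataclass


@dataclass
class TransportModel:
    """Hypothetical per-step costs in seconds (illustrative, not measured)."""
    setup_s: float    # connection setup: TCP/TLS handshake, auth
    rtt_s: float      # one network round trip per message
    context_s: float  # re-sending and re-processing prior context per request


def http_overhead(steps: int, m: TransportModel) -> float:
    # Worst case: every step sets up a fresh request path and resends context.
    return steps * (m.setup_s + m.rtt_s + m.context_s)


def websocket_overhead(steps: int, m: TransportModel) -> float:
    # Setup is paid once; later steps send only incremental messages
    # against state the server already holds.
    return m.setup_s + steps * m.rtt_s


if __name__ == "__main__":
    model = TransportModel(setup_s=0.15, rtt_s=0.05, context_s=0.10)
    for steps in (1, 5, 20):
        print(f"{steps:>2} steps: HTTP {http_overhead(steps, model):.2f}s, "
              f"WebSocket {websocket_overhead(steps, model):.2f}s")
```

With these made-up constants, a 20-step chain spends 6.0 s on overhead over per-request HTTP versus 1.15 s over a persistent connection; the absolute numbers mean little, but the linear-versus-amortized shape is the point.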

Industry context: operational and safety trade-offs

Persistent connections shift operational concerns from per-request scaling to connection lifecycle management, backpressure, and state recovery. Teams must consider how to checkpoint and resume conversations, enforce connection duration limits like the documented 60-minute cap, and reconcile persistent state with data-retention and privacy requirements. Reporting also highlights compatibility with ZDR patterns, which can ease compliance in privacy-sensitive deployments (PANews / KuCoin).
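Checkpoint-and-resume can be sketched as a retry loop that persists the last completed response id and, after a dropped connection, retries with exponential backoff and full jitter, passing that id back so the next attempt chains onto the stored state. The send_turn callable, the ConnectionLost exception, and the dict used as a checkpoint store are hypothetical stand-ins for a real transport and durable storage; whether a stored id remains usable after a disconnect is an assumption to verify against OpenAI's documentation.

```python
import random
import time
from typing import Callable, Optional


class ConnectionLost(Exception):
    """Hypothetical error a transport raises when the socket drops."""


def run_with_resume(
    send_turn: Callable[[Optional[str]], str],
    checkpoint: dict,
    max_retries: int = 5,
    base_delay_s: float = 0.5,
    max_delay_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Run one turn, resuming from the last checkpointed response id on failure.

    send_turn(previous_response_id) is a hypothetical callable that (re)opens a
    connection, sends the turn, and returns the id of the completed response.
    """
    attempt = 0
    while True:
        try:
            response_id = send_turn(checkpoint.get("last_response_id"))
        except ConnectionLost:
            if attempt >= max_retries:
                raise  # give up and surface the error to the caller
            # Exponential backoff with full jitter, capped at max_delay_s.
            sleep(random.uniform(0, min(max_delay_s, base_delay_s * 2 ** attempt)))
            attempt += 1
            continue
        # Persist only after the turn completed, so the checkpoint never
        # points at a response the server did not finish.
        checkpoint["last_response_id"] = response_id
        return response_id
```

Injecting sleep keeps the loop testable without real delays; in production the checkpoint dict would be replaced by whatever durable store the team already uses.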

What to watch

For practitioners: Watch the official OpenAI developer documentation for SDK examples, for connection lifecycle and event primitives (session.update, item, response.create), and for explicit guidance on reconnection and previous_response_id-based context resumption. Measure latency and throughput on real workloads, especially chains of 20 or more sequential tool calls, where OpenAI and partners reported the largest relative gains. Also watch whether cloud providers and open-source toolkits adopt similar persistent-connection patterns, and whether platform clients provide robust reconnection and state-sync primitives.
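For measuring real workloads, a small summary over per-turn samples is enough to compare transports. This sketch assumes you already record each turn's wall-clock latency and output token count (TurnSample is a hypothetical record type). It reports p50 and p95 latency via the standard library's statistics.quantiles and an aggregate end-to-end tokens-per-second figure, which includes transport and tool time and so is not the same as the model's decode rate.

```python
import statistics
from dataclasses import dataclass


@dataclass
class TurnSample:
    latency_s: float    # wall-clock time for one turn, send to final event
    output_tokens: int  # tokens generated in that turn


def summarize(samples: list[TurnSample]) -> dict[str, float]:
    """p50/p95 turn latency and end-to-end tokens per second (needs >= 2 samples)."""
    latencies = [s.latency_s for s in samples]
    # n=20 yields 19 cut points: index 9 is the 50th percentile, index 18 the 95th.
    cuts = statistics.quantiles(latencies, n=20, method="inclusive")
    total_tokens = sum(s.output_tokens for s in samples)
    return {
        "p50_s": cuts[9],
        "p95_s": cuts[18],
        "tokens_per_s": total_tokens / sum(latencies),
    }


if __name__ == "__main__":
    demo = [TurnSample(latency_s=0.8 + 0.05 * i, output_tokens=400) for i in range(30)]
    print(summarize(demo))
```

Running the same harness once over HTTP and once over the WebSocket mode, on the same task mix, gives a like-for-like comparison rather than relying on headline figures.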

Bottom line

OpenAI has pushed a transport-layer change that, according to its blog and corroborating coverage, materially reduces end-to-end latency for agentic workflows by enabling persistent, stateful connections and incremental input. Editorial analysis: This change aligns with broader industry movement toward event-driven, stateful APIs for real-time and tool-enabled agents, and it raises practical operational and privacy questions that engineering teams should validate against their production requirements.

Scoring Rationale

The update materially improves latency and throughput for agentic workflows, which matters to practitioners building multi-step tool chains and realtime apps. It is a notable platform change rather than a frontier-model breakthrough, so it scores as a major product-level development.
