NVIDIA Adds Day-0 DeepSeek V4 Blackwell Support

Per DeepSeek and NVIDIA technical documentation, DeepSeek V4 ships as two models: DeepSeek-V4-Pro (1.6T total, 49B active parameters) and DeepSeek-V4-Flash (284B total, 13B active). Both support 1,000,000-token context windows and outputs of up to 384K tokens (NVIDIA developer blog; vLLM blog). NVIDIA is showcasing Day-0 support for the V4 family on Blackwell GPUs, and slide materials cited by Wccftech report preliminary throughput near 3,500 tokens per second per GPU on GB300/Blackwell Ultra. The V4 family introduces a hybrid attention design and memory-compression techniques to which NVIDIA attributes large reductions in per-token FLOPs and KV cache memory. The open-source runtime vLLM and other stacks report early support and ongoing optimizations (vLLM blog).

What happened

Per the DeepSeek documentation and NVIDIA developer blog, DeepSeek V4 launches as a two-model family: DeepSeek-V4-Pro with 1.6T total and 49B active parameters, and DeepSeek-V4-Flash with 284B total and 13B active parameters. Both models support context windows of up to 1,000,000 tokens and advertise outputs of up to 384K tokens (NVIDIA developer blog; vLLM blog). NVIDIA published deployment notes showing Day-0 integration paths for the V4 family on Blackwell GPUs via NIM microservices and GPU-accelerated endpoints (NVIDIA technical blog). Independent coverage of NVIDIA slide decks reports preliminary per-GPU inference throughput near 3,500 tokens per second on GB300/Blackwell Ultra hardware (Wccftech).
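To put the reported parameter counts in perspective, the short Python sketch below computes what share of each model's parameters is active per token. It uses only the total and active figures quoted above. The function name `active_fraction` is illustrative and does not come from any DeepSeek or NVIDIA tooling.

```python
# Parameter counts as reported in the article: (total, active per token).
MODELS = {
    "DeepSeek-V4-Pro": (1.6e12, 49e9),
    "DeepSeek-V4-Flash": (284e9, 13e9),
}


def active_fraction(total_params: float, active_params: float) -> float:
    """Return the share of parameters that is active for each token."""
    return active_params / total_params


for name, (total, active) in MODELS.items():
    share = active_fraction(total, active)
    print(f"{name}: {share:.2%} of parameters active per token")
```

On these figures, roughly 3% of the Pro model's parameters and under 5% of the Flash model's are active for any given token.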

Technical details

Per NVIDIA's technical blog and the vLLM implementation notes, DeepSeek V4 uses a hybrid attention architecture that combines compressed-sparse attention (a form of sparse attention) with heavier compression layers. NVIDIA claims a 73% reduction in per-token inference FLOPs and a 90% reduction in KV cache memory burden relative to prior baselines, and attributes both to the attention and KV cache compression techniques (NVIDIA developer blog). The vLLM team reports an initial implementation path that uses FP4/FP8 KV-cache dtypes, kernel fusion, and hybrid KV-cache designs to scale to the 1M-token window, and notes that further optimizations remain in progress (vllm.ai blog).
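As a rough illustration of what the claimed percentages mean at a 1M-token context, the sketch below sizes an uncompressed KV cache for a hypothetical baseline model and then applies the 90% and 73% reductions. The layer count, KV head count, and head dimension are assumptions chosen for the arithmetic, not DeepSeek V4's actual configuration. The sizing formula is the generic one for standard attention (one key and one value vector per layer per token), not a description of V4's compressed layout.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int) -> int:
    """Uncompressed KV cache size for one sequence.

    The factor of 2 accounts for storing both a key and a value
    vector per layer for every token.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# HYPOTHETICAL baseline shape, NOT DeepSeek V4's real configuration.
LAYERS, KV_HEADS, HEAD_DIM = 60, 8, 128
BYTES_FP8 = 1               # one byte per value with an FP8 KV cache
CONTEXT_TOKENS = 1_000_000  # context window reported in the article

# Reductions claimed by NVIDIA, as quoted in the article.
KV_REDUCTION = 0.90
FLOPS_REDUCTION = 0.73

baseline = kv_cache_bytes(CONTEXT_TOKENS, LAYERS, KV_HEADS, HEAD_DIM, BYTES_FP8)
compressed = baseline * (1 - KV_REDUCTION)
flops_factor = 1 / (1 - FLOPS_REDUCTION)

print(f"Hypothetical baseline KV cache: {baseline / 1e9:.2f} GB")
print(f"After a 90% reduction:          {compressed / 1e9:.2f} GB")
print(f"73% fewer FLOPs per token is about {flops_factor:.1f}x less compute")
```

Under these assumed dimensions, a 1M-token sequence drops from about 123 GB of KV cache to about 12 GB, and a 73% FLOPs reduction corresponds to roughly 3.7x less compute per token. The actual savings depend on the baseline NVIDIA measured against, which the article does not specify.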

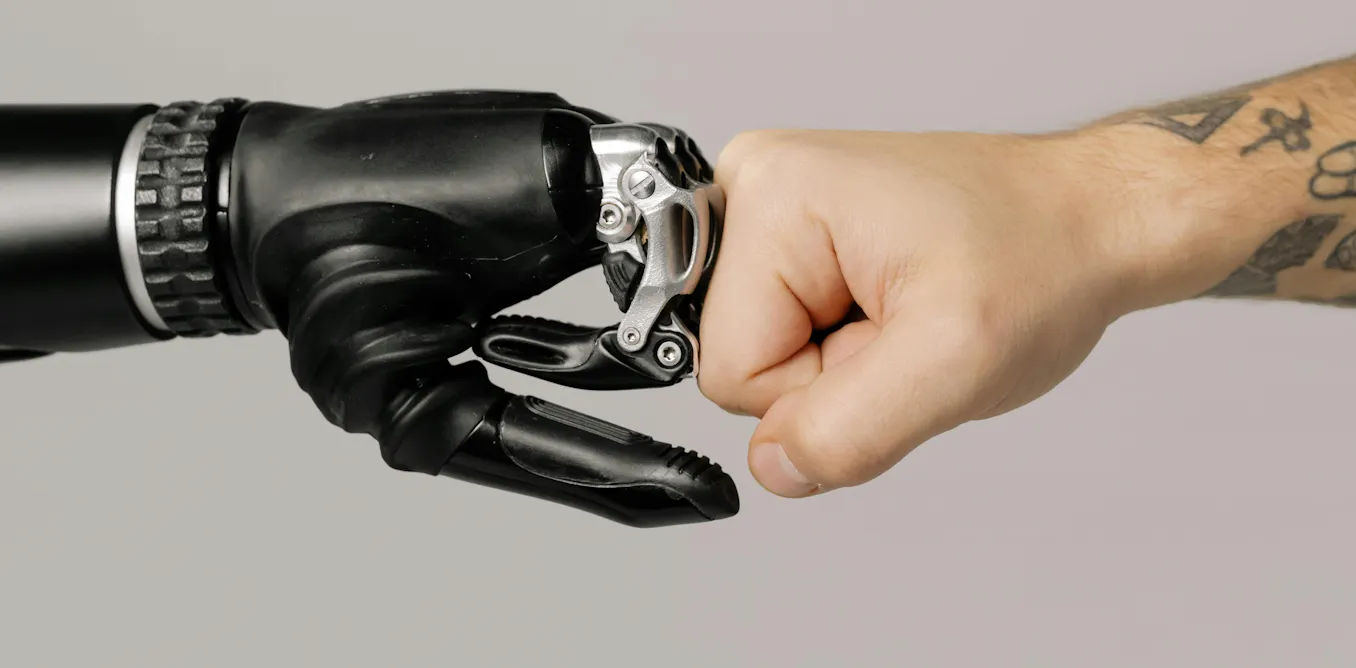

Industry context

Industry reporting frames the combination of a long-context model family and immediate Blackwell support as a concrete example of hardware and model co-design accelerating deployability for extreme-context inference. Observed patterns in similar releases show that runtime-level features such as NVFP4 indexing, tuned CUDA kernels, and prebuilt NIM recipes materially shorten time-to-deployment for large-context models across multi-GPU clusters (PauseHardware; Wccftech). For practitioners, the V4 family pushes the performance envelope for applications that require sustained, multi-hundred-thousand token state such as large-scale document search, long-form multi-step agents, and archival code reasoning.

What to watch

Industry watchers should monitor three things:

- performance/stability reports from early adopters running multi-node Blackwell clusters with the Day-0 recipes

- open-source runtime releases and benchmarks from vLLM and others as they add NVFP4/FP4 indexer and kernel fusion optimizations

- real-world latency and cost-per-token metrics as NVFP4 and Blackwell kernel improvements settle into production (Wccftech; vLLM blog; PauseHardware)
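For the cost-per-token item, a minimal sketch of the arithmetic follows. It converts a per-GPU throughput figure into tokens per GPU-hour and then into a cost per million tokens. The 3,500 tokens per second figure is the preliminary number reported via Wccftech. The hourly GPU price is a placeholder assumption, not a quoted rate, and the function names are illustrative.

```python
def tokens_per_gpu_hour(tokens_per_second: float) -> float:
    """Convert sustained per-GPU throughput into tokens per GPU-hour."""
    return tokens_per_second * 3600


def cost_per_million_tokens(gpu_hourly_cost_usd: float,
                            tokens_per_second: float) -> float:
    """GPU cost in USD to generate one million tokens at a given throughput."""
    return gpu_hourly_cost_usd / tokens_per_gpu_hour(tokens_per_second) * 1_000_000


REPORTED_TPS = 3_500          # preliminary per-GPU figure cited in the article
ASSUMED_GPU_HOURLY_USD = 10.0  # HYPOTHETICAL price, for illustration only

hourly_tokens = tokens_per_gpu_hour(REPORTED_TPS)
cost = cost_per_million_tokens(ASSUMED_GPU_HOURLY_USD, REPORTED_TPS)

print(f"Tokens per GPU-hour: {hourly_tokens:,.0f}")
print(f"Cost per 1M tokens at ${ASSUMED_GPU_HOURLY_USD:.2f}/hour: ${cost:.2f}")
```

At the reported throughput a GPU would produce 12.6 million tokens per hour, so the assumed $10 hourly price works out to about $0.79 per million tokens. The result scales linearly with whatever price and sustained throughput a deployment actually sees, and the article does not state the batch size or context length behind the 3,500 figure.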

Editorial analysis: The technical advances in attention compression and the immediate runtime plumbing for Blackwell hardware lower integration friction for one-million-token workflows. Companies and open-source runtimes that already prioritize precision-aware KV-cache formats and kernel-level fusion will see the fastest path to exploit V4 throughput gains. Observed patterns in comparable model launches indicate a staged optimization curve: initial functional support is followed by successive kernel and scheduler optimizations that drive the headline TPS and p95 latency improvements over weeks to months.

Scoring Rationale

This release pairs a long-context model family with immediate Blackwell GPU support, materially lowering deployment friction for extreme-context workloads. The story is notable for infrastructure and runtime engineering but not a paradigm-shifting model release.
