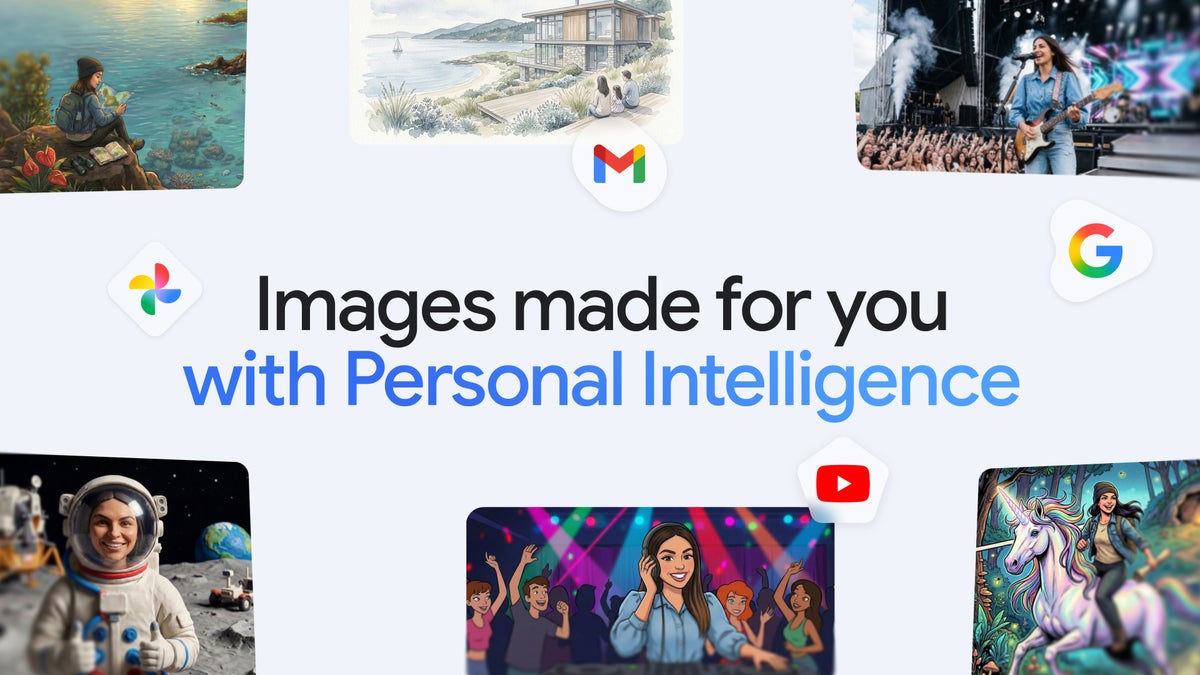

Gemini Uses Google Photos to Generate Personalized Art

Google updated the Gemini app so that Personal Intelligence can pull images and preferences from Google Photos and other connected Google services to generate personalized artwork with the image model Nano Banana 2. Eligible U.S. subscribers to AI Plus, Pro, and Ultra will see the feature in the Gemini app in the next few days, with a Chrome rollout coming soon. The system uses Google Photos labels and account signals to select reference images automatically, offers a Sources view for transparency, and lets users swap or refine reference photos. Google says it will not "directly train" core models on private photo libraries but may train on "limited info" such as prompts and responses. The change improves convenience and personalization but raises practical privacy and provenance questions for practitioners and product teams.

What happened

Google expanded the personalization capabilities of the Gemini app by connecting its Personal Intelligence feature to Google Photos, enabling the image model Nano Banana 2 to generate images that reflect a user's tastes, people, and objects without manual uploads or long prompts. The update is rolling out to U.S. subscribers of AI Plus, Pro, and Ultra in the Gemini app over the next few days, with a broader Chrome-based release planned soon. A company spokesperson, Elijah Lawal, confirmed that labels in Google Photos help identify people and reference photos for generation.

Technical details

The end-to-end flow uses account-linked signals (Search, YouTube, Gmail metadata, and now Google Photos labels and images) to construct context for image generation. Nano Banana 2 is the image model producing the outputs. When you request something personal, for example "Create a hand-drawn illustration of mom" or "Design my desert island essentials," Gemini automatically selects a reference image from your library. If the result is off, users can refine it with follow-up prompts or explicitly choose a different reference image via a "+" control. Google exposes a "Sources" view so users can inspect which images were referenced and ask for attributions.

- Automatic reference selection from Google Photos using user-applied labels and metadata
- Generation using the image model Nano Banana 2 integrated into the Gemini app
- User controls to swap reference photos, refine prompts, and view referenced sources
- Opt-in Personal Intelligence scope limited to eligible paid subscribers
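
For product teams who want to reason about this pattern, the flow described above can be sketched in a few lines. This is not Google's implementation, which has not been published. `Photo`, `GenerationRequest`, `select_reference`, and `build_request` are hypothetical names, and the matching rule (a label appearing as a word in the prompt, with ties broken by recency) is an assumption chosen only to keep the example small.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Photo:
    photo_id: str     # hypothetical identifier
    labels: set       # user-applied labels, e.g. {"mom"}
    taken_at: int     # assumed recency signal (Unix seconds)


@dataclass
class GenerationRequest:
    prompt: str
    reference: Optional[Photo]
    # IDs that a "Sources"-style view could surface to the user
    sources: list = field(default_factory=list)


def select_reference(prompt: str, library: list) -> Optional[Photo]:
    """Return the most recent photo whose label appears as a word in the prompt."""
    words = {w.strip('.,!?"').lower() for w in prompt.split()}
    matches = [p for p in library if words & {label.lower() for label in p.labels}]
    return max(matches, key=lambda p: p.taken_at, default=None)


def build_request(prompt: str, library: list,
                  override: Optional[Photo] = None) -> GenerationRequest:
    """Use the user's explicit pick if given, else fall back to automatic selection."""
    reference = override or select_reference(prompt, library)
    sources = [reference.photo_id] if reference else []
    return GenerationRequest(prompt, reference, sources)
```

Passing `override` stands in for the manual swap control: the user's choice always wins over the automatic match, and `sources` records whichever photo was actually used so it can be shown back to the user.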

Privacy and training mechanics

Google states it will not "directly train" its core models on a user's private Google Photos library, but it does collect "limited info" such as specific prompts and the model's responses to improve features. That distinction matters for practitioners assessing data governance: images referenced at inference remain part of a user's private store, but derivative signals may be used for product improvement. The product also surfaces the sources it used, which helps traceability but does not necessarily prevent downstream model-level learning from prompt-response telemetry.

Context and significance

This update leverages Google's unique access to cross-product user signals to reduce prompt engineering friction and create more contextual, user-specific generations. For product teams and ML engineers, the release exemplifies a broader trend: shifting complexity from user prompts into backend context fusion, where identity, preferences, and labeled content seed generation. That approach favors large platform players with entrenched data and account ecosystems. At the same time, it surfaces typical tensions between convenience and privacy, and between provenance and model improvement pipelines.

What to watch

Monitor how Google operationalizes the claim that it will not "directly train" on private photos, and whether regulators or privacy advocates press for stricter controls or clearer opt-outs. Practitioners should watch UX telemetry for failure modes where incorrect label-to-person matches produce misleading or harmful images, and evaluate guardrails for consent, attribution, and content safety.

Practical takeaways for practitioners

Product teams can borrow the pattern of backend context fusion to reduce user prompting, but must pair it with auditable data flows, robust identity disambiguation, and explicit UI affordances for source selection and revocation. ML teams should treat user-provided reference images as sensitive training-adjacent assets and define clear retention and telemetry policies that align with legal and ethical obligations.
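
One way to make "auditable data flows" and "revocation" concrete is a small source log, sketched below under stated assumptions. It is an in-memory illustration only: `SourceRecord`, `SourceAuditLog`, and the single `retention_seconds` window are hypothetical, and a real system would need durable storage, access control, and legal review of the retention period.

```python
from dataclasses import dataclass


@dataclass
class SourceRecord:
    generation_id: str   # hypothetical ID of one generated image
    photo_id: str        # hypothetical ID of the reference photo used
    created_at: float    # Unix seconds when the reference was used
    revoked: bool = False


class SourceAuditLog:
    """In-memory log of which reference photos fed which generations."""

    def __init__(self, retention_seconds: float) -> None:
        self.retention_seconds = retention_seconds
        self._records = []

    def record(self, generation_id: str, photo_id: str, now: float) -> None:
        self._records.append(SourceRecord(generation_id, photo_id, now))

    def revoke(self, photo_id: str) -> int:
        """Mark every use of a photo as revoked; return how many records changed."""
        changed = 0
        for rec in self._records:
            if rec.photo_id == photo_id and not rec.revoked:
                rec.revoked = True
                changed += 1
        return changed

    def purge_expired(self, now: float) -> int:
        """Drop records older than the retention window; return how many were dropped."""
        kept = [r for r in self._records
                if now - r.created_at <= self.retention_seconds]
        dropped = len(self._records) - len(kept)
        self._records = kept
        return dropped

    def active_sources(self, generation_id: str) -> list:
        """Photo IDs still attributable to a generation (not revoked, not purged)."""
        return [r.photo_id for r in self._records
                if r.generation_id == generation_id and not r.revoked]
```

Keeping the timestamp as an explicit `now` argument, rather than reading the clock inside the class, makes retention behavior easy to test and to replay during an audit.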

Scoring Rationale

The update materially improves user convenience and demonstrates a platform advantage by using cross-product signals, which is notable for product and ML teams. It is not a frontier model release, so its technical novelty and industry-wide impact are moderate. The score accounts for the product relevance and short-term rollout, minus a freshness adjustment.
