Databricks Genie Improves Trust With Benchmarks

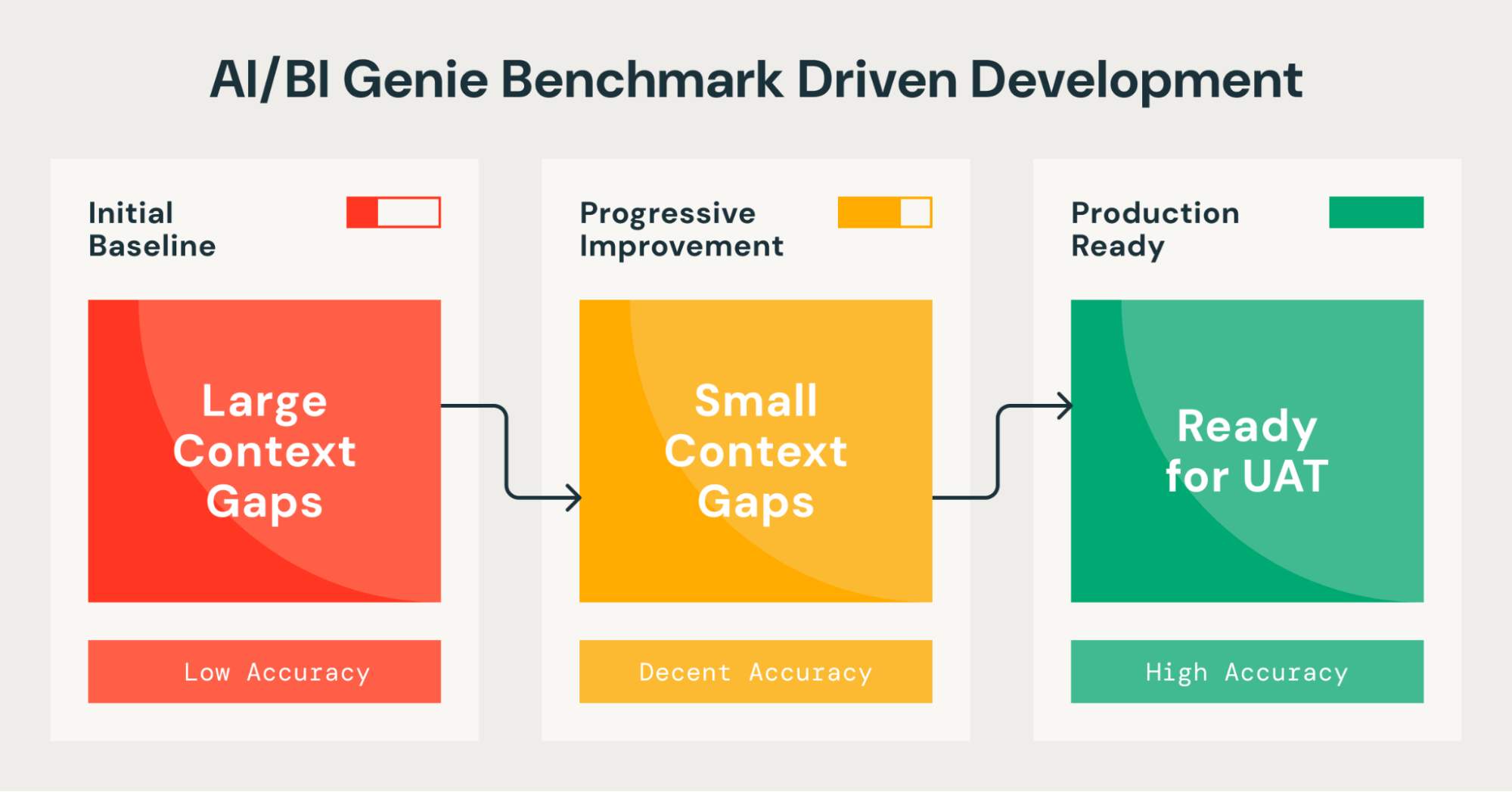

Databricks describes how Genie, its natural-language analytics feature, uses a 13-question benchmark suite to validate and improve answer accuracy for business users. In a marketing analytics example, the baseline run returned zero correct answers because of poorly named tables and missing metadata. Iterative fixes (renaming tables, improving Unity Catalog descriptions, and re-running the benchmarks) then produced systematic gains in accuracy. The goal of the process is to increase user trust in self-service analytics.
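The benchmark-then-fix loop can be illustrated with a small scoring harness. The sketch below is not Genie's API: `BenchmarkQuestion`, `score_run`, the stub answer functions, and the three sample questions are all hypothetical, and exact-match comparison after light normalization is an assumed scoring rule chosen for simplicity.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkQuestion:
    question: str
    expected: str  # ground-truth answer agreed on in advance


def normalize(answer: str) -> str:
    # Lowercase and collapse whitespace so trivial formatting
    # differences do not count as wrong answers.
    return " ".join(answer.strip().lower().split())


def score_run(questions, ask):
    """Ask every benchmark question and return (accuracy, failed questions).

    `ask` is any callable that maps a question string to an answer string;
    here it stands in for whatever system is being evaluated.
    """
    failures = [
        q.question
        for q in questions
        if normalize(ask(q.question)) != normalize(q.expected)
    ]
    correct = len(questions) - len(failures)
    accuracy = correct / len(questions) if questions else 0.0
    return accuracy, failures


# Made-up sample data, for illustration only.
questions = [
    BenchmarkQuestion("Total spend in Q1?", "1200"),
    BenchmarkQuestion("Top campaign by clicks?", "Spring Sale"),
    BenchmarkQuestion("Number of active campaigns?", "4"),
]


def baseline(question: str) -> str:
    # Stub for a run before any metadata fixes: nothing is answered correctly.
    return "unknown"


improved_answers = {
    "Total spend in Q1?": " 1200 ",
    "Top campaign by clicks?": "spring sale",
}


def improved(question: str) -> str:
    # Stub for a run after some fixes: two of the three questions now pass.
    return improved_answers.get(question, "unknown")


baseline_accuracy, baseline_failures = score_run(questions, baseline)
improved_accuracy, improved_failures = score_run(questions, improved)
print(f"baseline: {baseline_accuracy:.0%}, after fixes: {improved_accuracy:.0%}")
print("still failing:", improved_failures)
```

Re-running the same fixed question set after each change is what makes the accuracy figures comparable between runs, and the list of failing questions points to where the next metadata fix is needed.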

Scoring Rationale

Practical, vendor-backed guidance with actionable benchmarks and iterative fixes; limited novelty beyond Databricks-specific implementation and audience.
