Data Journalists Treat Documents as Quantifiable Data

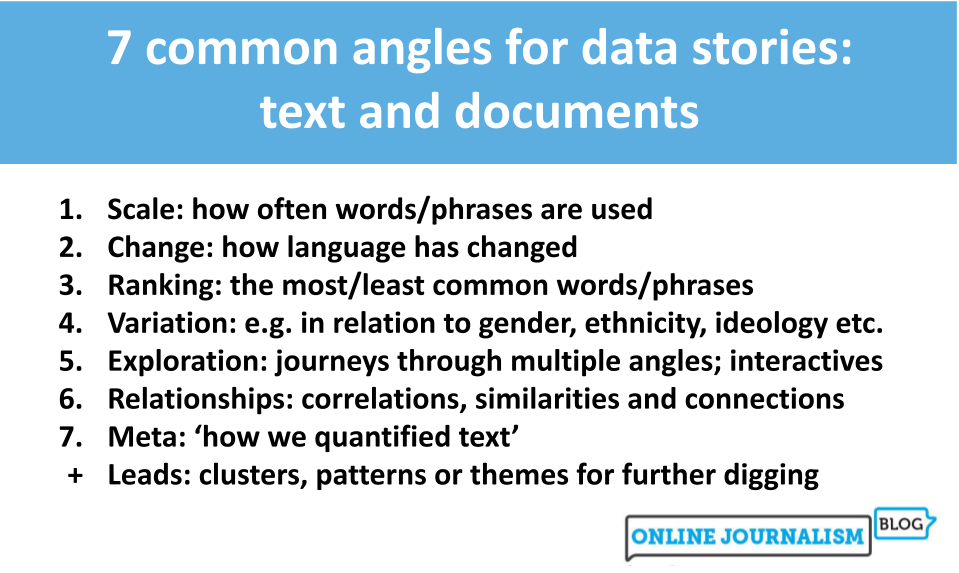

The Online Journalism Blog published a how-to explainer, "Words as data: how data journalists tell stories about documents and text," outlining techniques for treating documents and text collections as data. The article reports that exploratory formats dominate text-based data journalism, based on the sample the author reviewed, and highlights case studies including The Pudding, which classified text from over a million speeches (code shared in a repository), as well as projects from Quartz, Sueddeutsche Zeitung, and The Outlier. The piece surveys quantification approaches such as classification and thematic coding and notes that stories about language often focus on variation and change. The article is positioned as a practical guide for journalists and practitioners interested in turning documents into analyzable datasets.

What happened

The Online Journalism Blog published an explainer titled "Words as data: how data journalists tell stories about documents and text" that surveys methods and examples for treating documents and text as data. The article reports that exploratory feature formats are the most common structure in the sample the author reviewed. It cites The Pudding's project classifying text from over a million speeches, and references related work from Quartz, Sueddeutsche Zeitung, and The Outlier as illustrative examples.

Editorial analysis - technical context

The article emphasises common text-as-data techniques such as classification, thematic coding, and simple quantification (percentages of topic prevalence, speaker-level breakdowns). Industry-pattern observations: projects that convert documents into numeric features typically involve iterative labeling, model validation, and careful choices about aggregation and sampling to avoid misleading headlines.

Context and significance

Industry context: For data journalists and practitioners, text-based projects combine tasks from natural language processing and data storytelling, including transparency about labeling, reproducibility of code, and clear explanation of classifier limits. The explainer highlights that the richness of text often leads authors toward exploratory narratives rather than single-point revelations.

What to watch

Observers should watch whether more text-as-data projects publish code and annotation schemas, how outlets document classifier performance and bias, and whether interactive presentations that let readers probe text classifications become more common.

Scoring Rationale

Practical guide for practitioners doing text analysis, useful but not a research or tooling breakthrough. Relevant for data journalists and applied NLP teams.

Practice interview problems based on real data

1,500+ SQL & Python problems across 15 industry datasets — the exact type of data you work with.

Try 250 free problems