Claude Accelerates Learning Python for Developers

Anthropic's Claude transformed a seasoned writer's Python learning workflow by replacing the chaotic search-and-solve loop with on-demand, tutor-style guidance. The author found that Claude provides tailored examples, stepwise debugging help, and explanations at the right level of abstraction, which cut the time spent hopping between Stack Overflow threads and documentation. For practitioners, this is a reminder that large language models can serve as interactive learning tools for acquiring skills faster, prototyping ideas, and debugging code live. The piece recommends integrating Claude into study routines for targeted practice, iterative feedback, and exercises that are more engaging than passive tutorials.

What happened

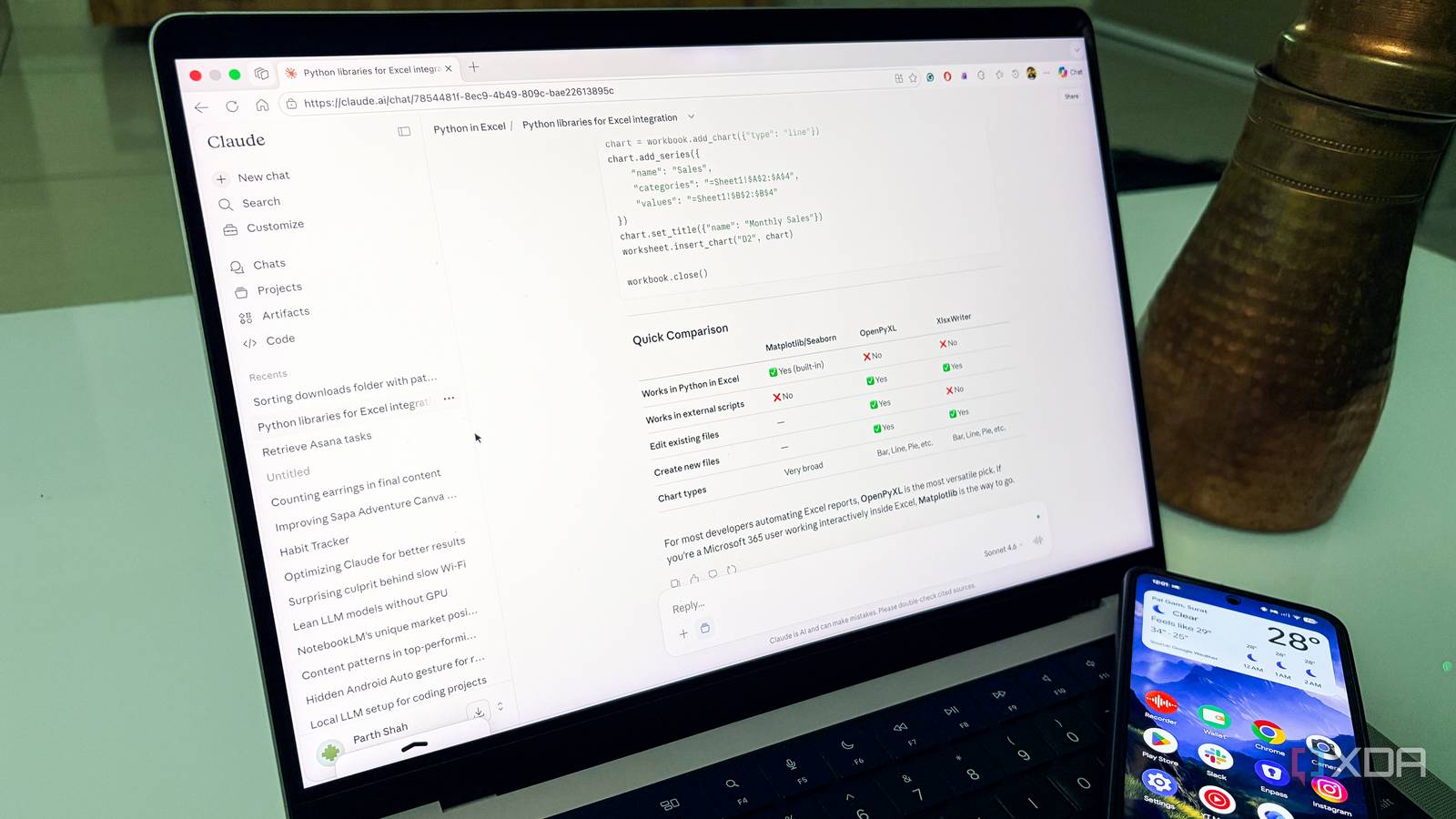

Anthropic's Claude became the de facto personal Python tutor for the author, Parth, replacing a chaotic workflow of multiple tabs, Stack Overflow threads, and static tutorials. The shift cut out repetitive searching, produced examples keyed to the author's interests, and made debugging and concept review faster and more focused.

Technical details

The practical benefits described are pedagogical and interactional rather than architectural. Claude functions as an interactive REPL-style helper that can:

- generate context-specific code snippets and minimal reproducible examples
- explain errors and propose stepwise fixes tuned to the learner's level
- create incremental exercises, analogies, and alternative implementations

These capabilities rely on the model's contextual understanding and few-shot prompting, not on new language features or custom training. The piece emphasizes iterative prompting and asking for progressively simpler or more detailed explanations as the core technique.
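The iterative prompting pattern described above can be sketched as a conversation history that grows with each request for a simpler or more detailed explanation. This is a minimal illustration, not the author's actual setup: the helper names `start_session` and `follow_up` are hypothetical, the role/content message shape is an assumed chat format, and the tutor replies are placeholders because no model is called.

```python
# Hypothetical helpers: the article does not specify any code or API.
# The role/content dictionaries are an assumed, commonly used chat format.

def start_session(topic, level):
    """Open a tutoring conversation stating the topic and the learner's level."""
    first = f"I am a {level} Python learner. Explain {topic} with one short example."
    return [{"role": "user", "content": first}]


def follow_up(history, reply, request):
    """Record the tutor's reply, then append the learner's next request.

    Returns a new list so earlier snapshots of the conversation stay unchanged.
    """
    return history + [
        {"role": "assistant", "content": reply},
        {"role": "user", "content": request},
    ]


history = start_session("list comprehensions", "beginner")
# Placeholder replies stand in for whatever the model would actually return.
history = follow_up(history, "<tutor reply>", "Simpler, please: compare it to a for loop.")
history = follow_up(history, "<tutor reply>", "Now more detail: add a filtering condition.")

for turn in history:
    print(f"{turn['role']}: {turn['content']}")
```

Keeping the full history in each request is what lets a follow-up such as "simpler, please" refer back to the earlier explanation instead of starting from scratch.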

Context and significance

This account highlights a growing use case for foundation models: adaptive, one-on-one tutoring for programming. For developers and educators, the takeaway is that LLMs can shorten the feedback loop between encountering a bug and understanding the concept behind it. This reduces cognitive overhead compared with searching fragmented resources and cobbling together answers from multiple threads. The report aligns with a broader trend in which models augment learning workflows, accelerate onboarding for new libraries, and serve as low-cost pair programmers for prototyping.

What to watch

Pay attention to how you prompt, how you verify code outputs, and how you combine Claude responses with static tests and linters. The benefits are real, but practitioners must still validate edge cases, runtime behavior, and performance implications before deploying code generated during tutoring sessions.
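One way to act on this advice is to treat any function produced in a tutoring session as untrusted until it passes checks the learner writes independently. The sketch below is illustrative only: `mean` stands in for a hypothetical model-suggested function, and `check_mean` is an assumed name for the learner's own edge-case tests.

```python
def mean(values):
    """Hypothetical example of a function a tutoring session might produce."""
    if not values:
        raise ValueError("mean() requires at least one value")
    return sum(values) / len(values)


def check_mean():
    """Edge-case checks written by the learner, not by the model."""
    assert mean([2, 4, 6]) == 4      # typical input
    assert mean([5]) == 5            # single element
    assert mean([-1, 1]) == 0        # values that cancel out
    try:
        mean([])                     # empty input must fail loudly
    except ValueError:
        pass
    else:
        raise AssertionError("empty input should raise ValueError")
    return True


check_mean()
```

Checks like these cover correctness on small inputs only; runtime behavior on large inputs and performance still need separate measurement, and a linter can be run over the same file as a further static check.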

Scoring rationale

The story signals a useful improvement to developer workflow and shows the practical pedagogical value of LLMs, but it is a single user's experience rather than a technical breakthrough. It is relevant to practitioners adopting LLMs for learning and prototyping, hence the mid-tier impact rating.
