Binghamton Robot Dog Uses GPT-4 to Guide Visually Impaired

Binghamton University researchers led by Shiqi Zhang built a robotic guide-dog prototype that uses GPT-4 voice interaction to assist indoor navigation for visually impaired users. The system combines conventional robotic sensing and route planning with an LLM-driven conversational layer that offers pre-trip route explanations and continuous "scene verbalization" during travel. In a preliminary study with seven legally blind participants in a multi-room office environment, users favored the hybrid approach (route planning plus live narration) and rated the system on helpfulness, usefulness, and ease of communication. The team published methodology and evaluation details in an arXiv preprint and used GPT-4 both as the runtime conversational engine and, in some automated evaluations, to simulate user interaction.

What happened

Binghamton University's Autonomous Intelligent Robotics work, led by Shiqi Zhang, produced a robotic guide-dog prototype that integrates a GPT-4 conversational layer with onboard perception and navigation to support indoor mobility for visually impaired users. The system is designed to go beyond simple point-to-point guidance: before a trip it suggests route options with estimated travel times, and during travel it narrates environmental context in real time, which the researchers call "scene verbalization." A small user study (seven legally blind participants) in a multi-room office environment evaluated subjective measures including helpfulness, usefulness, and ease of communication; participants preferred the combined route-planning explanation and live narration approach.

Technical context

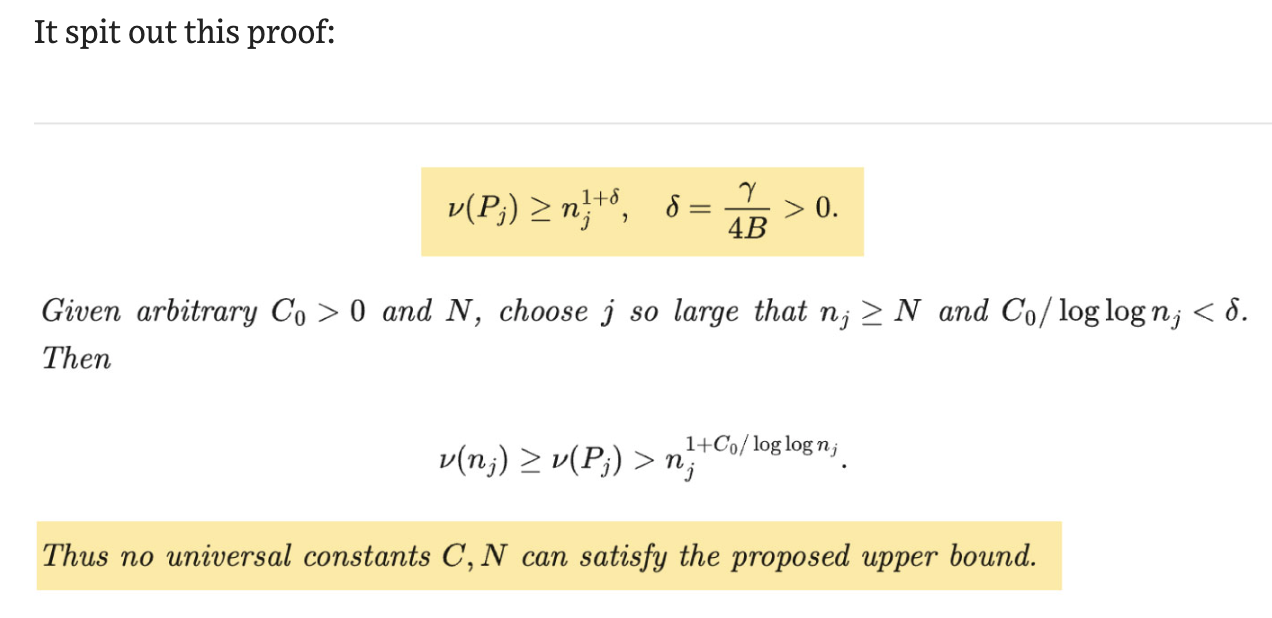

This project combines two technical stacks that are now converging in robotics: established robotic perception and mapping for safe navigation, and large language models (LLMs) for natural, flexible dialogue. The team uses GPT-4 as the conversational and decision-support layer to translate perception outputs into human-directed explanations and to accept voice commands. The arXiv paper ("Towards Intelligent Robotic Guide Dogs with Verbal Communication") documents system architecture, evaluation methodology, and experiments; it also reports using GPT-4 in simulation to model a visually impaired user during parts of the evaluation pipeline.

Key details from sources

- Lead researcher Shiqi Zhang and his team implemented pre-trip route explanation (multiple candidate routes, estimated times) and continuous scene verbalization that warns users of corridor length, obstacles, and landmarks while moving. (Digital Trends; Binghamton press release)

- The prototype was validated with seven legally blind participants navigating a large, multi-room office environment; participants completed questionnaires rating the system on helpfulness, usefulness, and communication ease and showed a clear preference for the hybrid explanatory+verbalization approach. (Digital Trends; Binghamton)

- The work is available as an arXiv preprint. The authors describe both live user tests and automated evaluations; they used GPT-4 to simulate a visually impaired user in some experiments to augment testing coverage. (arXiv; Newswise PDF)
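
To make the pre-trip route explanation concrete, here is a minimal Python sketch of how candidate routes might be turned into a spoken summary with estimated times. It is an illustration only, not the team's implementation: the `Route` fields, the `describe_routes` helper, and the assumed walking speed are all hypothetical and do not come from the paper.

```python
from dataclasses import dataclass

# Assumed guided walking speed, for illustration only (not a value from the paper).
ASSUMED_SPEED_M_PER_S = 0.8


@dataclass
class Route:
    name: str        # hypothetical label, e.g. "via the main corridor"
    length_m: float  # path length, as a route planner might report it
    turns: int       # number of turns along the path


def estimated_seconds(route, speed=ASSUMED_SPEED_M_PER_S):
    """Estimate travel time as path length divided by walking speed."""
    return route.length_m / speed


def describe_routes(routes):
    """Build a short spoken summary, fastest route first."""
    ordered = sorted(routes, key=estimated_seconds)
    sentences = []
    for i, r in enumerate(ordered, start=1):
        secs = round(estimated_seconds(r))
        plural = "" if r.turns == 1 else "s"
        sentences.append(
            f"Option {i}: {r.name}, about {secs} seconds, {r.turns} turn{plural}."
        )
    return " ".join(sentences)


candidates = [
    Route("via the lobby", 40.0, 3),
    Route("via the main corridor", 24.0, 1),
]
summary = describe_routes(candidates)
print(summary)
```

In a system like the one described, text of this kind could be handed to the conversational layer for rephrasing, while the numbers themselves come from the planner rather than from the language model.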

Why practitioners should care

This project is a concrete, recent example of using an LLM as the human-facing abstraction layer in a closed-loop robotic system rather than as a backend documentation or code-assistance tool. That raises several immediate practical topics for robotics and ML engineers: latency and availability (GPT-4 typically runs as a remote service), runtime safety and hallucination management (verbal outputs must not misrepresent obstacles or safe actions), evaluation methodology for HRI systems that combine subjective and automated measures, and the use of LLMs to generate synthetic user behavior for testing. The prototype illustrates how conversational LLMs can increase situational awareness for users and shift the interaction paradigm for assistive robots from reactive guidance to collaborative navigation planning.
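
The latency and availability concern can be illustrated with a small Python sketch that bounds how long the robot waits for a remote model before speaking a locally generated fallback. This is an assumption-laden illustration, not the researchers' design: `narrate_with_fallback` and the stub callables are hypothetical, and a real `llm_call` would wrap whatever client the system uses.

```python
import concurrent.futures
import time


def narrate_with_fallback(llm_call, prompt, fallback_text, timeout_s=1.0):
    """Return llm_call(prompt), or fallback_text if the call is slow or fails."""
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(llm_call, prompt)
    try:
        return future.result(timeout=timeout_s)
    except Exception:
        # Covers timeouts as well as network or API errors raised by llm_call.
        return fallback_text
    finally:
        # Do not block on a slow call; the worker thread finishes on its own.
        pool.shutdown(wait=False)


# Hypothetical stand-ins for a remote model call.
def fast_stub(prompt):
    return "Corridor ahead, about ten meters."


def slow_stub(prompt):
    time.sleep(0.3)  # simulates a slow network round trip
    return "late reply"


def broken_stub(prompt):
    raise RuntimeError("service unavailable")


FALLBACK = "Continuing straight."
print(narrate_with_fallback(fast_stub, "describe scene", FALLBACK))
print(narrate_with_fallback(slow_stub, "describe scene", FALLBACK, timeout_s=0.05))
print(narrate_with_fallback(broken_stub, "describe scene", FALLBACK))
```

The design choice here is that narration degrades to a simpler message instead of going silent; safety-critical stopping and obstacle avoidance would presumably stay in the onboard navigation stack regardless of whether the language model responds.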

Limitations and caveats

The user study is small and was conducted in a controlled indoor environment; results do not yet speak to long-term reliability, outdoor navigation, or performance under network loss. Reliance on GPT-4 introduces operational dependencies (cost, latency, model behavior updates) and safety risks from hallucinated or imprecise language. The team's use of GPT-4 to simulate users in evaluation is a practical expedient, but it should be corroborated with broader human-subject testing before any deployment-grade validation.

What to watch

- Larger, real-world trials with diverse mobility contexts (outdoor, crowded spaces) and longer-term deployment.

- Strategies to reduce LLM dependence (on-device models, constrained response templates, verification layers) to improve safety and offline resilience.

- Standardized evaluation protocols for LLM-enabled assistive robots that measure both navigational safety and communication quality.

- Regulatory and accessibility pathways for assistive robotics that combine AI-driven language with physical guidance.
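
As a rough illustration of the "constrained response templates" and "verification layers" mentioned in the list above, the Python sketch below accepts free-form model text only when it is consistent with structured perception output, and otherwise falls back to a fixed template. Everything here is hypothetical (the `Detection` type, both function names, and the keyword check), and a simple substring match is far weaker than what a deployed system would need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str         # hypothetical perception output, e.g. "chair"
    distance_m: float  # distance to the object in meters
    bearing: str       # "left", "right", or "ahead"


def template_narration(detections):
    """Deterministic narration built only from perception data."""
    if not detections:
        return "Path ahead is clear."
    nearest_first = sorted(detections, key=lambda d: d.distance_m)
    parts = [f"{d.label} {d.distance_m:.1f} meters {d.bearing}" for d in nearest_first]
    return "Caution: " + "; ".join(parts) + "."


def verified_narration(llm_text, detections):
    """Use llm_text only if it names every detection and does not claim
    the path is clear while obstacles are present; otherwise use the template."""
    text = llm_text.lower()
    mentions_all = all(d.label.lower() in text for d in detections)
    false_clear = bool(detections) and "clear" in text
    if mentions_all and not false_clear:
        return llm_text
    return template_narration(detections)


scene = [Detection("chair", 2.0, "left"), Detection("door", 5.0, "ahead")]
good = "There is a chair on your left and a door straight ahead."
print(verified_narration(good, scene))                     # passes the check
print(verified_narration("The hallway is clear.", scene))  # replaced by template
```

Note that this check only catches omissions and one kind of false reassurance; it would not catch a model inventing an obstacle that perception never reported, which is one reason a template-only mode may be the safer offline default.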

Scoring Rationale

The work demonstrates a meaningful research direction, integrating LLMs with robotic perception for assistive navigation, but it remains an early, small-scale prototype. Practitioners should track both its technical approach and its safety implications.
