Atlassian launches DX features for AI-native engineering

Per an Atlassian blog post, the DX team released four new features aimed at helping engineering organisations adopt AI-native workflows. The launches include an AI chat interface for querying internal data, AI Code Insights (which surfaces AI-generated code attribution and a set of reports on AI usage), proactive SLA-based alerts, and an Agent Experience metric that gives an organisation-level agent effectiveness score, filterable by team. The AI Code Overview report buckets SDLC metrics by percentage of AI in each pull request. Atlassian frames these additions as tools to measure where AI helps and where it introduces gaps across the software delivery lifecycle.

What happened

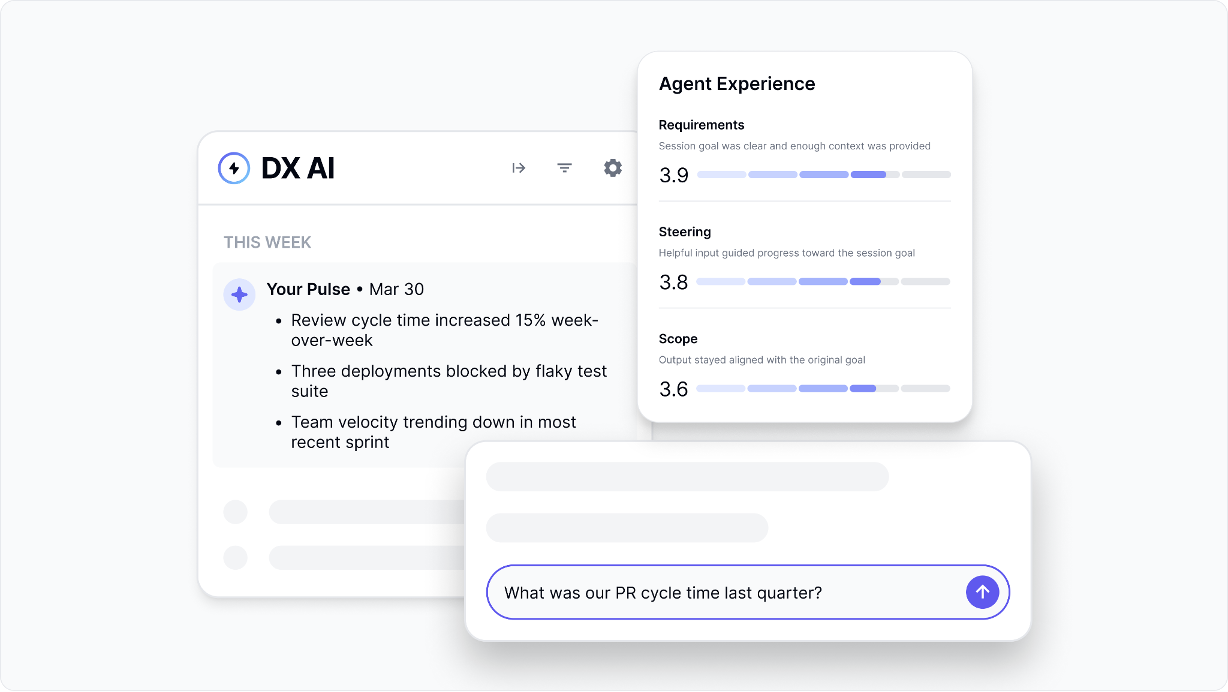

According to a post by the DX team on the Atlassian blog, the company released four major features intended to improve visibility and operational control as engineering teams adopt AI assistants. The announced features are an AI chat interface for interacting with organisation data, AI Code Insights (a set of reports exposing AI-generated code and usage patterns), proactive SLA-based alerts, and an Agent Experience metric that scores agent effectiveness across teams.

Technical details

Per the blog post, AI Code Insights comprises three main reports: the AI Code Overview report, which buckets SDLC metrics by the percentage of AI in each PR; AI-generated code attribution, which shows which commits and PRs contain AI-generated code; and Agent Experience insights, which surface agent-session signals such as missing context or ambiguous instructions. The Agent Experience capability provides an overall agent effectiveness score that can be filtered by team. The release also includes proactive alerts tied to SLAs and a conversational interface for querying internal data, according to Atlassian.
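To make the bucketing idea concrete, here is a minimal sketch of grouping pull requests by their share of AI-generated code and averaging one SDLC metric per bucket. It is not Atlassian's implementation: the bucket boundaries, the choice of cycle time as the metric, the function names, and the sample records are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical PR records: (AI share as a fraction 0..1, cycle time in hours).
# Illustrative values only, not data from Atlassian or DX.
prs = [
    (0.00, 30.0),
    (0.10, 26.0),
    (0.35, 20.0),
    (0.60, 22.0),
    (0.90, 40.0),
    (1.00, 44.0),
]

# Assumed bucket edges; the source does not state the real report's boundaries.
BUCKETS = [
    (0.00, 0.25, "0-25%"),
    (0.25, 0.50, "25-50%"),
    (0.50, 0.75, "50-75%"),
    (0.75, 1.00, "75-100%"),
]


def bucket_label(ai_share):
    """Return the label of the half-open bucket [low, high) containing ai_share."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    for low, high, label in BUCKETS:
        if low <= ai_share < high:
            return label
    return BUCKETS[-1][2]  # ai_share == 1.0 belongs to the top bucket


def mean_cycle_time_by_bucket(records):
    """Average cycle time per AI-share bucket, in bucket order."""
    grouped = defaultdict(list)
    for ai_share, hours in records:
        grouped[bucket_label(ai_share)].append(hours)
    return {label: mean(grouped[label]) for _, _, label in BUCKETS if label in grouped}


summary = mean_cycle_time_by_bucket(prs)
for label, avg in summary.items():
    print(f"{label}: {avg:.1f} h")
```

Comparing averages across buckets like this shows whether a metric shifts as the AI share rises, though small or uneven bucket sizes can make such comparisons misleading.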

Industry context

Engineering organisations attempting to measure AI tool impact commonly lack direct telemetry linking AI outputs to delivery outcomes. Observable signals such as commit-level attribution, token spend, and agent-session diagnostics are emerging patterns vendors use to close that gap. Practitioners evaluating similar tooling typically focus on integration with CI/CD, signal fidelity, and the noise-to-action ratio for alerts.

What to watch

Signals worth tracking include adoption and usage metrics (such as AI-generated-code percentage by team), correlations between AI-generated code and code quality or review cycles, the stability of agent effectiveness scores over time, and how SLA-based alerts integrate into existing incident and SRE workflows.
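As one way to examine the correlation between AI-generated code and review cycles, the sketch below computes a Pearson correlation coefficient over per-PR data. The field names and sample values are hypothetical, and the choice of Pearson's r is an assumption; the source does not say how such a relationship should be measured.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least two points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return covariance / sqrt(var_x * var_y)


# Hypothetical per-PR data: AI share (fraction) and number of review rounds.
ai_share = [0.0, 0.2, 0.4, 0.6, 0.8]
review_rounds = [1, 1, 2, 2, 3]

r = pearson(ai_share, review_rounds)
print(f"Pearson r = {r:.2f}")
```

A coefficient near 1 or -1 indicates a strong linear association in the sample, but it does not establish that AI-generated code causes longer or shorter review cycles; team, PR size, and codebase area are likely confounders.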

Scoring Rationale

Notable product launch for engineering teams that integrates AI usage telemetry into SDLC tooling, offering practical observability; valuable to practitioners measuring AI impact but not a frontier-model or infrastructure breakthrough.
