Artemis II Photographs Demonstrate Human Storytelling Advantage

The four-person Artemis II crew captured images and direct observations from the lunar flyby that emphasize human visual judgment and narrative framing. Trained astronaut photography produced images that convey context, color nuance, and positional perspective in ways experts say current cameras and autonomous systems do not. The mission photos sparked widespread social sharing, and with it came a flood of AI-generated and edited fakes. Fact-checkers and forensic tools, including SynthID, flagged many manipulated images, while NASA published an official gallery of authentic mission photos. For practitioners, the episode highlights persistent gaps in automated imaging, the growing role of provenance and detection tools, and the need for better metadata and human-in-the-loop verification pipelines.

What happened

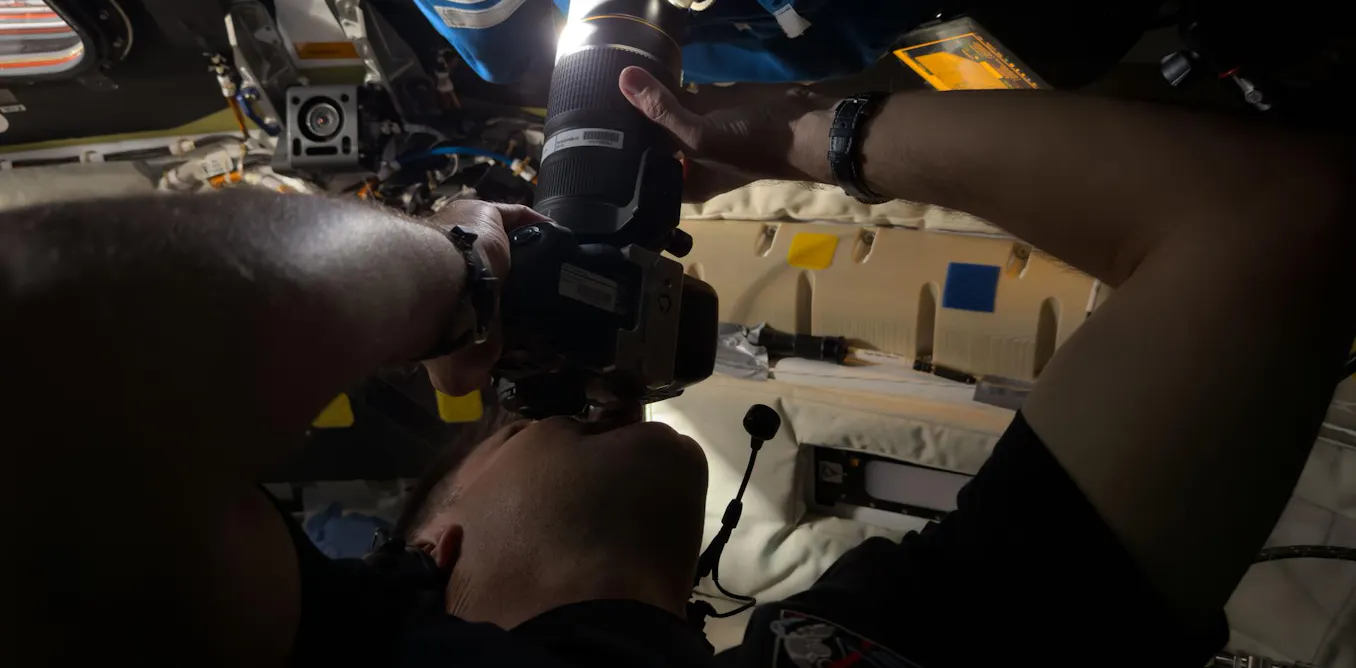

The four-person Artemis II crew, aboard the Orion spacecraft, produced a set of photographs and real-time human observations during their April 2026 lunar flyby that combined raw imagery with storytelling context. Crew members including Reid Wiseman and Jeremy Hansen captured views of the far side of the Moon and Earth through spacecraft windows and camera shrouds, yielding images and verbal descriptions that experts call uniquely informative and expressive. NASA released an official image gallery while social platforms were simultaneously flooded with visually convincing AI-generated fakes that fact-checkers and forensic services debunked.

Technical details

The photographic value here is not just resolution. Practitioners should note the specific human contributions that matter:

- Perceptual judgment: trained astronauts made on-the-fly framing and timing choices that prioritize narrative moments over automated telemetry-driven capture

- Color and nuance: human observers reported subtle chromatic variations in regions like the Orientale basin that raw camera captures and automated pipelines may compress or miss

- Contextual description: crew verbal observations added geologic, directional and temporal context that pixel data alone cannot provide

Experts compared human vision to spacecraft imaging systems and emphasized how the human visual system integrates attention, memory, and domain knowledge. Fact-checkers at Snopes, AFP, and PolitiFact cataloged multiple cases of AI-generated or edited images falsely attributed to Artemis II. Detection tools, including SynthID, identified telltale signs and invisible watermarks in some fakes, and NASA made verified originals available in an official repository to simplify provenance checks.
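As a rough illustration of what a provenance check against verified originals could look like, the sketch below compares a candidate file's SHA-256 digest with a manifest built from known-good images. The manifest, the asset IDs, and the function names are hypothetical; nothing here reflects how NASA's repository is actually organized or whether it publishes hashes.

```python
import hashlib
from typing import Dict, Optional


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of raw file bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(originals: Dict[str, bytes]) -> Dict[str, str]:
    """Map digest -> asset ID for a set of verified originals (hypothetical IDs)."""
    return {sha256_hex(blob): asset_id for asset_id, blob in originals.items()}


def check_provenance(candidate: bytes, manifest: Dict[str, str]) -> Optional[str]:
    """Return the matching asset ID, or None if the bytes are not a known original."""
    return manifest.get(sha256_hex(candidate))


# Placeholder bytes stand in for real image files.
manifest = build_manifest({"example-asset-001": b"original image bytes"})
match = check_provenance(b"original image bytes", manifest)
miss = check_provenance(b"edited image bytes", manifest)
```

An exact-hash match only confirms byte-identical copies. A re-encoded, resized, or screenshotted version of an authentic photo would fail this check, which is one reason hash lookups tend to be paired with watermark detection and human review.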

Context and significance

This is a case study of two converging trends. First, domain experts and trained humans still add high-value signals to observational science by selecting what to capture and how to describe it. Second, the ubiquity and improving realism of AI image generation have raised the operational cost of managing visual provenance in high-profile missions. The Artemis II episode echoes past milestones such as Apollo 17's "Blue Marble" in cultural impact, but it unfolds in an environment where adversarial and mistaken imagery spreads instantly.

For data scientists and ML engineers, the technical takeaways are clear: improving automated imaging is not just about pixels and model capacity; it is also about metadata, provenance, and human context. Practical levers include stronger capture-side metadata, embedding authenticated sensor signatures, and integrating human annotation and voice transcripts with imagery so downstream models and consumers can disambiguate source and intent. The use of SynthID and similar detection systems demonstrates both the utility and the limits of detection: it is probabilistic and must be combined with organizational provenance policies.
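One way to picture capture-side metadata bound to an image is a signed record that ties together the image hash, capture metadata, and a voice transcript. The sketch below uses an HMAC from the Python standard library as a stand-in; a real sensor-level scheme would more likely use asymmetric signatures and hardware-held keys. The field names, the key, and the sample values are all illustrative assumptions.

```python
import hashlib
import hmac
import json
from typing import Any, Dict


def _canonical(record: Dict[str, Any]) -> bytes:
    """Serialize deterministically so signer and verifier hash the same bytes."""
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_capture(image: bytes, metadata: Dict[str, Any], transcript: str,
                 key: bytes) -> Dict[str, Any]:
    """Build a record binding image hash, metadata, and transcript, then sign it."""
    record = {
        "image_sha256": hashlib.sha256(image).hexdigest(),
        "metadata": metadata,      # hypothetical capture fields
        "transcript": transcript,  # human observation attached to the frame
    }
    record["signature"] = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return record


def verify_capture(image: bytes, record: Dict[str, Any], key: bytes) -> bool:
    """Check that the record is untampered and that it refers to this image."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(key, _canonical(unsigned), hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, str(record.get("signature", "")))
    image_ok = unsigned.get("image_sha256") == hashlib.sha256(image).hexdigest()
    return signature_ok and image_ok


key = b"demo-key-not-for-production"  # assumption: a shared secret, for the sketch only
rec = sign_capture(b"raw frame", {"camera": "handheld", "frame": 12},
                   "Subtle color variation near the basin rim.", key)
ok = verify_capture(b"raw frame", rec, key)
```

Because the transcript sits inside the signed payload, editing either the image or the accompanying description invalidates the record, which keeps the human context attached to the pixels it describes.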

What to watch

Expect increased emphasis on capture provenance standards, broader adoption of sensor-level authentication, and hybrid pipelines that keep humans in the loop for high-value observational tasks. Also watch for policy and product responses from platforms that host images, and improvements in forensic tooling to scale verification in near real time.
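A hybrid pipeline of this kind can be sketched as a simple triage rule: trust verified provenance first, flag high-confidence detector hits, and route everything else to a human reviewer. The `detector_score` input is a hypothetical probability-like value in [0, 1], not the output format of SynthID or any specific tool, and the 0.9 threshold is an arbitrary placeholder.

```python
from enum import Enum


class Verdict(Enum):
    VERIFIED = "verified"
    LIKELY_SYNTHETIC = "likely_synthetic"
    HUMAN_REVIEW = "human_review"


def triage(provenance_verified: bool, detector_score: float,
           flag_threshold: float = 0.9) -> Verdict:
    """Combine a provenance check with a probabilistic detector score."""
    if not 0.0 <= detector_score <= 1.0:
        raise ValueError("detector_score must be in [0, 1]")
    if provenance_verified:
        # A verified provenance match outranks any detector output.
        return Verdict.VERIFIED
    if detector_score >= flag_threshold:
        return Verdict.LIKELY_SYNTHETIC
    # Unverified and ambiguous: the detector alone cannot decide.
    return Verdict.HUMAN_REVIEW


decisions = [
    triage(True, 0.95),
    triage(False, 0.95),
    triage(False, 0.40),
]
```

The design choice here is that a low detector score never clears an image by itself; absence of a detected watermark is treated as "unknown", not "authentic".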

Scoring Rationale

This story matters because it highlights real operational gaps where AI-generated imagery and misinformation intersect with high-value observational data. It is not a frontier model or a market-moving event, but it is a practical, timely signal for engineers building provenance, detection, and human-in-the-loop systems.
