Anthropic's Claude Code Leak Reveals Engineering Tradeoffs

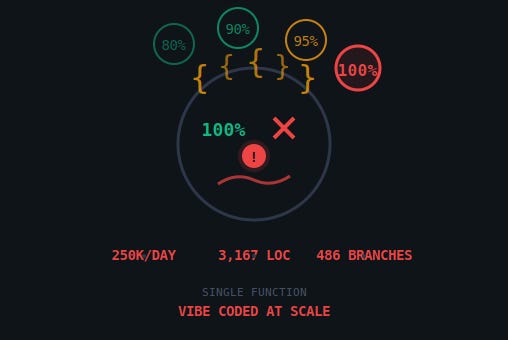

Anthropic unintentionally published the full TypeScript source of Claude Code, exposing 512,000 lines, 1,906 files, and implementation details that undercut public claims about AI-written code. The leak shows a vast engineering runtime around the LLM: only 1.6% of lines directly call the model, while the rest implement query routing, caching, permission controls, multi-agent orchestration, and UI/state glue. Reviewers found a single function with 3,167 lines, regex-based sentiment checks, and a documented bug that burned roughly 250,000 API calls daily. The codebase also revealed 44 internal feature flags, including KAIROS and ULTRAPLAN, plus cost-control mechanisms that make cache management critical. For practitioners this is both a cautionary tale about operational complexity and a public blueprint for production agent architectures.

What happened

The source of Anthropic's flagship coding assistant, Claude Code, leaked when a source map was accidentally published in an npm package, exposing roughly 512,000 lines of TypeScript across 1,906 files. The dump included an enormous print.ts file with a single function spanning 3,167 lines, regex-based sentiment detection, comments documenting a bug that burned about 250,000 API calls per day, and 44 compile-time feature flags such as KAIROS, ULTRAPLAN, and COORDINATOR_MODE.

Technical details

The leaked code shows that the LLM is the core compute primitive, but not the product. Only 1.6% of the repository directly calls the model API; the other 98.4% is engineering scaffolding that addresses runtime reliability, cost, and UX consistency. Key architectural elements visible in the leak include:

- a query engine and tool system that mediate model calls
- a cache layer with a hit/miss cost factor of roughly 10x, making cache design critical
- permission and security controls that gate tool execution
- a multi-agent orchestration and coordination protocol
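To see why a roughly 10x hit/miss cost factor makes cache design critical, a back-of-the-envelope model helps. The sketch below is not taken from the leaked code: the function name `relativeCost`, the reading of "10x" as "a miss costs ten times a hit", and the example hit rates are all assumptions made for illustration.

```typescript
// Hypothetical cost model, not from the leaked source.
// Assumption: a cache miss costs `missCostFactor` times as much as a cache hit.
function relativeCost(hitRate: number, missCostFactor: number = 10): number {
  if (hitRate < 0 || hitRate > 1) {
    throw new RangeError("hitRate must be between 0 and 1");
  }
  // Expected cost per request, measured in units of one cache hit.
  return hitRate * 1 + (1 - hitRate) * missCostFactor;
}

// Illustrative hit rates only; the article does not report real ones.
const healthy = relativeCost(0.9);  // about 1.9 units per request
const degraded = relativeCost(0.5); // 5.5 units per request
console.log((degraded / healthy).toFixed(1)); // ratio between the two scenarios
```

Under these assumptions, a drop in hit rate from 90% to 50% nearly triples the expected cost per request, which is why a change that quietly invalidates cached context can dominate the bill.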

The code includes pragmatic engineering patterns rarely visible in papers: three-layer memory management, dual parser strategies for recovery, anti-distillation guards, explicit backoff/retry and failure-repair loops, and heavy client-side state synchronization. Implementation choices are sometimes crude: print.ts exhibits deep nesting and monolithic functions, sentiment detection uses simple regexes matching tokens like "wtf" or "shit", and an Axios-based HTTP layer appears in multiple places. The packaging failure traces to the Bun build tool generating source maps by default and a missing entry in .npmignore, which allowed source maps to be published.
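A minimal sketch shows why token-matching sentiment checks are considered brittle. This is not the leaked implementation: the pattern, the name `looksFrustrated`, and the sample messages are hypothetical, and only the tokens "wtf" and "shit" come from the reporting above.

```typescript
// Hypothetical reconstruction for illustration; the real pattern is not quoted in the article.
// No `g` flag, so RegExp.prototype.test keeps no state between calls.
const FRUSTRATION_PATTERN = /\b(wtf|shit)\b/i;

function looksFrustrated(message: string): boolean {
  return FRUSTRATION_PATTERN.test(message);
}

// A matching token is flagged regardless of intent.
console.log(looksFrustrated("wtf, the build broke again"));        // true
console.log(looksFrustrated("No shit, it actually works now!"));   // true, although the user is pleased
// Frustration without a listed token goes undetected.
console.log(looksFrustrated("Third time you deleted my tests."));  // false
```

The second and third calls are the failure modes: a false positive on a happy user and a false negative on an annoyed one. A keyword regex is cheap and runs without a model call, which may be why such a check gets shipped, but it measures vocabulary rather than sentiment.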

Context and significance

The leak reframes the industry conversation about AI-written code. Public statements from Anthropic engineers and executives, including Boris Cherny's claim that "100% of my contributions to Claude Code were written by Claude Code," promised a future where models produce most product code. The code shows how much of the end product is human-engineered infrastructure rather than model output. That engineering is itself a strategic moat: production-grade agent systems require orchestration, cost-control, and safety plumbing that will be hard for competitors to replicate quickly.

At the same time, the leak is an engineering indictment: documented technical debt shipped to customers, brittle heuristics for user intent and sentiment, and a wasteful API-burn bug all point to fast iteration that prioritized product features over maintainability. For teams building client-side LLM applications, the leak is a rare, detailed case study of the trade-offs between rapid feature velocity and long-term operational complexity.

What to watch

Practitioners should audit caching and retry semantics, rethink brittle heuristics like regex sentiment detectors, and treat source-map/packaging hygiene as a first-class security control. Competitors and open-source projects will absorb the architecture blueprint quickly; expect forks and reimplementations that distill the engineering patterns without Anthropic's specific technical debt.
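One way to treat packaging hygiene as a control rather than a convention is to fail the release when a source map appears in the list of files about to be published. The sketch below assumes that list has already been obtained, for example from the `files` entries that `npm pack --dry-run --json` reports; the function names `findSourceMaps` and `assertNoSourceMaps` and the sample paths are hypothetical.

```typescript
// Hypothetical pre-publish guard; names and sample paths are illustrative only.
// Input: the relative paths that would end up in the published package.
function findSourceMaps(paths: readonly string[]): string[] {
  // Source maps conventionally end in ".map" (for example "cli.js.map").
  return paths.filter((p) => p.toLowerCase().endsWith(".map"));
}

function assertNoSourceMaps(paths: readonly string[]): void {
  const leaked = findSourceMaps(paths);
  if (leaked.length > 0) {
    // Throwing lets a CI step fail before anything is uploaded.
    throw new Error(`Refusing to publish source maps: ${leaked.join(", ")}`);
  }
}

const wouldPublish = ["package.json", "dist/cli.js", "dist/cli.js.map"];
console.log(findSourceMaps(wouldPublish)); // ["dist/cli.js.map"]
```

A check like this complements an ignore file instead of replacing it. An allowlist via the `files` field in package.json is another common safeguard, since anything not listed is excluded by default.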

Scoring Rationale

The leak gives practitioners a rare, concrete blueprint for building production agent systems, which matters for engineering strategy and competition. It is notable but not a frontier-model breakthrough, and the story is several days old, reducing immediate impact.
