Anthropic Secures Massive Google TPU Computing Capacity

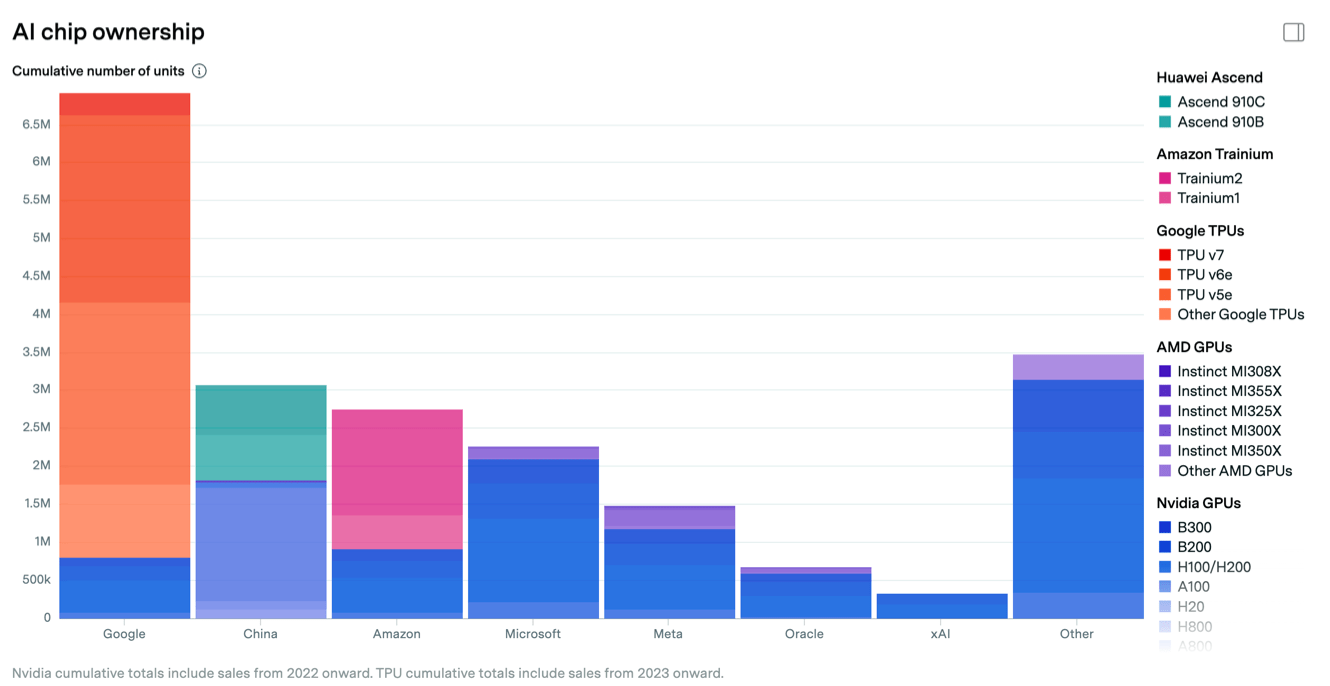

Anthropic has expanded its strategic compute partnership with Google Cloud, with Broadcom also involved, securing access to Google Cloud TPUs at a scale reported in the gigawatt range. Anthropic’s announcement and public reporting cite up to one million TPU chips and aggregate power described as 1 GW+; some secondary accounts give figures as high as ~3.5 GW. The deal eases Anthropic’s immediate training and inference bottlenecks for its Claude family and validates Google’s TPUs as a commercial cloud offering rather than only an internal asset. For practitioners, the agreement resets expectations about large-model infrastructure supply, vendor bargaining power, and how proprietary accelerators factor into future model scale and economics.

What happened

Anthropic expanded its infrastructure partnership with Google Cloud (with Broadcom involvement reported) to power next-generation Claude training and inference. Public statements and multiple industry reports quantify the commitment in the gigawatt range and reference up to one million TPUs or similar large-capacity figures, although the exact figures and contract terms vary across sources.

Technical context

The deal centers on Google’s proprietary TPU accelerator fleet, provisioned at hyperscaler scale through Google Cloud. TPUs are ASICs optimized for the dense, large-batch matrix math that dominates transformer training, and they offer a different cost-performance profile than general-purpose GPUs. At gigawatt scale, the agreement is less a VM purchase than an industrial commitment covering power provisioning, interconnect capacity, physical racks and pods, and close co-engineering between the cloud provider, the silicon supply chain (Broadcom components are reported), and the model developer.
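To get a feel for what a gigawatt-scale reservation means per accelerator, the reported headline figures can be turned into a rough power budget. The sketch below is back-of-the-envelope arithmetic only: it assumes the 1 GW and one-million-chip figures describe the same deployment, which the sources do not confirm, and the `pue` (power usage effectiveness) value of 1.25 is a hypothetical placeholder, not a reported number.

```python
def watts_per_chip(total_gigawatts: float, chips: int, pue: float = 1.0) -> float:
    """Average power budget per accelerator, in watts.

    pue is a hypothetical power usage effectiveness factor; 1.0 treats
    the whole reservation as IT load with no cooling or facility overhead.
    """
    if chips <= 0 or pue < 1.0:
        raise ValueError("chips must be positive and pue must be >= 1.0")
    total_watts = total_gigawatts * 1e9
    return total_watts / pue / chips


# Headline figures from the reporting; pairing them is an assumption.
all_in = watts_per_chip(1.0, 1_000_000)
it_only = watts_per_chip(1.0, 1_000_000, pue=1.25)  # assumed PUE
print(f"{all_in:.0f} W per chip, all-in")
print(f"{it_only:.0f} W per chip, IT load only (assumed PUE 1.25)")
```

The resulting budget is shared with host CPUs, networking, and storage, so it should not be read as the power draw of any particular TPU generation.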

Key details from sources

- Anthropic’s own announcement framed the change as an expansion of TPU use and cloud services with Google and Broadcom (Anthropic).

- Independent reporting frames the commitment as “over a gigawatt” or “1 GW+” of capacity, with several outlets citing up to one million TPUs and others reporting aggregate capacity as high as ~3.5 GW (marketwatch, cloudwars, telecomreviewamericas, firstonline).

- Coverage emphasizes that Anthropic will use the capacity to train and operate larger Claude models, and that the arrangement validates Google’s TPU business as a competitive asset beyond its internal use (aibusiness, marketwatch, medium.datadriveninvestor).

Why practitioners should care

This is a substantive supply-side shift for large-model development. Access to TPU capacity at gigawatt scale directly affects the economics and feasibility of training next-generation, very large transformer models: it reduces queueing risk, shortens iteration cycles, and can materially change cost-per-token and time-to-train for Anthropic. For ML engineers and infra architects, it signals that proprietary ASIC fleets (TPUs) are becoming available to third-party model vendors at scale, a market dynamic that changes procurement, benchmarking, and architecture choices. For platform and ops teams, the deal highlights the operational surface area of hyperscale TPU deployments: power contracts, interconnect planning, and software stack compatibility (TPU runtimes, XLA, TPU Pod orchestration).
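The link between accelerator count and time-to-train can be made concrete with a common rule of thumb that is not from the article: training a dense transformer takes roughly 6 × parameters × tokens floating-point operations. The sketch below applies that approximation. Every input (model size, token count, chip count, peak throughput, and utilization, here called `mfu`) is a hypothetical placeholder, not a figure for Claude or for any TPU generation.

```python
def training_days(
    params: float,
    tokens: float,
    chips: int,
    peak_flops_per_chip: float,
    mfu: float,
) -> float:
    """Rough wall-clock training time in days.

    Uses the ~6 * params * tokens FLOPs approximation for dense
    transformers. mfu is the assumed fraction of peak throughput
    actually sustained (0 < mfu <= 1).
    """
    if chips <= 0 or not 0.0 < mfu <= 1.0:
        raise ValueError("chips must be positive and mfu must be in (0, 1]")
    total_flops = 6.0 * params * tokens
    sustained_flops_per_second = chips * peak_flops_per_chip * mfu
    seconds = total_flops / sustained_flops_per_second
    return seconds / 86_400  # seconds per day


# Hypothetical run: 1e12 parameters, 1e13 tokens, 1e15 peak FLOP/s per chip.
baseline = training_days(1e12, 1e13, 100_000, 1e15, 0.4)
doubled = training_days(1e12, 1e13, 200_000, 1e15, 0.4)
print(f"{baseline:.1f} days on 100k chips, {doubled:.1f} days on 200k chips")
```

The linear speedup here is idealized. In practice, utilization tends to fall as a job spans more chips, which is one reason interconnect planning appears alongside raw capacity in the operational concerns above.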

Strategic implications

- For Google: validation of TPU-as-a-service and an enterprise reference customer that helps monetize a differentiated silicon stack versus GPU-centric clouds.

- For Anthropic: the immediate compute relief enables faster model scaling and product cadence, but increases dependency on Google Cloud and its TPU roadmap.

- For the ecosystem: other model developers and cloud players must reassess capacity planning and negotiation posture; large, exclusive or semi-exclusive capacity commitments can compress available public GPU/accelerator capacity and affect pricing.

What to watch next

- Contract duration and any exclusivity clauses, once confirmed.

- Published throughput or Pod-level metrics, and which TPU generation is being deployed.

- Broadcom’s concrete hardware role (interconnects/switch silicon).

- Whether Google offers similar large allocations to competitors or keeps preferential terms.

- Whether regulators or large enterprise customers raise concerns about vertical integration and market power.

Scoring Rationale

Large-scale compute commitments directly affect model training feasibility, cloud competition, and infrastructure economics, all core concerns for ML practitioners. The story is fresh, so the score carries only a small freshness penalty.
