Zalando Migrates Fraud ML Pipeline to SageMaker

Per a Zalando engineering post published Feb 16, 2021, the team reworked a legacy fraud-risk ML system and adopted Amazon SageMaker for real-time inference. The post lists key pain points in the prior Scala and Spark monolith, including high memory usage, latency spikes, slow instance startup, and tight coupling between feature preprocessing and training. The engineering post states a latency requirement that 99.9% of responses be returned "in the order of milliseconds" and that the busiest model must handle hundreds of requests per second. Third-party coverage from ZenML highlights use of the SageMaker inference pipeline and a dual-container approach that chains containers to handle request processing.

What happened

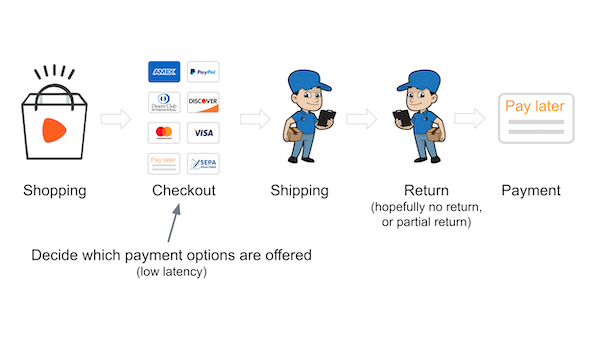

Per the Zalando engineering post dated Feb 16, 2021, the team reworked a legacy fraud-detection ML pipeline and migrated parts of the serving stack to Amazon SageMaker. The post documents prior architecture choices going back to a 2015 migration from Python and scikit-learn to Scala and Spark. The authors list operational problems with the existing system, including heavy memory use, latency spikes, slow instance startup, and a monolithic design that couples feature preprocessing and model training.

Technical details

Per the engineering post, the redesigned system keeps the existing JSON API while setting strict operational targets: 99.9% of responses should be returned "in the order of milliseconds", and the busiest model should handle hundreds of requests per second. External coverage from ZenML reports that the team used SageMaker inference pipelines to chain multiple Docker containers, with a dual-container arrangement that separates request preprocessing from model scoring at inference time.
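To make the dual-container idea concrete, the following is a minimal local sketch in plain Python, not Zalando's implementation and not SageMaker SDK code. It mimics how a chained inference pipeline behaves: each stage receives the previous stage's response body as its request body. The field names (`basket_value`, `items`, `new_customer`), the weights, and the function names are all hypothetical, since neither source describes the actual payloads or models.

```python
import json


def preprocess(request_body: str) -> str:
    """Stage 1 (hypothetical): turn the public JSON request into features."""
    order = json.loads(request_body)
    features = [
        float(order["basket_value"]),           # assumed field name
        float(len(order["items"])),             # assumed field name
        1.0 if order["new_customer"] else 0.0,  # assumed field name
    ]
    return json.dumps({"features": features})


def score(features_body: str) -> str:
    """Stage 2 (hypothetical): a stand-in linear scorer, not a real fraud model."""
    features = json.loads(features_body)["features"]
    weights = [0.001, 0.05, 0.3]  # made-up weights for illustration only
    raw = sum(w * x for w, x in zip(weights, features))
    return json.dumps({"fraud_score": round(min(raw, 1.0), 4)})


def invoke_pipeline(request_body: str, stages=(preprocess, score)) -> str:
    """Pass the body through each stage in order, as a container chain would."""
    body = request_body
    for stage in stages:
        body = stage(body)
    return body


request = json.dumps(
    {"basket_value": 200, "items": ["a", "b"], "new_customer": True}
)
response = json.loads(invoke_pipeline(request))
print(response)
```

Because the caller only sees the JSON going into the first stage and the JSON coming out of the last one, the preprocessing stage can be replaced or versioned without changing the public API, which is the separation the coverage describes.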

Editorial analysis - technical context

Companies decomposing monolithic Spark stacks often move preprocessing off the training cluster and adopt managed inference services to reduce operational burden and mitigate latency spikes. Managed inference pipelines, container chaining, and lightweight scoring containers are common patterns practitioners use to meet sub-second SLAs without reengineering training workflows.

Context and significance

Industry observers value case studies that show concrete tradeoffs between custom on-prem or Spark-based systems and cloud-managed inference. Per the Zalando post, the migration emphasizes runtime performance, operational simplicity, and onboarding speed as drivers for using managed services and platform tooling.

What to watch

Indicators readers can follow include latency percentiles after migration, instance cold-start times for the serving containers, how preprocessing is versioned separately from model artifacts, and whether the team reports cost or maintenance reductions. Reporting that shows end-to-end metrics post-deployment would make the case study more actionable for practitioners.
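As an illustration of the first indicator, a tail-latency target such as "99.9% of responses" can be checked against measured samples with a nearest-rank percentile. The sketch below is an assumption-laden example: the sample values and the 10 ms budget are invented, because the post only says "in the order of milliseconds" and gives no exact threshold.

```python
import math
from fractions import Fraction


def percentile_nearest_rank(samples, pct):
    """Return the nearest-rank percentile of samples (pct in (0, 100])."""
    if not samples:
        raise ValueError("samples must not be empty")
    # Fraction avoids float rounding, e.g. 99.9 becomes exactly 999/10.
    exact_pct = Fraction(str(pct))
    if not 0 < exact_pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(exact_pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def meets_target(samples_ms, pct, budget_ms):
    """True if the pct-th percentile latency is within the budget."""
    return percentile_nearest_rank(samples_ms, pct) <= budget_ms


# Invented samples: 1,000 requests, one slow outlier.
one_outlier = [5.0] * 999 + [500.0]
# Invented samples: 1,000 requests, two slow outliers.
two_outliers = [5.0] * 998 + [500.0] * 2

BUDGET_MS = 10.0  # hypothetical budget, not stated in the source

print(meets_target(one_outlier, 99.9, BUDGET_MS))
print(meets_target(two_outliers, 99.9, BUDGET_MS))
```

With 1,000 samples, a 99.9% target tolerates exactly one response over budget, so the second sample set fails the check. At hundreds of requests per second that allowance is used up quickly, which is why latency spikes and cold starts matter so much for this kind of target.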

Scoring Rationale

This is a practical engineering case study demonstrating managed inference and pipeline decomposition, useful to practitioners planning migrations. The material is valuable but dated, which reduces immediate novelty and impact.
