White House Considers Pre-Release Vetting for AI Models

Multiple outlets report the White House is discussing new executive actions that would create a government vetting regime for advanced AI models before public release, with reporting from Politico and The New York Times describing industry briefings and anonymous participants. National Economic Council Director Kevin Hassett said on Fox Business the administration is "studying possibly an executive order" to create a roadmap and a pre-release review process, per Politico and Fortune. Reporting by Fortune and The Washington Post ties the shift to security concerns raised by Anthropic's Mythos, which several outlets say exposed code vulnerabilities and accelerated deliberations. The White House has not issued a formal order; a spokesperson told Politico any official announcement would come from President Donald Trump and described current discussion as "speculation."

What happened

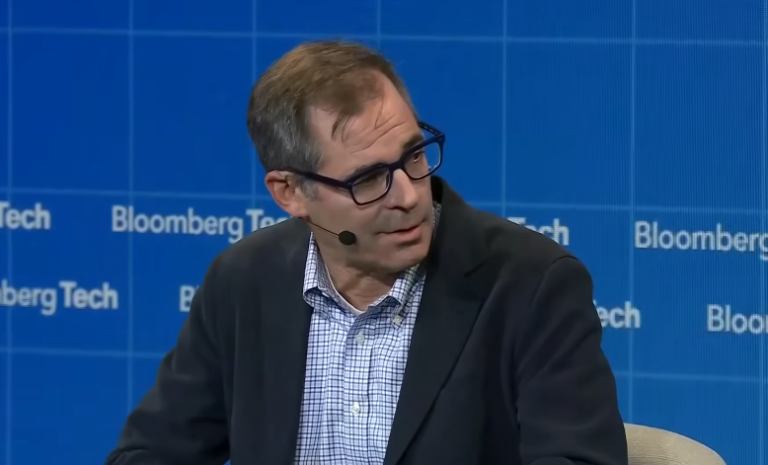

Multiple news organizations report the White House is weighing executive actions that would institute pre-deployment review of advanced AI systems. Politico, citing seven industry and policy sources granted anonymity, reports that administration discussions include an executive-order-style vetting regime that could require AI developers to obtain government approval before releasing frontier models. The New York Times and The Wall Street Journal similarly report deliberations over pre-release scrutiny. National Economic Council Director Kevin Hassett said on Fox Business, "We're studying possibly an executive order to give a clear roadmap to everybody about how this is gonna go, and how future AIs that also potentially create vulnerabilities should go through a process so that they're released into the wild after they've been proven safe - just like an FDA drug," as quoted by Politico and Fortune.

Technical details

Editorial analysis - technical context: Reporting from The Washington Post and the Wall Street Journal identifies Anthropic's Mythos as a proximate trigger for the policy reappraisal, describing Mythos as a new generation of models that can find and exploit software vulnerabilities at speed. For practitioners, this trend highlights that frontier model capabilities now intersect more directly with offensive security tasks, increasing attention from national-security stakeholders and raising the bar for risk assessment around capability releases.

What sources say about oversight

Fortune, citing an agency press release, reports that the administration's renamed agency, the Center for AI Standards and Innovation (CAISI), has signed agreements with several frontier developers and has completed more than 40 model evaluations. Politico and The New York Times report the White House has engaged companies including Microsoft, xAI, and Google DeepMind to enable government evaluation of models for national-security risk ahead of release. Multiple outlets note these discussions represent a shift from the administration's earlier deregulatory posture.

Context and significance

Industry context

News coverage frames the apparent policy reversal as driven primarily by escalating cybersecurity and national-security concerns, rather than by a move toward broad, EU-style AI governance. For AI practitioners, the salient implication is that capability-driven risk, especially where models can discover vulnerabilities or automate their exploitation, is moving to the center of U.S. policy debates. That could change expectations for pre-release documentation, red teaming, and voluntary or mandated model handovers to government evaluators.

What to watch

Observers should track whether the White House issues a formal executive order, which legal or administrative mechanism is used to implement vetting, and which models are deemed "frontier" or "high-risk." Also monitor any published criteria for evaluation, whether CAISI or a new body will operate reviews, and whether participating companies publish guidance for defensible pre-release processes. Finally, watch for congressional reaction and potential litigation over compelled model disclosures or pre-release blocks.

Scoring Rationale

This is a potentially industry-shaking policy development: administrative vetting of frontier models would affect product release processes, security practices, and compliance expectations for AI developers. Multiple major outlets report active deliberations, and a senior official has been quoted on the record, which increases the story's immediacy and relevance for practitioners.
