Veteran Developer Runs Transformer on 47-Year-Old PDP-11

Veteran Windows developer Dave Plummer demonstrated a working transformer called Attention 11 on a 47-year-old PDP-11 with a 6 MHz CPU and 64KB of RAM. The model, written in PDP-11 assembly by Damien Buret, learns a structural task, reversing an eight-digit sequence, chosen to expose the minimal algorithmic core of attention-based networks. The demo is intentionally small and slow but instructive: it shows that the mathematical primitives behind modern transformer models can be implemented and trained on extremely constrained hardware. For practitioners, the value is pedagogical rather than practical: the project clarifies how attention and sequence learning operate at the lowest level and highlights what changes when moving to contemporary hardware and model sizes.

What happened

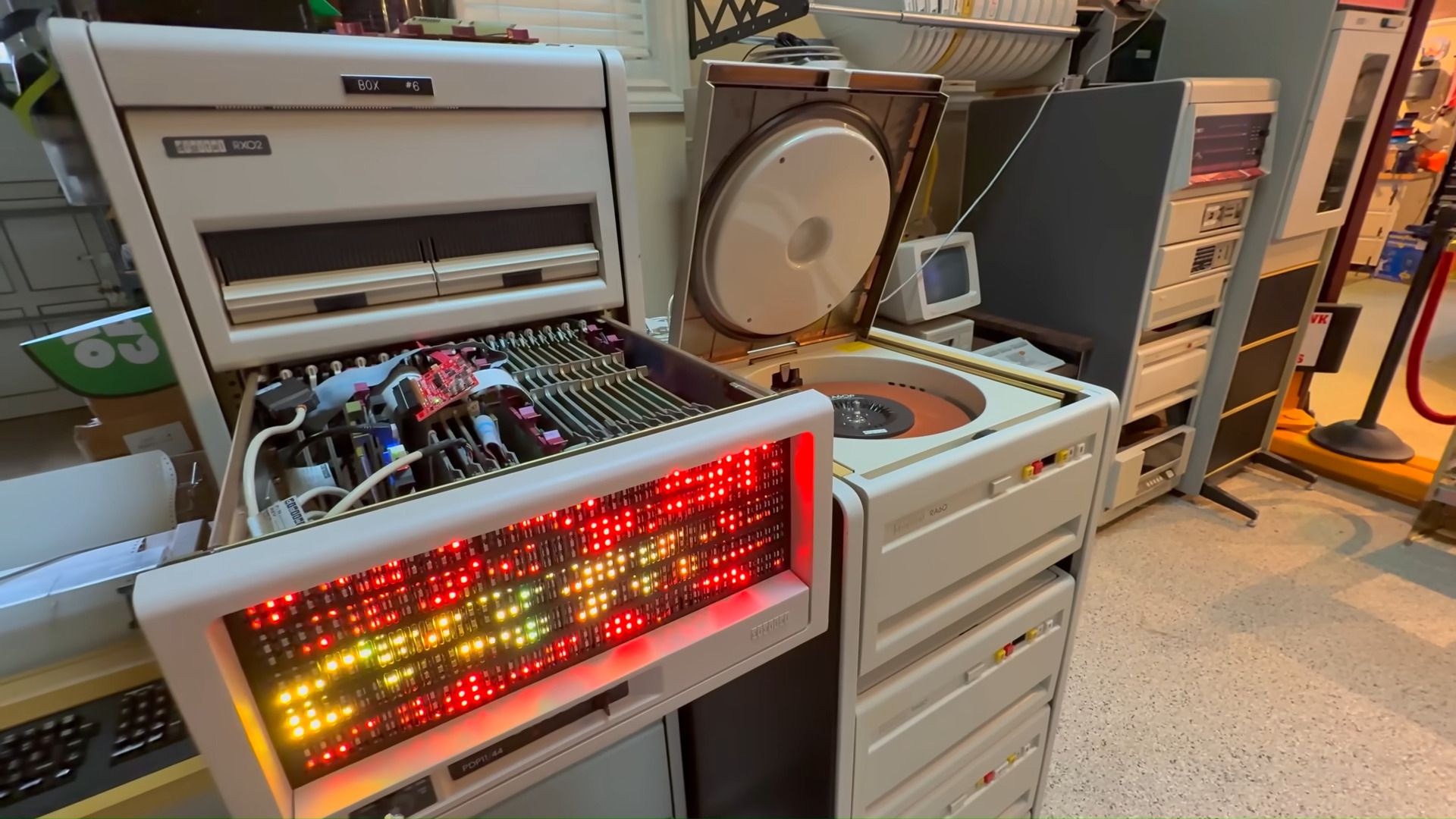

Veteran Windows developer Dave Plummer showcased a working transformer implementation on a 47-year-old PDP-11 running a 6 MHz CPU with 64KB of RAM. The model, Attention 11, was written in PDP-11 assembly by Damien Buret and was shown training on a simple sequence-reversal task, an exercise designed to reveal the algorithmic essence of attention. Plummer framed the project as a way to demystify AI, calling it a demonstration that transformers are reducible to basic operations that fit on historical hardware.

Technical details

The demo implements the attention mechanism and training loop in low-level PDP-11 assembly, constrained by extremely limited compute and memory. Key practical constraints and techniques used include:

- Memory frugality, keeping working state inside 64KB of addressable RAM

- Extremely low-cycle arithmetic and control flow to fit within a 6 MHz CPU budget

- A toy task, reversing an eight-digit sequence, that still requires learning structural generalization rather than rote memorization
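To make the toy task concrete, here is a minimal Python sketch of how reversal examples could be generated and scored. It is not the project's code (the original is PDP-11 assembly). The names `make_example` and `exact_match_accuracy` are hypothetical, and the digit alphabet 0-9 is an assumption based on the phrase "eight-digit sequence".

```python
import random

SEQ_LEN = 8  # eight-digit sequences, as described in the article


def make_example(rng):
    """Return (input_digits, target_digits); the target is the input reversed."""
    digits = [rng.randrange(10) for _ in range(SEQ_LEN)]  # assumed alphabet: 0-9
    return digits, digits[::-1]


def exact_match_accuracy(predict, rng, trials=1000):
    """Fraction of fresh random sequences that `predict` reverses exactly."""
    hits = 0
    for _ in range(trials):
        digits, target = make_example(rng)
        if predict(digits) == target:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    rng = random.Random(0)
    # A perfect "model" for reference; a trained network would replace this.
    print(exact_match_accuracy(lambda d: d[::-1], rng))
```

Under these assumptions there are 10^8 distinct inputs, far more than could be stored in 64KB, which is why a model that scores well on freshly sampled sequences must have learned the positional rule rather than memorized examples.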

The project does not claim parity with modern large language models. Instead, it isolates the core algorithmic components of transformers: attention score computation, state updates, and weight adjustment via gradient-like steps. Implementing these in assembly exposes the instruction-level costs and communication patterns that, when scaled, drive GPU and TPU architecture choices today.
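The attention score computation named above can be sketched in a few lines of plain Python. This is the standard single-head scaled dot-product formulation, shown only as an illustration: the article does not say which variant Attention 11 uses, so the scaling factor, the single head, and the function names `softmax` and `attention` are all assumptions.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors.

    Assumed textbook form; not taken from the Attention 11 source.
    """
    d = len(keys[0])
    out = []
    for q in queries:
        # One score per key: dot(q, k) / sqrt(d)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted sum of the value vectors
        out.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return out
```

Every step is a multiply, an add, an exponential, or a divide, which is the sense in which the mechanism reduces to basic operations. For the reversal task, a trained model would plausibly learn weights that make output position i attend mostly to input position 7 - i, though the article does not report the learned attention pattern.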

Context and significance

This is a pedagogical and engineering curiosity rather than a production advance. The demo matters because it strips away ecosystem complexity and shows that the math behind attention is not magic. For practitioners, that perspective is useful: it clarifies why modern models demand massive memory bandwidth, parallelism, and specialized numerical formats when scaled. The project also serves as a reminder that algorithmic clarity can guide efficient implementations and microarchitecture-aware optimizations.

What to watch

Use this as an educational reference when teaching or reasoning about transformer primitives, memory and compute trade-offs, and the minimal requirements for sequence learning. The next steps for similar projects would be measured extensions: profiling instruction-level hotspots, evaluating latency and energy per operation, or implementing low-precision arithmetic emulation to bridge to modern accelerators.
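As one illustration of what low-precision arithmetic emulation could look like, the sketch below models signed 16-bit fixed-point numbers with 8 fractional bits (Q8.8) in Python. The format is an assumption chosen because the PDP-11 is a 16-bit machine. The article does not state which number format the demo uses, and the helper names are hypothetical.

```python
FRAC_BITS = 8             # assumed Q8.8 layout: 8 integer bits, 8 fractional bits
SCALE = 1 << FRAC_BITS    # 256
INT16_MIN, INT16_MAX = -32768, 32767


def saturate(v):
    """Clamp to the signed 16-bit range instead of wrapping around."""
    return max(INT16_MIN, min(INT16_MAX, v))


def to_fixed(x):
    """Convert a float to Q8.8, rounding to the nearest representable value."""
    return saturate(int(round(x * SCALE)))


def to_float(a):
    """Convert a Q8.8 integer back to a float."""
    return a / SCALE


def fixed_mul(a, b):
    """Multiply two Q8.8 values; the product is rescaled by shifting right."""
    return saturate((a * b) >> FRAC_BITS)


def fixed_dot(xs, ys):
    """Dot product with a wide accumulator, saturated once at the end."""
    acc = sum(x * y for x, y in zip(xs, ys))
    return saturate(acc >> FRAC_BITS)
```

Comparing `to_float(fixed_dot(...))` against a floating-point dot product on the same inputs gives a quick measure of the quantization error a given format introduces.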

Scoring Rationale

This is an instructive technical demo that demystifies transformer algorithms and highlights low-level costs, but it is not a new model or performance milestone. It is primarily educational for practitioners.
