SWE-bench Updates Bash-Only Coding Leaderboard With New Model Rankings

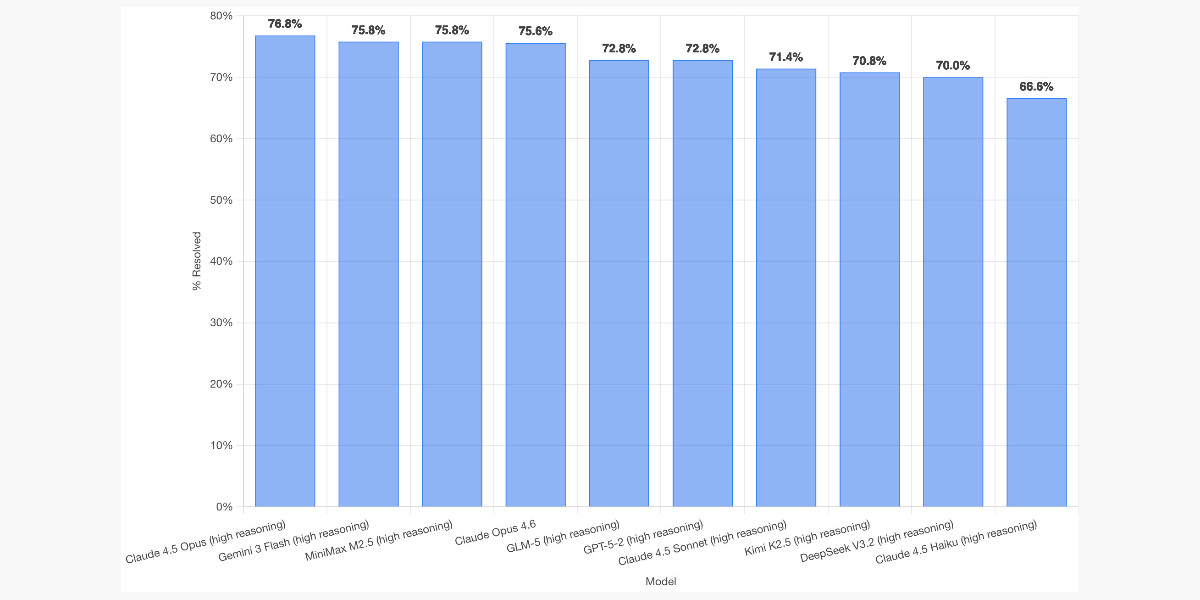

On 19 February 2026, SWE-bench published a fresh full run of its February 2025 'Bash Only' coding benchmark, evaluating models on 2,294 real-world problems drawn from 12 open-source repositories. Claude Opus 4.5 ranked first, followed by Gemini 3 Flash and MiniMax M2.5. OpenAI's GPT-5.2 placed sixth, and GPT-5.3-Codex was absent from the rankings. Every model ran under the same system prompt so that the results are directly comparable.

Scoring Rationale

Independent benchmarking under uniform conditions makes results more comparable and more useful for model selection, but the Bash-only workload limits how well the rankings generalize to broader coding tasks.
