Students Use AI Tools To Boost Study Productivity

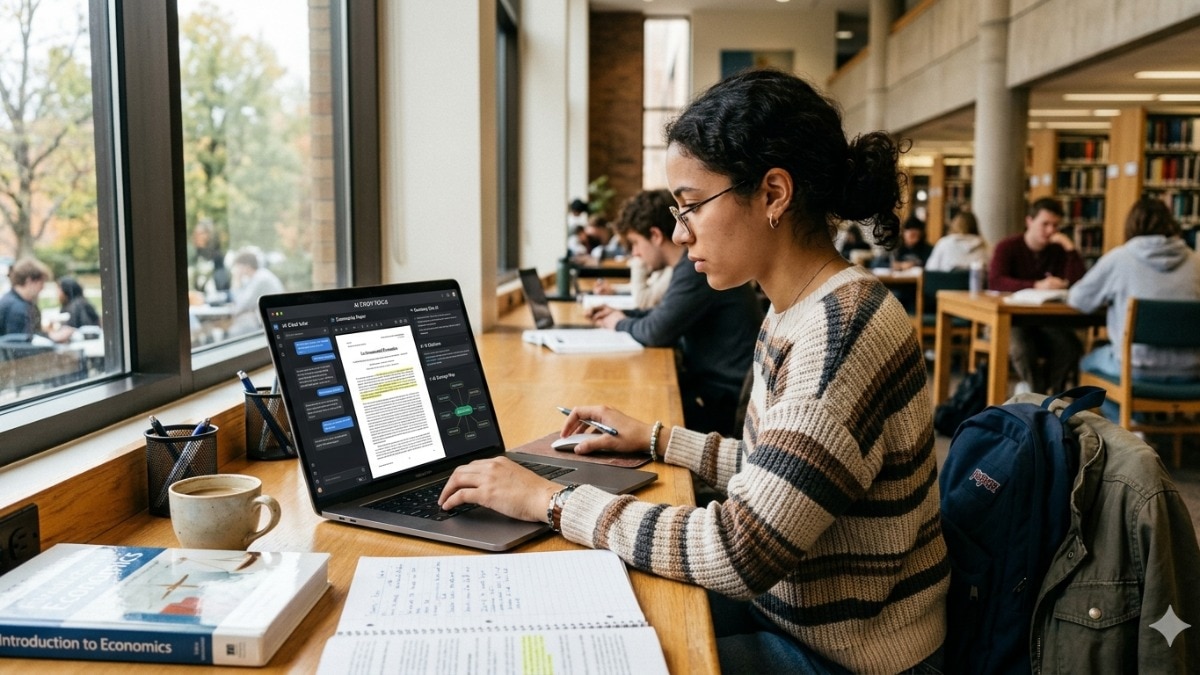

India Today reports that AI tools are increasingly part of student workflows in 2026 and highlights five utilities for study and coursework: ChatGPT, Google Gemini, Notion AI, Grammarly, and Quizlet AI. According to the article, students use them for brainstorming, summarising lectures, organising notes, improving writing, and adaptive practice, respectively. India Today also reports that experts cited in the piece urge responsible use of these tools. Editorial analysis: The story illustrates broader consumer adoption of large language models and productivity-integrated assistants, which raises practical questions about hallucinations, academic integrity, and data privacy for educational deployments.

What happened

India Today published a roundup on May 16, 2026, listing five AI tools students should know this year and describing common student use cases. It names ChatGPT as widely used for brainstorming, concept explanation, note drafting, language practice, and coding help. It describes Google Gemini as notable for its integration with Google Workspace apps such as Docs, Gmail, and Sheets, and for summarisation and real-time research support. The roundup also lists Notion AI for organising class notes and project management, Grammarly for grammar and clarity in academic writing, and Quizlet AI for flashcards, practice quizzes, and adaptive learning features.

Editorial analysis - technical context

The tools referenced reflect two converging trends in 2024-2026: wider availability of large language models (LLMs) as consumer-facing assistants, and tighter embedding of LLM functionality into productivity and learning platforms. For practitioners, this means more downstream apps will rely on pre-trained LLMs plus lightweight retrieval or fine-tuning layers, and adaptive-learning products will increasingly combine spaced-repetition algorithms with model-generated content. Observed patterns in similar product deployments include reliance on cached embeddings for search, server-side moderation and output checks to limit hallucinated responses, and incremental fine-tuning for domain relevance.
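To make the spaced-repetition idea concrete, here is a minimal sketch of a Leitner-style scheduler. It is an illustration only: the `Card` class, the `review` function, the five-box limit, and the doubling intervals are all assumptions for this example, not a description of how Quizlet AI or any other named product schedules reviews. In a real product the `prompt` and `answer` fields might hold model-generated content.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MAX_BOX = 5  # assumed upper bound on Leitner boxes (hypothetical)


@dataclass(frozen=True)
class Card:
    prompt: str   # question text, possibly model-generated
    answer: str   # reference answer
    box: int = 1  # Leitner box; a higher box means a longer review interval
    due: date = date(2026, 1, 1)


def review(card: Card, correct: bool, today: date) -> Card:
    """Return a rescheduled copy of the card after one review.

    A correct answer promotes the card one box (up to MAX_BOX);
    an incorrect answer sends it back to box 1.
    The next interval is 2 ** (box - 1) days: 1, 2, 4, 8, 16.
    """
    box = min(card.box + 1, MAX_BOX) if correct else 1
    interval_days = 2 ** (box - 1)
    return Card(card.prompt, card.answer, box, today + timedelta(days=interval_days))
```

For example, a card in box 1 that is answered correctly moves to box 2 and comes due two days later; a later miss returns it to box 1 with a one-day interval.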

Editorial analysis - risks and operational considerations

Industry observers note that educational use cases intensify three operational trade-offs: model hallucination vs. helpfulness, privacy of student data vs. personalised support, and academic-integrity concerns vs. efficiency gains. For teams building or evaluating educational tooling, validation datasets, prompt-safety layers, and provenance metadata for generated answers are typical mitigations deployed across the sector.
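One way provenance metadata for generated answers could look is sketched below. The field names (`model_id`, `prompt_sha256`, `source_ids`, `generated_at`) and the `attach_provenance` helper are hypothetical choices for illustration, not a standard schema or any vendor's format. Hashing the prompt rather than storing it is one possible way to reduce how much student text is retained.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Provenance:
    model_id: str          # which model produced the answer (assumed identifier)
    prompt_sha256: str     # hash of the prompt, so the raw text need not be stored
    source_ids: List[str]  # IDs of retrieved documents the answer drew on
    generated_at: str      # ISO 8601 timestamp in UTC


def attach_provenance(answer: str, prompt: str, model_id: str,
                      source_ids: List[str],
                      now: Optional[datetime] = None) -> dict:
    """Bundle a generated answer with a JSON-serialisable provenance record."""
    now = now or datetime.now(timezone.utc)
    prov = Provenance(
        model_id=model_id,
        prompt_sha256=hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        source_ids=list(source_ids),
        generated_at=now.isoformat(),
    )
    return {"answer": answer, "provenance": asdict(prov)}


if __name__ == "__main__":
    record = attach_provenance(
        "Mitochondria produce ATP.",
        "What do mitochondria do?",
        model_id="example-model-v1",   # hypothetical model name
        source_ids=["notes-week3"],    # hypothetical document ID
    )
    print(json.dumps(record, indent=2))
```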

What to watch

Indicators worth monitoring include deeper LLM integration into campus productivity stacks, adoption of provenance and citation features in study-assistant apps, university policy updates on AI-assisted work, and growth in tools that combine adaptive learning with model-generated content. Observers should also track vendor disclosures about training-data sources and model-evaluation metrics relevant to educational correctness.

For practitioners

Data scientists and ML engineers working on education products should prioritise measurable correctness (task-specific accuracy), clear provenance for generated content, and privacy-preserving architectures when handling student data. Industry tooling around watermarking, detection, and explainability will remain useful components in evaluation pipelines.
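As a rough illustration of task-specific accuracy, the sketch below scores model answers against a validation set using normalised exact match. Exact match is only one possible metric and is assumed here for simplicity; free-form explanations would typically need a more forgiving measure. The function names are hypothetical.

```python
from typing import Sequence


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences do not count as errors."""
    return " ".join(text.lower().split())


def exact_match_accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Fraction of predictions that equal their reference answer after normalisation."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0  # convention chosen for this sketch: an empty set scores 0.0
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)
```

Tracking a score like this per subject or question type, rather than as a single aggregate, makes it easier to see where a study assistant is unreliable.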

Scoring Rationale

The story documents broad consumer adoption of mainstream LLM-backed tools in education, which matters for practitioners building or evaluating learning products. It is not a frontier-model release or major policy event, so importance is moderate.
