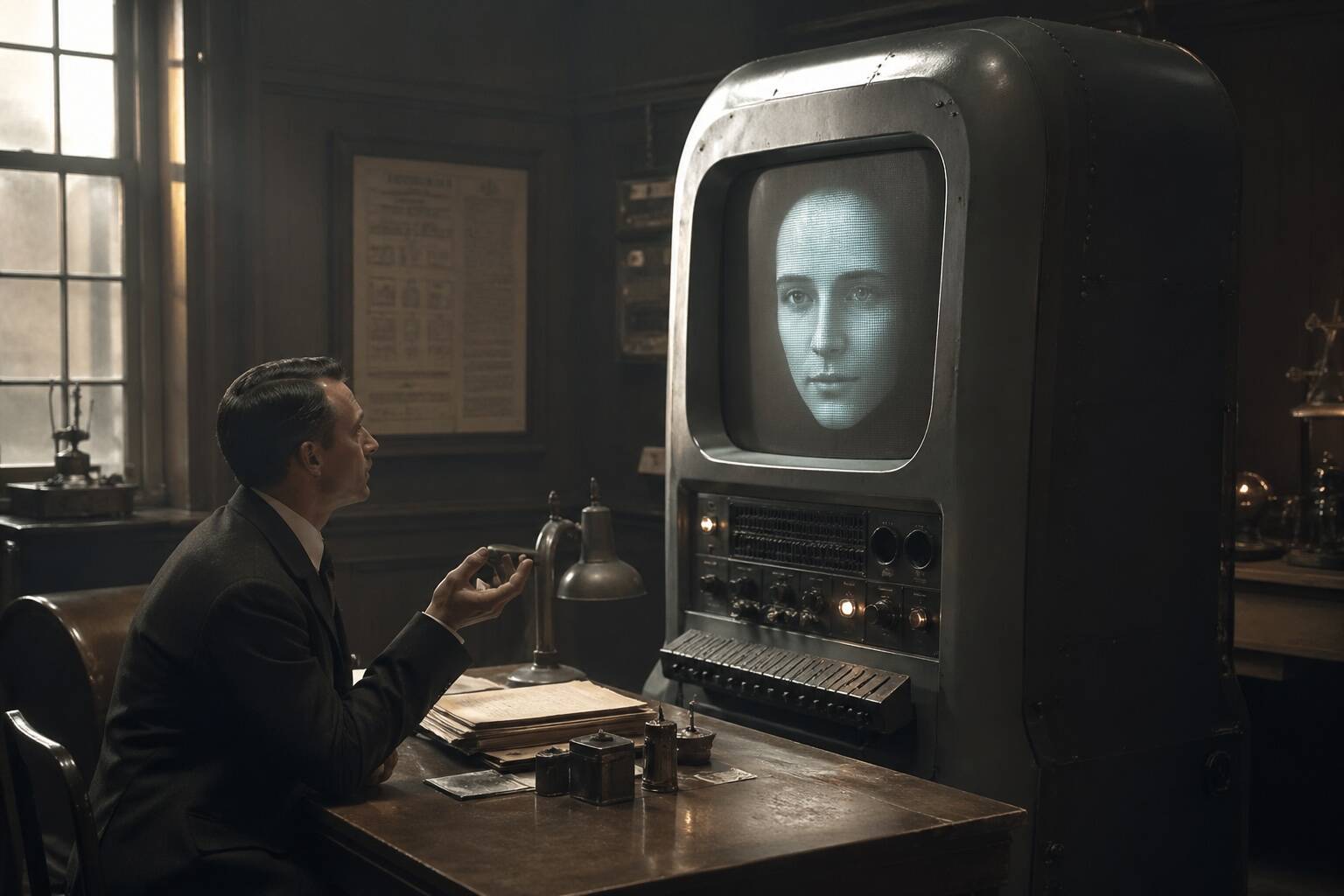

Researchers release Talkie vintage language model

Per The Register, a trio of AI researchers released a 13-billion-parameter language model called Talkie, trained only on English-language material published before the end of 1930. The training corpus reportedly consists of digital scans of books, newspapers, periodicals, scientific journals, patents, and case law. The model therefore lacks knowledge of events after 1930, so queries about World War II, the election of Franklin D. Roosevelt, or Amelia Earhart fall outside its training data. The Register quotes the creators: "Talkie is the largest vintage language model we are aware of, and we plan to continue scaling significantly." Editorial analysis: this release emphasizes research into dataset boundaries and vintage corpora as a path to studying model behavior.

What happened

Per The Register, a trio of AI researchers released a 13-billion-parameter language model named Talkie that was trained solely on digital scans of English-language books, newspapers, periodicals, scientific journals, patents, and case law published before the end of 1930. The Register reports that the cutoff was chosen because 1930 is the current public-domain cutoff in the United States. The Register quotes the developers: "Talkie is the largest vintage language model we are aware of, and we plan to continue scaling significantly." The project writeup quoted in The Register also says, "These models are fascinating conversation partners ... but we are also excited by the possibility that the careful study of the behaviors and capabilities of vintage LMs will advance our understanding of AI in general."

Technical details

Per The Register, Talkie is a 13-billion-parameter model trained on a pre-1931 corpus of scanned print material. The article's description emphasizes the corpus composition and the temporal cutoff rather than any novel architecture or training technique. The Register also cites the project writeup's reference to a proposed AGI test, attributed to Google DeepMind co-founder and CEO Demis Hassabis, that involves limiting a model's knowledge to a 1911 cutoff for certain evaluations.
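
To make the idea of a temporal cutoff concrete, here is a minimal Python sketch of filtering a corpus by publication date. It is an illustration only, not the Talkie team's pipeline, which the article does not describe. The `Document` record, the `within_cutoff` and `filter_corpus` helpers, and the sample entries are all hypothetical, and the sketch assumes that documents with an unknown publication date are dropped rather than guessed at.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Optional

# Assumed boundary matching the reported "before the end of 1930" cutoff.
CUTOFF = date(1930, 12, 31)


@dataclass(frozen=True)
class Document:
    """Hypothetical corpus record; the real schema is not described in the article."""
    title: str
    published: Optional[date]  # None when the publication date is unknown
    text: str


def within_cutoff(doc: Document, cutoff: date = CUTOFF) -> bool:
    """Return True only for documents with a known date on or before the cutoff."""
    return doc.published is not None and doc.published <= cutoff


def filter_corpus(docs: Iterable[Document], cutoff: date = CUTOFF) -> List[Document]:
    """Keep the documents that fall inside the temporal window, preserving order."""
    return [doc for doc in docs if within_cutoff(doc, cutoff)]


# Made-up sample records used only to exercise the filter.
sample = [
    Document("Patent filing", date(1912, 5, 3), "..."),
    Document("Newspaper issue", date(1930, 12, 31), "..."),
    Document("Later journal article", date(1931, 1, 1), "..."),
    Document("Undated pamphlet", None, "..."),
]

kept = filter_corpus(sample)
for doc in kept:
    print(doc.title, doc.published)
```

Dropping undated records is the conservative choice in this sketch: a single mislabeled later document would leak post-cutoff knowledge into the training set, which is the variable such a model is meant to control.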

Industry context

Editorial analysis: Researchers and practitioners have increasingly used constrained or domain-limited corpora to surface how training data shapes model outputs, biases, and hallucination modes. Vintage models like Talkie provide a controlled setting to compare behavior against contemporary models, isolate temporal knowledge effects, and study historical language patterns without modern internet content confounders.

Context and significance

Editorial analysis: For interpretability and dataset-curation research, a deliberately time-limited model is a clean experimental variable. It reduces the search space for provenance analysis and makes some safety tradeoffs explicit by excluding later harmful or extremist content, while also removing useful factual knowledge. The Register frames Talkie as part of a small but growing set of vintage LMs; the article notes other vintage models exist and reports the authors consider Talkie the largest they know of.

What to watch

Watch for published evaluation results comparing Talkie to contemporary models on hallucination patterns, historical understanding, and linguistic drift. Also watch for any dataset documentation or benchmarks shared by the Talkie team that would enable replication and cross-model comparisons.

Scoring rationale

This is a notable research release that creates a useful controlled setting for studying training-data temporal effects and interpretability. It is not a frontier-model breakthrough but is practically valuable to researchers and practitioners focused on datasets and model behavior.
