Researchers Introduce Cy-Trust Framework for Autonomous Networks

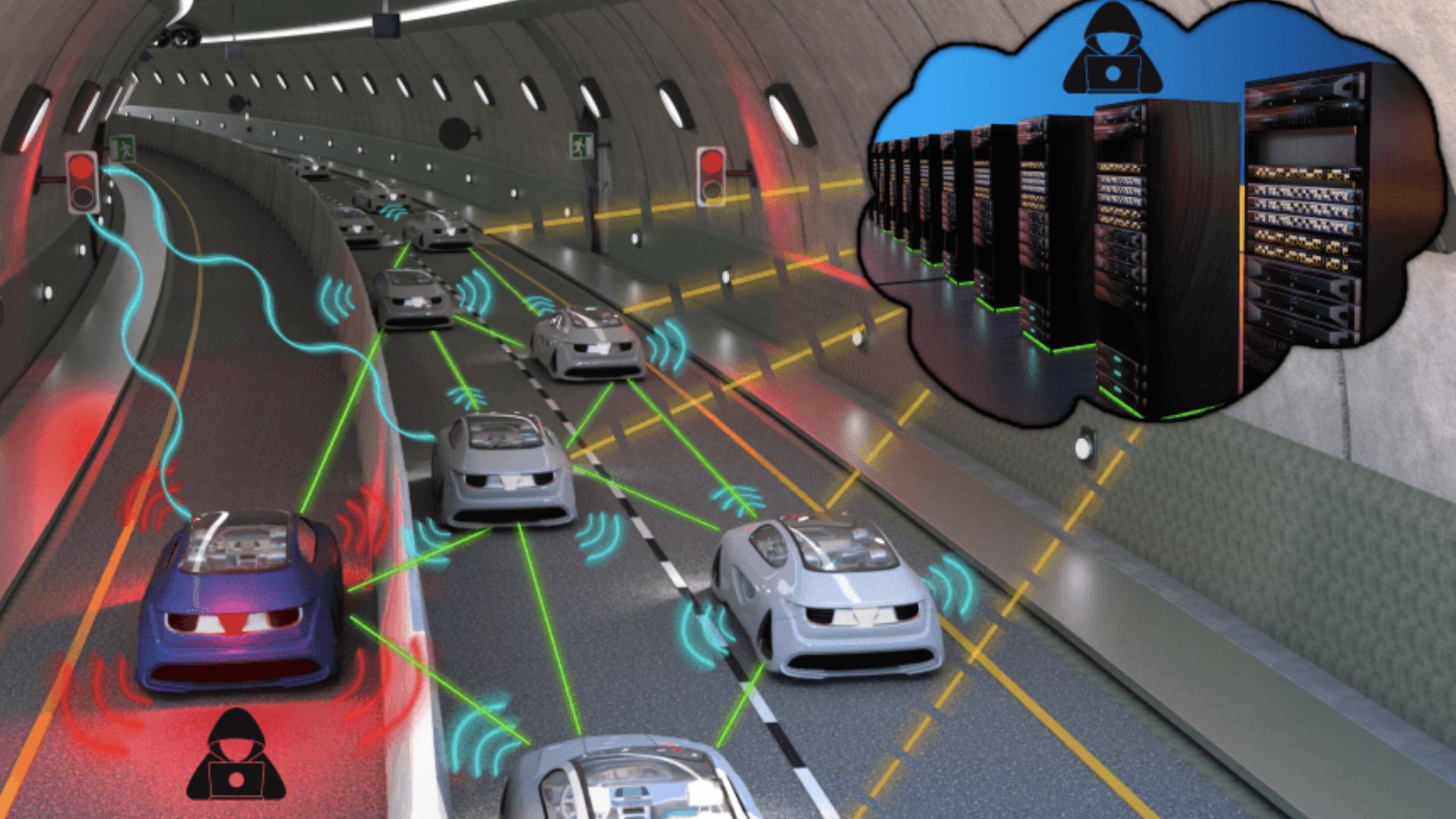

Harvard SEAS researchers introduced cy-trust, a quantitative trust metric for connected autonomous agents. It assigns each information source a score between 0 and 1 that weights the source's data before that data influences decisions. The framework targets risks specific to cyber-physical systems, such as a compromised vehicle spoofing traffic data or a robot reporting incorrect location information, by converting source reliability into a numeric influence factor. Published in the Proceedings of the IEEE and described publicly on April 6, 2026, cy-trust shifts the security focus from access control to real-time decision hygiene: each agent can discount, ignore, or prioritize messages based on measured trustworthiness.

What happened

Harvard John A. Paulson School of Engineering and Applied Sciences researchers released a framework called “cy-trust” that assigns a numeric trust value (0–1) to information sources within networks of autonomous agents. The framework is intended to make fleets of self-driving cars, delivery robots, and other cyber-physical systems more resilient to faulty or malicious peers. The work was published in the Proceedings of the IEEE and described publicly on April 6, 2026.

Technical context

Connected autonomous systems make decisions based on sensor fusion and inter-agent communications. Traditional cybersecurity largely focuses on access control, authentication, and preventing unauthorized commands; it does not directly manage how an agent filters or weights potentially unreliable external observations in real time. Cy-trust operationalizes trust as a scalar weight that determines how much influence a remote agent’s data has on a receiving agent’s control or planning pipeline.
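The article does not specify how a cy-trust score enters a fusion or planning pipeline. As a minimal sketch, assuming the score is used directly as the weight in a weighted average of peer reports, the idea looks like this; the `Report` type, `trust_weighted_estimate`, and the traffic-speed scenario are hypothetical illustrations, not the authors' code.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Report:
    """One peer's observation plus the receiver's trust in that peer (hypothetical schema)."""
    source_id: str
    value: float  # e.g. reported traffic speed on a road segment, in km/h
    trust: float  # trust score in [0, 1]; 0 = no influence, 1 = full influence


def trust_weighted_estimate(reports: List[Report]) -> Optional[float]:
    """Fuse peer reports into one estimate, weighting each report by its trust score.

    Returns None when no report carries any trust, so the caller can fall back
    to its own sensors instead of dividing by zero.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for r in reports:
        if not 0.0 <= r.trust <= 1.0:
            raise ValueError(f"trust for {r.source_id} must lie in [0, 1], got {r.trust}")
        weighted_sum += r.trust * r.value
        total_weight += r.trust
    if total_weight == 0.0:
        return None
    return weighted_sum / total_weight
```

With honest peers reporting 50 and 52 km/h at trust 0.9 and 0.8, and a compromised peer reporting 5 km/h at trust 0.05, the fused estimate stays near 49.6 km/h, whereas an unweighted mean would drop to about 35.7 km/h.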

Key details from sources

Cy-trust returns a numerical trust score between zero and one for each source of information; that score modulates how much incoming messages influence an agent’s internal state. The team motivates the framework with concrete failure modes: a hacked vehicle broadcasting false traffic conditions that trigger dangerous route changes in other vehicles, or a rescue robot reporting incorrect locations that misdirect its teammates. The authors emphasize resilience and draw on lessons from internet-scale security. “Cyber-physical systems are going to become very pervasive,” said Gil, a co-author, adding that the question is how to secure these systems and ensure their resilience in the real world.
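One way to read "modulates the influence of incoming messages on an agent’s internal state" is as a scaled correction step. The sketch below assumes a simple exponential-smoothing update of a teammate's believed position, in the spirit of the rescue-robot example; `trust_scaled_update`, `Position`, and the `gain` constant are hypothetical and not taken from the paper.

```python
from typing import Tuple

# A 2-D position estimate, e.g. a teammate's location in metres (hypothetical frame).
Position = Tuple[float, float]


def trust_scaled_update(
    belief: Position, reported: Position, trust: float, gain: float = 0.5
) -> Position:
    """Nudge the current belief toward a reported position.

    The correction is scaled by trust * gain: trust = 0 leaves the belief
    unchanged (the message is ignored), trust = 1 applies the full nominal gain.
    `gain` is a hypothetical tuning constant, not a cy-trust parameter.
    """
    if not 0.0 <= trust <= 1.0:
        raise ValueError(f"trust must lie in [0, 1], got {trust}")
    if not 0.0 <= gain <= 1.0:
        raise ValueError(f"gain must lie in [0, 1], got {gain}")
    step = trust * gain
    return (
        belief[0] + step * (reported[0] - belief[0]),
        belief[1] + step * (reported[1] - belief[1]),
    )
```

With the default gain, a fully trusted teammate's report moves the belief halfway toward it, while a likely spoofed report at trust 0.1 moves the belief only 5% of the way, which limits how far a single bad message can misdirect the robot.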

Why practitioners should care

For ML engineers and robotics teams, cy-trust reframes a practical integration problem: how to combine model uncertainty, provenance, reputation, and observed behavior into a single decision-weighting parameter. Implementing such a metric can reduce cascading failures in multi-agent systems, improve the robustness of decentralized perception stacks, and provide a concrete signal for triggering fallbacks and graceful degradation. It is relevant to architects building V2X systems, teams working on distributed SLAM, and mixed human-robot operations where one agent’s error can propagate.
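The article names the ingredients (model uncertainty, provenance, reputation, observed behavior) but not how cy-trust combines them. The sketch below assumes a weighted geometric mean, a swapped-in choice rather than the authors' method, plus a simple fallback rule for graceful degradation. The signal names, `DEFAULT_WEIGHTS`, `combined_trust`, `should_fall_back`, and the 0.3 threshold are all hypothetical.

```python
import math
from typing import Mapping

# Hypothetical component weights; the article does not describe cy-trust's
# actual inputs or how it weights them.
DEFAULT_WEIGHTS: Mapping[str, float] = {
    "model_confidence": 1.0,  # sender's self-reported uncertainty, mapped to [0, 1]
    "provenance": 2.0,        # e.g. authentication/attestation passed (1) or not (0)
    "reputation": 1.0,        # long-run agreement history with this sender
    "behavior": 1.5,          # consistency of the message with local sensing
}


def combined_trust(
    signals: Mapping[str, float], weights: Mapping[str, float] = DEFAULT_WEIGHTS
) -> float:
    """Collapse per-signal scores in [0, 1] into one trust score in [0, 1].

    Uses a weighted geometric mean, so any single signal at 0 (for example a
    failed provenance check) forces the combined score to 0.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0.0:
        raise ValueError("weights must sum to a positive value")
    log_sum = 0.0
    for name, weight in weights.items():
        score = signals[name]
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1], got {score}")
        if score == 0.0:
            return 0.0
        log_sum += weight * math.log(score)
    return math.exp(log_sum / total_weight)


def should_fall_back(peer_trust: Mapping[str, float], threshold: float = 0.3) -> bool:
    """Graceful degradation: rely on onboard sensing if no peer clears the threshold."""
    return all(t < threshold for t in peer_trust.values())
```

A geometric mean is stricter than an arithmetic mean: a message that fails provenance checks gets zero trust no matter how plausible its content looks, which suits spoofing scenarios. Note that `should_fall_back` also returns True when there are no peers at all.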

What to watch

Look for follow-up work showing how cy-trust scores are computed (statistical, learning-based, reputation-based, or hybrid), how they integrate with existing sensor-fusion/estimation filters, and real-world demonstrations at scale. Evaluate how the framework interacts with adversarial models and whether it requires centralized attestation, secure hardware, or distributed reputation stores. Practical adoption will hinge on clear metrics for false-positive/false-negative trust assignment and low-latency computation suitable for embedded platforms.
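To make the reputation-based option concrete, here is a sketch using the beta reputation model, a standard technique in which trust is the mean of a Beta distribution over a sender's confirmed and contradicted messages. It is a swapped-in example, not the published cy-trust computation; the `BetaReputation` class and its `forgetting` parameter are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class BetaReputation:
    """Trust score for one sender, from counts of confirmed vs contradicted messages.

    Trust is the mean of Beta(agreements + 1, disagreements + 1), so a sender
    with no history starts at 0.5 (maximal uncertainty).
    """

    agreements: float = 0.0     # messages later confirmed by independent evidence
    disagreements: float = 0.0  # messages later contradicted by independent evidence
    forgetting: float = 1.0     # in (0, 1]; values below 1 down-weight old evidence

    def __post_init__(self) -> None:
        if not 0.0 < self.forgetting <= 1.0:
            raise ValueError(f"forgetting must lie in (0, 1], got {self.forgetting}")

    def record(self, confirmed: bool) -> None:
        # Decay existing evidence first, so a sender that turns malicious after a
        # long honest history loses trust faster when forgetting < 1.
        self.agreements *= self.forgetting
        self.disagreements *= self.forgetting
        if confirmed:
            self.agreements += 1.0
        else:
            self.disagreements += 1.0

    @property
    def trust(self) -> float:
        return (self.agreements + 1.0) / (self.agreements + self.disagreements + 2.0)
```

After 8 confirmed and 2 contradicted messages with no forgetting, `trust` is (8 + 1) / (10 + 2) = 0.75. A forgetting factor below 1 is one possible response to adversarial senders: it bounds how long a compromised agent can coast on earlier good behavior.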

Scoring Rationale

The framework comes from a credible source (Harvard SEAS, published in the Proceedings of the IEEE) and is highly relevant to autonomous systems engineering. It is moderately novel and broadly applicable across connected vehicles and robots, but its practical actionability depends on forthcoming algorithmic details and integration studies.
