OpenAI Updates ChatGPT Default to GPT-5.5 Instant

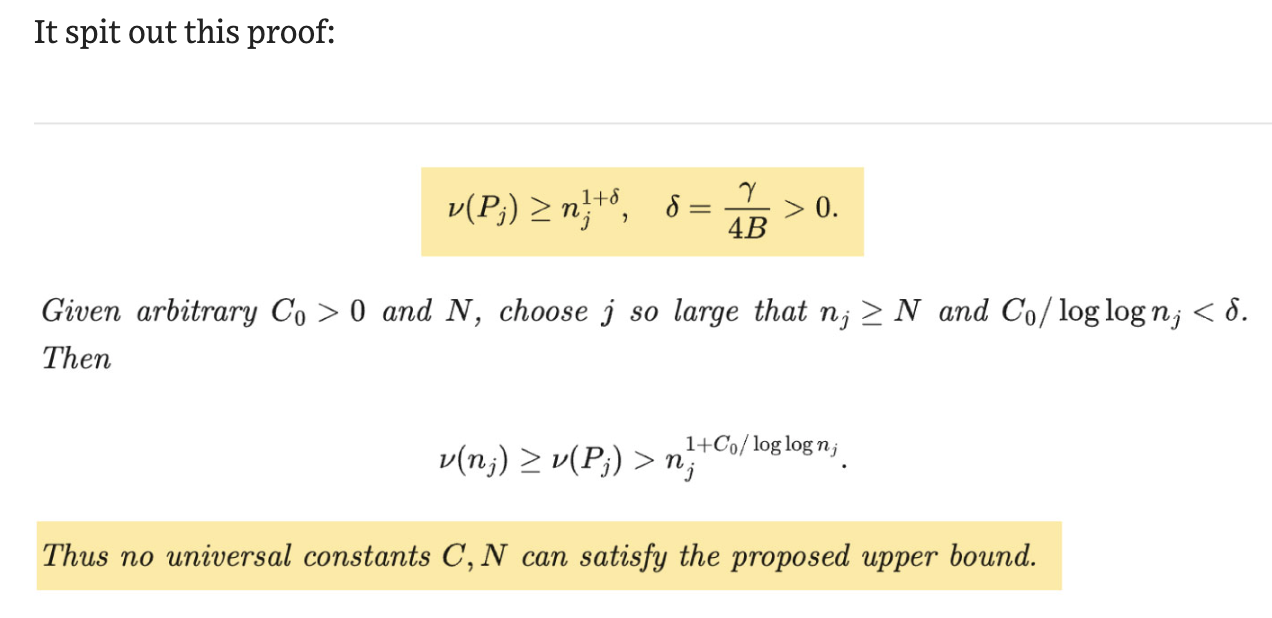

According to OpenAI's announcement, ChatGPT's default model is now GPT-5.5 Instant, a fast, low-latency model that the company reports is "smarter and more accurate." Per OpenAI, internal evaluations show 52.5% fewer hallucinated claims on high-stakes prompts versus GPT-5.3 Instant, and 37.3% fewer inaccurate claims on user-flagged hard conversations. Tech outlets report that GPT-5.5 Instant improves multimodal reasoning, image analysis, and STEM performance; TechCrunch cites an AIME 2025 score of 81.2 versus 65.4 for the older model. OpenAI is rolling the model out as the default for all ChatGPT users and exposing it through the API under the chat-latest alias. Personalization features and memory-source surfacing are initially limited to paid tiers, with a wider rollout planned, per OpenAI and reporting by MacRumors and TechCrunch.

What happened

According to OpenAI's blog post, ChatGPT's default model has been updated to GPT-5.5 Instant, replacing GPT-5.3 Instant for logged-in users. OpenAI wrote that the model delivers smarter, clearer, and more personalized responses and reported internal evaluation gains including 52.5% fewer hallucinated claims on high-stakes prompts and 37.3% fewer inaccurate claims on difficult, user-flagged conversations compared with GPT-5.3 Instant. TechCrunch reported benchmark improvements as well, citing an AIME 2025 math test score of 81.2 for the new model versus 65.4 for the predecessor and higher performance on the MMMU-Pro multimodal reasoning benchmark (76 vs. 69.2).
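
To put the reported benchmark numbers on a common footing, the short sketch below converts the TechCrunch-cited scores into absolute point gains and relative improvements. It uses only the figures quoted above; the helper name `relative_gain` is illustrative. The hallucination figures from OpenAI are already stated as relative reductions, so they are not recomputed here.

```python
def relative_gain(new: float, old: float) -> float:
    """Return the improvement of `new` over `old` as a percentage of `old`."""
    return (new - old) / old * 100.0


# (new model score, predecessor score), as cited by TechCrunch in the article.
scores = {
    "AIME 2025": (81.2, 65.4),
    "MMMU-Pro": (76.0, 69.2),
}

for name, (new, old) in scores.items():
    print(f"{name}: +{new - old:.1f} points, {relative_gain(new, old):.1f}% relative")
```

On these figures, the AIME 2025 gain is about 15.8 points (roughly 24% relative) and the MMMU-Pro gain is about 6.8 points (roughly 10% relative).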

Technical details

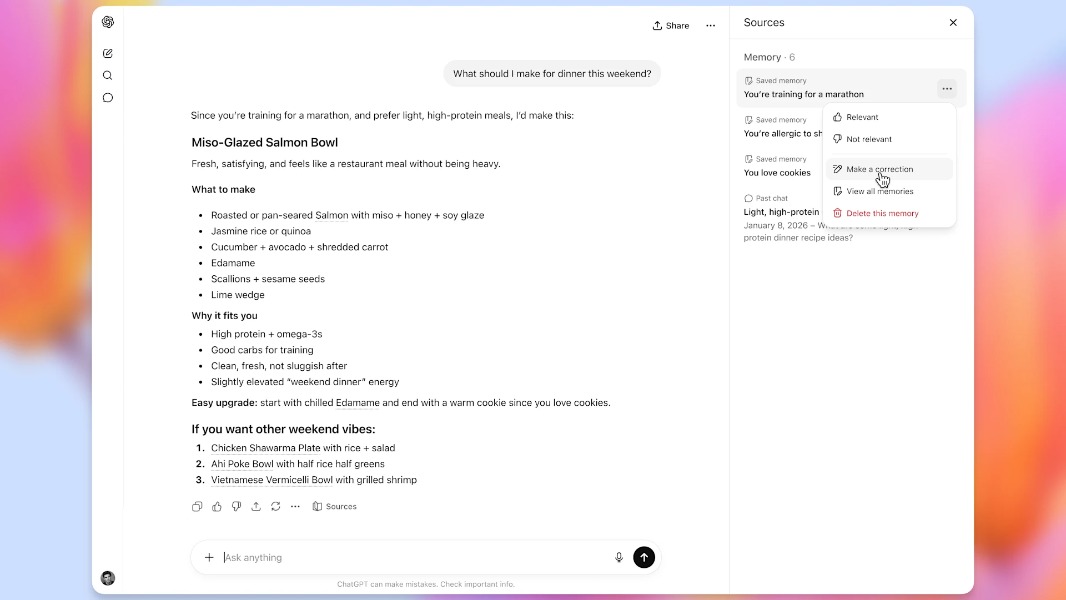

Per OpenAI documentation and the developer model page, GPT-5.5 supports text and image input, offers a large context window (documented on the API page), and is available via Chat Completions, Responses, Realtime, and other endpoints. OpenAI's help article explains that ChatGPT's model selector now routes between Instant, Thinking, and Pro variants; Instant may auto-switch to GPT-5.5 Thinking for harder tasks while preserving low-latency responses when appropriate. OpenAI and its deployment-safety materials describe strengthened safety training for long, high-stakes conversations. They also describe added transparency: ChatGPT surfaces memory sources that show which past chats, files, or Gmail items the model used to generate an answer, according to OpenAI and reporting by MacRumors and TechCrunch.

Industry context

Editorial analysis: Companies that update a widely used default model usually aim to improve accuracy and reduce user friction. Moving the default to a model with measured reductions in hallucination and better context handling means practitioners who rely on off-the-shelf behavior get those gains without changing anything. For developers, the chat-latest API alias and snapshot/versioning support (documented on OpenAI's developer pages) mean that applications tracking the alias pick up the new behavior quickly, while teams that need reproducible behavior can pin a specific snapshot.
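
The alias-versus-snapshot choice can be made explicit in application configuration. The following is a minimal sketch, not OpenAI's API: it only decides which model identifier an application would send. The `chat-latest` alias comes from the reporting above; the environment variable `APP_PINNED_MODEL` and the snapshot name in the usage note are hypothetical placeholders.

```python
import os
from typing import Mapping, Optional

# Alias named in the article; it follows whatever the current default model is.
DEFAULT_ALIAS = "chat-latest"


def resolve_model(env: Optional[Mapping[str, str]] = None) -> str:
    """Return a pinned snapshot if one is configured, else the moving alias.

    APP_PINNED_MODEL is a hypothetical variable name used for illustration.
    """
    env = os.environ if env is None else env
    pinned = env.get("APP_PINNED_MODEL", "").strip()
    return pinned or DEFAULT_ALIAS


if __name__ == "__main__":
    print(resolve_model())
```

With no variable set, the function returns `chat-latest`, so the application tracks the default. Setting `APP_PINNED_MODEL` to a snapshot identifier taken from the provider's model list (for example a placeholder such as `example-snapshot-id`) keeps behavior fixed until the team deliberately changes it.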

Practical implications for practitioners

Editorial analysis: The combination of better factuality on high-stakes prompts and more robust multimodal reasoning suggests fewer manual correction loops in applications that use ChatGPT for knowledge work, legal or medical summarization, and STEM assistance. However, practitioners should note that the company documents different capability tiers (Instant, Thinking, Pro) and that personalization and memory-source features are gated initially by subscription tier, which affects access patterns during early rollout.

What to watch

- Adoption indicators: whether major third-party integrations and enterprise deployments switch their chat-latest alias and how quickly they test for regressions.

- Benchmark reproducibility: independent benchmark runs for AIME and MMMU-Pro by third parties to validate TechCrunch-cited results.

- API pricing and token accounting: developer documentation notes input/output pricing uplifts for GPT-5.5, and practitioners should track cost/performance trade-offs for long-context use cases.
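
For the token-accounting point, a rough per-request cost estimate can be sketched as below. The article does not state actual prices, so the per-million-token rates and token counts in the example are placeholder assumptions only; substitute the figures from the provider's pricing page before drawing conclusions.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
) -> float:
    """Estimate the cost of one request from token counts and per-million rates."""
    input_cost = input_tokens / 1_000_000 * price_in_per_million
    output_cost = output_tokens / 1_000_000 * price_out_per_million
    return input_cost + output_cost


if __name__ == "__main__":
    # Placeholder values for illustration only; NOT real GPT-5.5 pricing.
    cost = estimate_cost(
        input_tokens=100_000,       # a long-context prompt
        output_tokens=2_000,        # a short answer
        price_in_per_million=1.00,  # hypothetical input rate
        price_out_per_million=4.00, # hypothetical output rate
    )
    print(f"Estimated cost per request: {cost:.4f}")
```

Running the same calculation with old and new rates side by side shows how a pricing uplift interacts with prompt length, which matters most for long-context workloads where input tokens dominate.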

Reported sources

OpenAI's GPT-5.5 announcement and developer documentation, OpenAI help center pages, reporting from TechCrunch, MacRumors, and other outlets.

Scoring Rationale

This is a notable model upgrade with reported reductions in hallucination and improved benchmark scores that affect both end users and developers. It changes default behavior for a widely used product and updates API defaults, making it important for practitioners to re-evaluate integrations and testing.
