OpenAI launches GPT-5-class realtime voice models

OpenAI introduced three realtime audio models - GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper - that let developers orchestrate reasoning, translation, and transcription in live voice flows. Let's Data Science quotes OpenAI as describing "GPT-Realtime-2, our first voice model with GPT-5-class reasoning." Outlets report conflicting context-window figures for GPT-Realtime-2: Let's Data Science cites a 128k token window, while CryptoBriefing reports a 256k token window. Economic Times lists pricing of $32 per million audio input tokens for GPT-Realtime-2, $0.034 per minute for GPT-Realtime-Translate, and $0.017 per minute for GPT-Realtime-Whisper. CryptoBriefing also reported a market reaction, saying Bitcoin climbed to $122K after the announcement. Customers testing the models include Zillow, Priceline, and Deutsche Telekom, according to Economic Times.

What happened

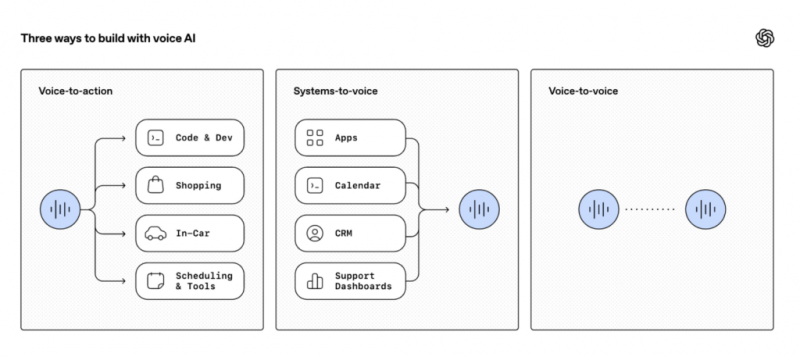

OpenAI added three realtime audio models to its developer API: GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper. According to Let's Data Science, OpenAI wrote "GPT-Realtime-2, our first voice model with GPT-5-class reasoning." Multiple outlets report the three models split conversational reasoning, translation, and transcription into discrete orchestration primitives available via OpenAI's Realtime API (Indian Express; VentureBeat; Let's Data Science). Economic Times reports customers testing the models include Zillow, Priceline, and Deutsche Telekom. Economic Times also published pricing: $32 per million audio input tokens for GPT-Realtime-2, $0.034 per minute for GPT-Realtime-Translate, and $0.017 per minute for GPT-Realtime-Whisper. CryptoBriefing reported a crypto-market reaction, stating Bitcoin rose to $122K and Ethereum to $4.3K following the announcement.

Technical details

Sources disagree on the advertised context window size for the reasoning model. Let's Data Science reports that GPT-Realtime-2 supports a 128k token context window; CryptoBriefing reports a 256k token window. VentureBeat and Economic Times describe the launch as separating task types so teams can route transcription, translation, and reasoning to specialized models rather than a single monolithic voice stack. Economic Times describes GPT-Realtime-Translate as translating speech from more than 70 input languages into 13 output languages, and GPT-Realtime-Whisper as a streaming speech-to-text model for live captions and notes.

Editorial analysis - technical context

Models that combine large context windows and live audio must manage latency, memory, and state-tracking tradeoffs. Teams building comparable systems typically weigh end-to-end speech models against cascaded ASR-plus-NLP architectures on latency and cost; the modular approach reported here mirrors industry patterns where separation of concerns (ASR, translation, reasoning) reduces per-call compute and lets operators optimize cost and latency per primitive. Longer context windows permit multi-turn troubleshooting, meeting summarization, and retrieval-augmented dialogue without frequent session resets, but they also raise GPU memory and throughput requirements for realtime inference.

Context and significance

Industry reporting places the release in a broader trend of making agent primitives composable for production voice systems (VentureBeat; CryptoBriefing). For developer platforms, pricing granularity matters: token-based billing for reasoning models and per-minute billing for streaming tasks change cost modeling for applications that mix short commands with long-form conversations. CryptoBriefing's coverage ties the announcement to speculative market moves in AI-adjacent crypto sectors, reporting a one-day price jump in Bitcoin; that reaction reflects recurring market sensitivity to major AI infrastructure updates in 2025-2026 rather than a technical evaluation of voice-model performance.
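To make the cost-modeling point concrete, the sketch below combines the two billing styles using only the prices Economic Times reported. It is an illustration, not OpenAI's billing logic: the function names are hypothetical, the prices are unverified against an official price list, and output-token and text-token charges are left out because the coverage does not report them.

```python
# Prices as reported by Economic Times; treat them as unverified assumptions.
REALTIME2_USD_PER_M_AUDIO_INPUT_TOKENS = 32.0   # token-based billing
TRANSLATE_USD_PER_MINUTE = 0.034                # per-minute billing
WHISPER_USD_PER_MINUTE = 0.017                  # per-minute billing


def reasoning_input_cost(audio_input_tokens: int) -> float:
    """Cost of audio input tokens sent to the reasoning model."""
    return audio_input_tokens / 1_000_000 * REALTIME2_USD_PER_M_AUDIO_INPUT_TOKENS


def streaming_cost(minutes: float, usd_per_minute: float) -> float:
    """Cost of a per-minute streaming task (translation or transcription)."""
    return minutes * usd_per_minute


def session_cost(
    audio_input_tokens: int = 0,
    translate_minutes: float = 0.0,
    transcribe_minutes: float = 0.0,
) -> float:
    """Sum the reported input-side charges for one mixed session."""
    return (
        reasoning_input_cost(audio_input_tokens)
        + streaming_cost(translate_minutes, TRANSLATE_USD_PER_MINUTE)
        + streaming_cost(transcribe_minutes, WHISPER_USD_PER_MINUTE)
    )


if __name__ == "__main__":
    # Hypothetical session: 500k audio input tokens plus 10 minutes of translation.
    print(f"${session_cost(audio_input_tokens=500_000, translate_minutes=10):.2f}")
```

At the reported rates, 500,000 audio input tokens cost $16.00 and ten minutes of translation cost $0.34. How many audio tokens a minute of speech consumes is not stated in the coverage, so the token count stays an explicit input rather than being derived from call duration.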

What to watch

Observers should check OpenAI's published technical specs to resolve the context-window discrepancy across outlets. Monitor third-party latency and cost benchmarks as enterprises integrate these models into call-routing, interpretation, and agent-orchestration pipelines. Watch for independent evaluations of translation accuracy at speaker pace, transcription word-error-rate under noisy conditions, and how orchestration primitives interact with retrieval and tool-calling in live sessions. Finally, track enterprise customer case studies (for example, the firms Economic Times named) to see how real-world deployments balance cost, latency, and context retention.
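For teams planning their own transcription evaluations, word-error-rate is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. The sketch below is a minimal, dependency-free version; it assumes whitespace tokenization and no text normalization (casing, punctuation), which a real benchmark would need to settle first. The function name is illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words matches an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    print(word_error_rate("turn on the kitchen lights", "turn on kitchen light"))
```

Because insertions count as errors, the rate can exceed 1.0 when the hypothesis is much longer than the reference.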

For practitioners

Industry-pattern observations: teams adopting modular realtime primitives usually invest in lighter orchestration layers that handle task assignment, jitter buffering, and fallback ASR; expect integration work around audio framing, speaker diarization, and token-to-audio synchronization. Independent benchmarking and controlled A/B tests will be essential before replacing cascaded or bespoke stacks with these new managed primitives.
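The orchestration layer described above can be quite small. The following sketch shows task assignment and fallback ASR only, under several assumptions: the route table uses the model names from the coverage as plain labels rather than confirmed API identifiers, the backends are hypothetical callables standing in for real client code, and jitter buffering and diarization are out of scope.

```python
from typing import Callable, Sequence

# Labels taken from the reported model names; not confirmed API identifiers.
ROUTES = {
    "reasoning": "GPT-Realtime-2",
    "translation": "GPT-Realtime-Translate",
    "transcription": "GPT-Realtime-Whisper",
}


def pick_model(task: str) -> str:
    """Map a task type to the model that should handle it."""
    try:
        return ROUTES[task]
    except KeyError:
        raise ValueError(f"unknown task type: {task!r}") from None


def transcribe_with_fallback(
    audio: bytes, backends: Sequence[Callable[[bytes], str]]
) -> str:
    """Try each transcription backend in order and return the first success."""
    errors = []
    for backend in backends:
        try:
            return backend(audio)
        except Exception as exc:  # a real layer would catch narrower errors
            errors.append(exc)
    raise RuntimeError(f"all {len(errors)} transcription backends failed")
```

Keeping routing as a plain lookup makes it easy to A/B test a managed primitive against an existing cascaded stack: either one is just another callable in the `backends` sequence.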

Scoring Rationale

This is a significant developer-facing product launch: GPT-5-class reasoning in realtime voice primitives materially lowers engineering friction for multi-turn voice agents. The story matters to practitioners integrating voice into agent stacks but is not a paradigm-shifting frontier release.
