Northwestern and Fermilab Release NEXUS Dataset for NVIDIA Ising Benchmark

Northwestern University and Fermilab released a high-dimensional dataset from the Northwestern EXperimental Underground Site (NEXUS) to support the NVIDIA Ising AI benchmark and an accompanying vision-language agent, NVQCA. The month-long measurement campaign, run 107 meters underground, captured the first reported observations of correlated charge jumps across multiple qubits in a controlled underground environment. The dataset combines real NEXUS traces with synthetic examples to create a standardized benchmark that evaluates models on six diagnostic tasks. The goal is automated interpretation of 2D experimental plots and near-real-time identification of charge-jump and quasiparticle dynamics. Fermilab is providing centralized GPU infrastructure and a lab-wide model router so researchers can run the NVQCA agent through a single endpoint, and the collaboration plans to extend the framework across additional testbeds and sensing programs.

What happened

Northwestern University and Fermilab released a high-dimensional dataset from the Northwestern EXperimental Underground Site (NEXUS) to serve as a primary test case for the NVIDIA Ising benchmark and the vision-language tuning agent NVQCA. The dataset was recorded during a month-long campaign at 107 meters depth and contains the first reported observations of correlated charge jumps across multiple qubits in a low-background underground environment. The collaboration pairs real experimental traces with synthetic data to produce a standardized benchmark for AI-driven quantum hardware diagnostics.

Technical details

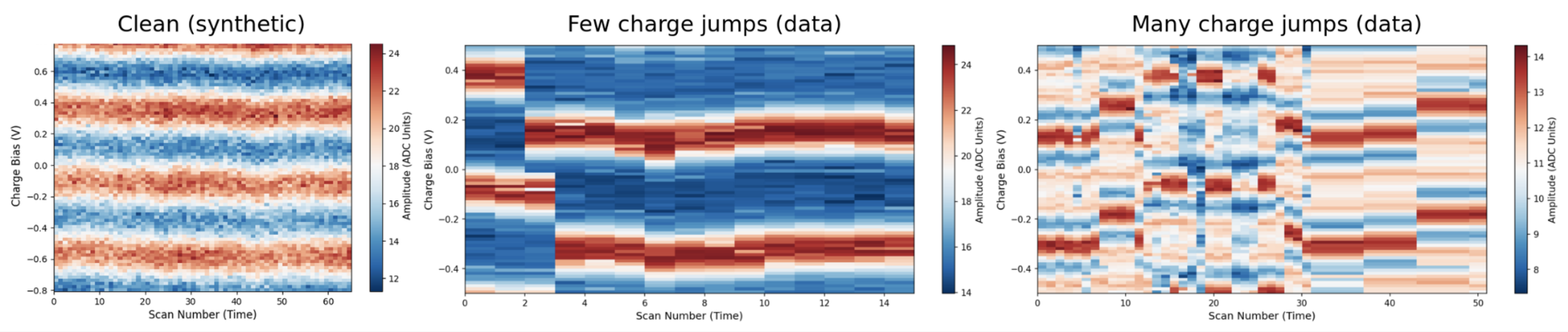

The dataset targets models that interpret 2D experimental plots such as sinusoidal interference fringes and sudden shifts caused by charge jumps. The benchmark evaluates models on six diagnostic tasks:

- technical description
- outcome classification
- physical interpretation
- fit quality assessment
- parameter extraction
- stability judgment
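As a rough illustration of how results across the six tasks might be tallied, the sketch below encodes the task names as string keys and computes per-task and macro-averaged accuracy from pass/fail grades, optionally split by real versus synthetic source. The `GradedItem` record, the snake_case task keys, and the binary grading are hypothetical assumptions. The article does not describe the benchmark's actual schema or scoring rules, and free-text tasks such as technical description would likely need a rubric or judge model rather than a boolean.

```python
from collections import defaultdict
from dataclasses import dataclass

# The six task names from the article; these string keys are our own labels.
TASKS = (
    "technical_description",
    "outcome_classification",
    "physical_interpretation",
    "fit_quality_assessment",
    "parameter_extraction",
    "stability_judgment",
)


@dataclass
class GradedItem:
    """Hypothetical graded benchmark item: one plot, one task, one pass/fail grade."""
    plot_id: str
    task: str
    source: str  # assumed to be "real" (NEXUS) or "synthetic"
    correct: bool


def per_task_accuracy(items, source=None):
    """Return {task: accuracy}, optionally restricted to one data source.

    Tasks with no graded items are omitted rather than reported as 0.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for item in items:
        if item.task not in TASKS:
            raise ValueError(f"unknown task: {item.task}")
        if source is not None and item.source != source:
            continue
        totals[item.task] += 1
        hits[item.task] += int(item.correct)
    return {t: hits[t] / totals[t] for t in TASKS if totals[t]}


def macro_average(scores):
    """Unweighted mean over tasks, so sparsely populated tasks count equally."""
    return sum(scores.values()) / len(scores) if scores else 0.0
```

Reporting the macro average alongside per-task scores, and comparing the `source="real"` and `source="synthetic"` splits, keeps strong results on plentiful synthetic items from masking weaker performance on real traces.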

These tasks measure both visual pattern recognition and domain-specific inference, making the benchmark a vision-language evaluation rather than a pure numeric regression. The NVQCA agent is hosted on Fermilab's centralized GPU cluster and registered with a lab-wide model router, enabling researchers to submit experimental plots through a single endpoint and receive automated diagnostics in near real time. Combining real NEXUS data with synthetic cases improves task coverage and addresses class imbalance for rare events like multi-qubit correlated charge jumps.
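To make the fringe-and-jump picture concrete, here is a toy sketch under a deliberately simplified signal model. Each row of a 2D plot is a sinusoid in gate charge n_g sampled over exactly one period, 0.5 + 0.5·cos(2π(n_g - q0)), and a charge jump is a sudden change in the offset charge q0 between rows. This is not the NEXUS measurement sequence or data format, and the names `synth_fringe`, `row_offset`, `detect_jumps`, and `labeled_examples` are hypothetical. The detector recovers q0 per row from the first Fourier component and flags rows where the wrapped change in q0 exceeds a threshold. `labeled_examples` shows one way synthetic plots can oversample the rare jump class.

```python
import math
import random


def synth_fringe(n_times, n_gate, jump_at=None, jump_size=0.0, noise=0.02, seed=0):
    """Toy 2D plot: rows are time steps, columns span one period of gate charge.

    The offset charge q0 (in units of one fringe period) steps by `jump_size`
    at row `jump_at`, mimicking a charge jump. Gaussian noise is added per point.
    """
    rng = random.Random(seed)
    q_base = rng.random()
    rows = []
    for t in range(n_times):
        q0 = q_base + (jump_size if jump_at is not None and t >= jump_at else 0.0)
        rows.append([
            0.5 + 0.5 * math.cos(2 * math.pi * (k / n_gate - q0)) + rng.gauss(0.0, noise)
            for k in range(n_gate)
        ])
    return rows


def row_offset(row):
    """Estimate q0 (mod 1) from the first Fourier component of one full-period row."""
    n = len(row)
    c = sum(v * math.cos(2 * math.pi * k / n) for k, v in enumerate(row))
    s = sum(v * math.sin(2 * math.pi * k / n) for k, v in enumerate(row))
    return (math.atan2(s, c) / (2 * math.pi)) % 1.0


def detect_jumps(rows, threshold=0.1):
    """Return (row_index, delta_q0) wherever the wrapped offset change exceeds threshold."""
    offsets = [row_offset(r) for r in rows]
    jumps = []
    for t in range(1, len(offsets)):
        delta = (offsets[t] - offsets[t - 1] + 0.5) % 1.0 - 0.5  # wrap into [-0.5, 0.5)
        if abs(delta) > threshold:
            jumps.append((t, delta))
    return jumps


def labeled_examples(n, jump_fraction, seed=0):
    """Generate (plot, has_jump) pairs with roughly `jump_fraction` jump examples."""
    rng = random.Random(seed)
    examples = []
    for _ in range(n):
        has_jump = rng.random() < jump_fraction
        rows = synth_fringe(
            n_times=40,
            n_gate=32,
            jump_at=rng.randrange(5, 35) if has_jump else None,
            # Jump magnitudes are kept well above the detector threshold.
            jump_size=rng.choice((-1, 1)) * rng.uniform(0.15, 0.45),
            seed=rng.randrange(2**31),
        )
        examples.append((rows, has_jump))
    return examples
```

For instance, `detect_jumps(synth_fringe(50, 32, jump_at=20, jump_size=0.3, seed=1))` should report a single jump at row 20 with a change close to 0.3. Raising `jump_fraction` well above the natural rate of real jump events is the simplest lever for countering class imbalance, at the cost of a train/test distribution shift that real data must later correct.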

Context and significance

This release formalizes a benchmark and dataset at the intersection of quantum hardware and AI-driven lab automation. Vision-language models that can read plots and explain hardware states are emerging as practical tools for calibration, drift detection, and failure diagnosis. The NEXUS dataset is notable because it provides real-world, low-background measurements from an underground facility. The rock overburden suppresses the cosmic-ray background that confounds surface experiments, which helps expose correlated noise modes that matter for superconducting qubits and error mitigation. Standardizing evaluation around the NVIDIA Ising benchmark and NVQCA makes results comparable across model architectures, training regimes, and synthetic-data strategies.
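Identifying correlated jumps across qubits can be framed as a coincidence search. A minimal sketch, assuming each qubit already has a list of jump timestamps (for example from a per-row detector) and that "correlated" means at least `min_qubits` distinct qubits jumping within a fixed window: sort all events by time, group them greedily, and keep the groups that span enough qubits. The function name, window semantics, and threshold are illustrative assumptions, not the collaboration's analysis. A real analysis would also compare the observed coincidence count against the rate expected by chance from independent jumps.

```python
def correlated_events(jump_times, window, min_qubits=2):
    """Group jumps that fall within `window` of a group's first event.

    jump_times maps a qubit label to a list of jump timestamps (any consistent unit).
    Returns (group_start_time, sorted_qubit_labels) for each group whose events
    come from at least `min_qubits` distinct qubits.
    """
    events = sorted((t, q) for q, times in jump_times.items() for t in times)
    groups = []
    i = 0
    while i < len(events):
        start = events[i][0]
        qubits = set()
        j = i
        # Greedily absorb every event inside the window that opens at `start`.
        while j < len(events) and events[j][0] - start <= window:
            qubits.add(events[j][1])
            j += 1
        if len(qubits) >= min_qubits:
            groups.append((start, sorted(qubits)))
        i = j
    return groups
```

Start-anchored greedy windows are simple and linear after sorting, but they can split a burst that straddles a window edge; sliding windows or linkage-based clustering are common alternatives.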

Why practitioners should care

Automated plot interpretation shortens the calibration loop for quantum processors and reduces manual expert bottlenecks. The benchmark's multi-task structure forces models to combine visual feature extraction with physics-aware reasoning, a useful proxy for other experimental sciences. The availability of production-grade GPU hosting and a model router demonstrates a deployment path from research benchmark to lab-integrated tooling.

What to watch

Expect the dataset and benchmark to expand as the collaboration integrates additional testbeds under CosmiQ and LOUD programs and as groups contribute further synthetic scenarios. Key follow-ups will include open comparative results from alternative VLMs, detailed benchmark metrics on failure modes for correlated charge events, and research on domain adaptation for models trained on mixed synthetic and real experimental data.

Scoring Rationale

The dataset and benchmark are a notable, domain-specific advance: they provide rare, low-background quantum hardware data and a structured VLM evaluation. The scope is specialized to quantum lab automation, so the impact is significant for researchers in quantum hardware and AI-for-science but less disruptive across the broader ML industry.
