Meta signs multibillion-dollar Graviton deal with AWS

Meta Platforms has signed a multiyear, multibillion-dollar agreement to deploy tens of millions of AWS Graviton cores, according to Reuters, the Wall Street Journal, and blog posts from Meta and AWS. The deployment begins with "tens of millions of Graviton cores" and can expand from there, per Meta's post and AWS statements. Nafea Bshara, vice president and distinguished engineer at AWS, told Reuters the agreement spans multiple years and is worth billions of dollars. The deal centers on Graviton5, AWS's 192-core, 3-nanometer CPU, which AWS and Meta describe as optimized for CPU-intensive "agentic AI" workloads; AWS highlights an L3 cache five times larger than the prior generation's. Industry reporting frames the move as part of Meta's broader infrastructure diversification, alongside prior deals with GPU vendors and other cloud partners.

What happened

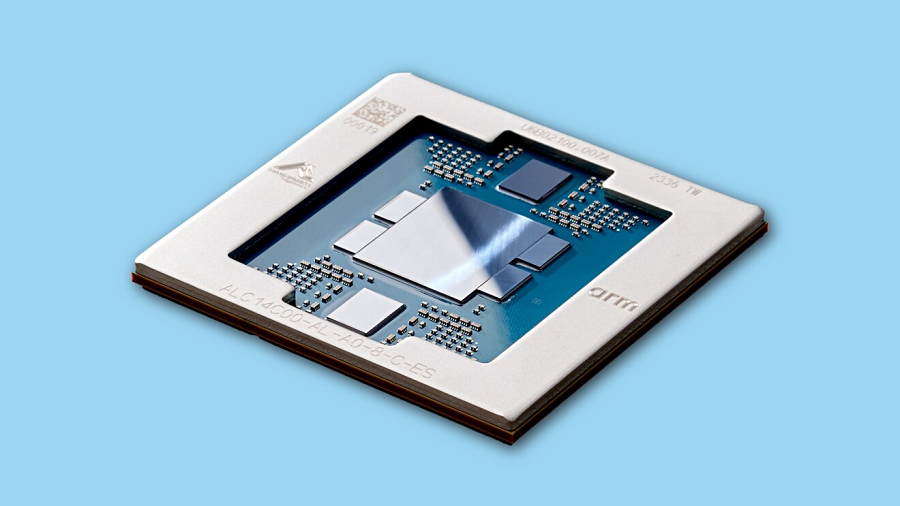

Meta Platforms and Amazon Web Services have agreed to a multiyear, multibillion-dollar deal under which Meta will deploy "tens of millions" of AWS Graviton cores, with the option to expand that deployment (Reuters; WSJ; Meta and AWS blog posts). Per the AWS and Meta announcements, the agreement centers on Graviton5, a 192-core CPU manufactured on a 3-nanometer process (AWS; SiliconANGLE; Meta blog). Nafea Bshara, vice president and distinguished engineer at AWS, told Reuters the contract spans multiple years and is worth billions of dollars. Meta's public post and Reuters' reporting state that the deployment will support agentic AI workloads and broader AI infrastructure needs (Meta blog; Reuters).

Technical details

Per AWS and trade reporting, Graviton5 implements the Arm instruction set architecture and integrates with the AWS Nitro System. AWS materials cite a 5x larger L3 cache than the prior Graviton generation and claim a roughly 25% single-socket performance improvement over it; both figures are published by AWS and repeated in trade coverage (AWS; SiliconANGLE). The announcements position Graviton5 for CPU-intensive tasks in production AI stacks: coordinating GPU-backed model inference, powering tools used by agentic systems, and handling high-concurrency, real-time workloads that do not require raw GPU matrix throughput (Meta blog; AboutAmazon; Reuters).

Industry context

Editorial analysis: Companies building large-scale AI infrastructure are increasingly combining specialized GPU fleets for training with purpose-built CPU capacity for post-training workloads and orchestration. Public reporting notes Meta's deal follows other large chip and cloud commitments this year and complements existing agreements with GPU vendors and other cloud providers (WSJ; Reuters). The broader CPU market has seen renewed demand as AI workloads diversify from pure training to latency-sensitive, agentic services that rely on many concurrent CPU threads.

Context and significance

Editorial analysis: For AI practitioners, the deal underlines two trends. First, production AI at massive scale requires heterogeneous compute, not just GPUs. Second, cloud providers and hyperscalers are packaging vertically integrated silicon and systems (CPU designs plus Nitro-style accelerators and isolation engines) to improve price-performance and operational density. The public statements from AWS emphasize cost-efficiency and energy performance, while Meta's blog frames the move as part of a diversified compute portfolio.

What to watch

Editorial analysis: Observers should track three indicators:

- How many Graviton cores become available regionally, since the WSJ reports most will be located in the U.S.

- Whether Meta routes specific production services or agentic workloads onto Graviton-backed clusters versus GPU-backed clusters.

- Pricing and contractual terms as they influence enterprise cloud compute procurement.

Market signals such as pricing changes, performance benchmarks on common inference workloads, and subsequent announcements from other large AI users will clarify whether this deal is an outlier or a broader industry shift.

Bottom line for practitioners

Editorial analysis: Reporting on a Graviton deployment of this scale underscores that CPUs remain strategically important in end-to-end AI systems. Teams responsible for production inference, system orchestration, and agent workflows should follow architecture guidance and cost-performance data for Arm-based, high-core-count CPU nodes as an alternative or complement to GPU-centric deployments.

Scoring Rationale

This is a notable infrastructure deal between two hyperscalers that validates high-volume, CPU-centric compute for production AI services. It matters to practitioners planning heterogeneous deployments and procurement, though it is not a frontier-model release.
