Log2Motion Reveals Hidden Muscle Strain During Smartphone Use

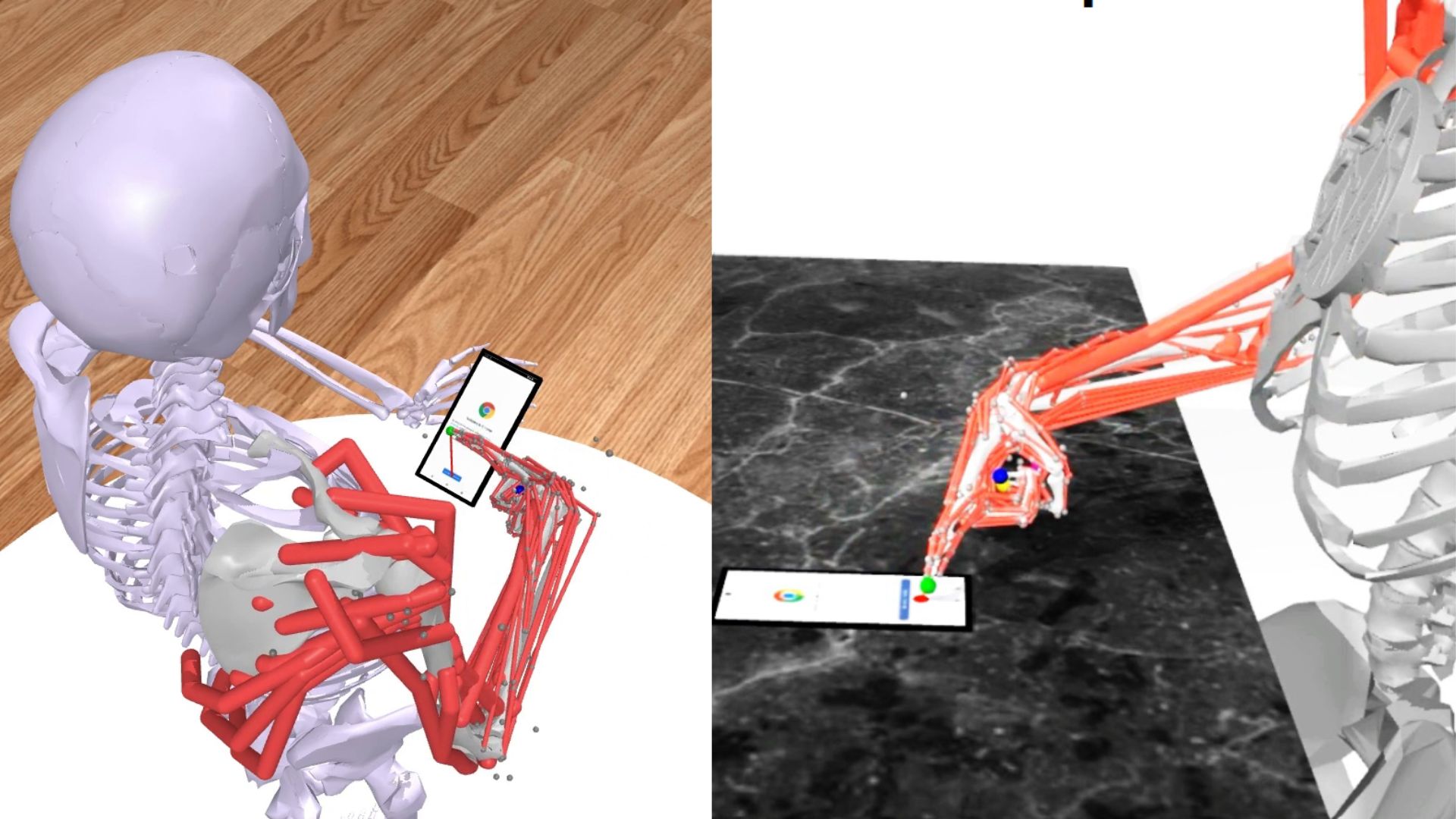

Researchers at Aalto University and Leipzig University developed `Log2Motion`, an AI-driven simulator that converts raw touch logs into full-body motion and muscle-effort estimates. The system uses a musculoskeletal model, reinforcement learning, a physics engine, and a software emulator to replay realistic finger and hand motions while interacting with real mobile apps in real time. Validation with motion-capture data shows the tool can estimate muscle activity, movement accuracy, and energy use, revealing that certain gestures, small icons, and corner interactions impose higher physical effort. Log2Motion offers a practical new lens for UX designers and ergonomics researchers to quantify physical fatigue from everyday smartphone use and to iterate UI layouts and interaction patterns with physiological cost in mind.

What happened

Researchers from Aalto University and Leipzig University released `Log2Motion`, an AI-driven pipeline that transforms simple smartphone touch logs into detailed motion simulations that estimate muscle activity, movement precision, and energy use during interaction. The system couples a digital musculoskeletal model with reinforcement learning, a physics engine, and a software emulator that runs in real time, recreating realistic finger, hand, and arm movements while interacting with actual mobile apps. Validation against motion-capture data supports these estimates, and the simulator highlights which gestures and screen regions require more physical effort.

Technical details

The core stack combines a biomechanical model and learned control policies to map low-dimensional touch events to high-dimensional motion trajectories. Key technical elements include:

- a musculoskeletal model representing bones, joints, and muscles, used for inverse dynamics and effort estimation

- reinforcement learning policies trained to produce realistic trajectories matching touch targets and timing

- a software emulator integrated with a physics engine to replay interactions in real time against real app layouts

- validation using motion-capture recordings from human participants to calibrate and evaluate accuracy
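To make the effort-estimation step concrete, here is a minimal sketch of one common proxy from musculoskeletal simulation: the sum of squared muscle activations integrated over time. The source does not specify which effort metric `Log2Motion` uses, so the function name `effort_proxy`, the activation layout, and the sample traces are all assumptions for illustration only.

```python
from typing import Sequence


def effort_proxy(activations: Sequence[Sequence[float]], dt: float) -> float:
    """Integrate the sum of squared muscle activations over time.

    activations[t][m] is the activation of muscle m at time step t,
    assumed to lie in [0, 1]. dt is the simulation time step in seconds.
    This is a generic proxy, not the metric confirmed for Log2Motion.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    total = 0.0
    for frame in activations:
        # Squaring penalizes high activations more than low ones.
        total += sum(a * a for a in frame) * dt
    return total


# Hypothetical three-step traces for two muscles (made-up values).
relaxed = [[0.1, 0.1], [0.1, 0.2], [0.1, 0.1]]
strained = [[0.5, 0.4], [0.6, 0.5], [0.5, 0.4]]

print(effort_proxy(relaxed, dt=0.01) < effort_proxy(strained, dt=0.01))
```

Under this proxy, a gesture that holds muscles at higher activation for the same duration scores as more costly, which is the kind of comparison the article describes between easy and hard-to-reach targets.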

Context and significance

Designers and UX researchers typically rely on tap coordinates, gesture frequency, and subjective reports. `Log2Motion` fills a persistent blind spot by quantifying the physiological cost of interactions, enabling ergonomics-aware UI decisions. This matters for mobile-first product teams, accessibility engineers, and wearable-device designers who need objective measures of user fatigue. The method also bridges HCI and biomechanical modeling, offering a reproducible way to generate synthetic motion data when in-person studies are impractical.

What to watch

Adoption depends on toolchain integration and privacy constraints around touch telemetry. Look for follow-on work on cross-device generalization, per-user calibration, and developer-facing APIs that embed effort metrics into A/B testing and automated UI linting.
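As a rough illustration of what an effort-aware UI lint might look like, the sketch below averages per-touch effort scores over a coarse screen grid and flags cells above a threshold. Everything here is hypothetical: `Log2Motion` is not known to expose such an API, and the function names, the 3x3 grid, the sample values, and the threshold are assumptions chosen for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Cell = Tuple[int, int]  # (column, row) index in the screen grid


def mean_effort_by_cell(
    samples: Iterable[Tuple[float, float, float]],
    width: float,
    height: float,
    cols: int = 3,
    rows: int = 3,
) -> Dict[Cell, float]:
    """Average effort per grid cell from (x, y, effort) samples in pixels."""
    sums: Dict[Cell, float] = defaultdict(float)
    counts: Dict[Cell, int] = defaultdict(int)
    for x, y, effort in samples:
        # Clamp so touches on or beyond the screen edge land in a border cell.
        col = max(0, min(int(x / width * cols), cols - 1))
        row = max(0, min(int(y / height * rows), rows - 1))
        sums[(col, row)] += effort
        counts[(col, row)] += 1
    return {cell: sums[cell] / counts[cell] for cell in sums}


def flag_cells(means: Dict[Cell, float], threshold: float) -> List[Cell]:
    """Return the cells whose mean effort exceeds the threshold, sorted."""
    return sorted(cell for cell, mean in means.items() if mean > threshold)


# Made-up effort scores on a 1080x1920 screen: two taps near the
# top-left corner and one near the bottom-right corner.
samples = [(10, 10, 0.2), (20, 20, 0.4), (1070, 1910, 0.9)]
means = mean_effort_by_cell(samples, width=1080, height=1920)
print(flag_cells(means, threshold=0.5))
```

A check like this could run alongside A/B tests, though the appropriate grid size and threshold would depend on per-user calibration that the article lists as open work.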

Scoring Rationale

This is a notable technical advance linking touch telemetry to biomechanical cost, useful for HCI researchers and product teams. It is not a frontier AI breakthrough, but it provides practical tooling for ergonomics and UX, earning a mid-high score.
