High-Density AI Forces Rack-Level Power Reckoning

AI-driven compute is pushing rack power well beyond legacy assumptions, exposing nontrivial losses from repeated AC-to-DC conversions. Modern servers, GPUs, and storage all run on direct current, but most data centers still distribute high-voltage AC. At the rack, multiple conversion stages (building AC to UPS, UPS to PDU, PDU to server power supplies and VRMs) compound inefficiencies and waste energy as heat. The result is higher operating costs, heavier cooling burdens, and practical limits on achievable density. Operators are evaluating alternatives including bulk rectification to 400V DC buses, higher-voltage DC distribution, and cooling architectures such as immersion to break the thermal bottleneck. These choices require tradeoffs in safety, standards, capital expense, and operations, and will shape how hyperscalers and enterprises design next-generation AI clusters.

What happened

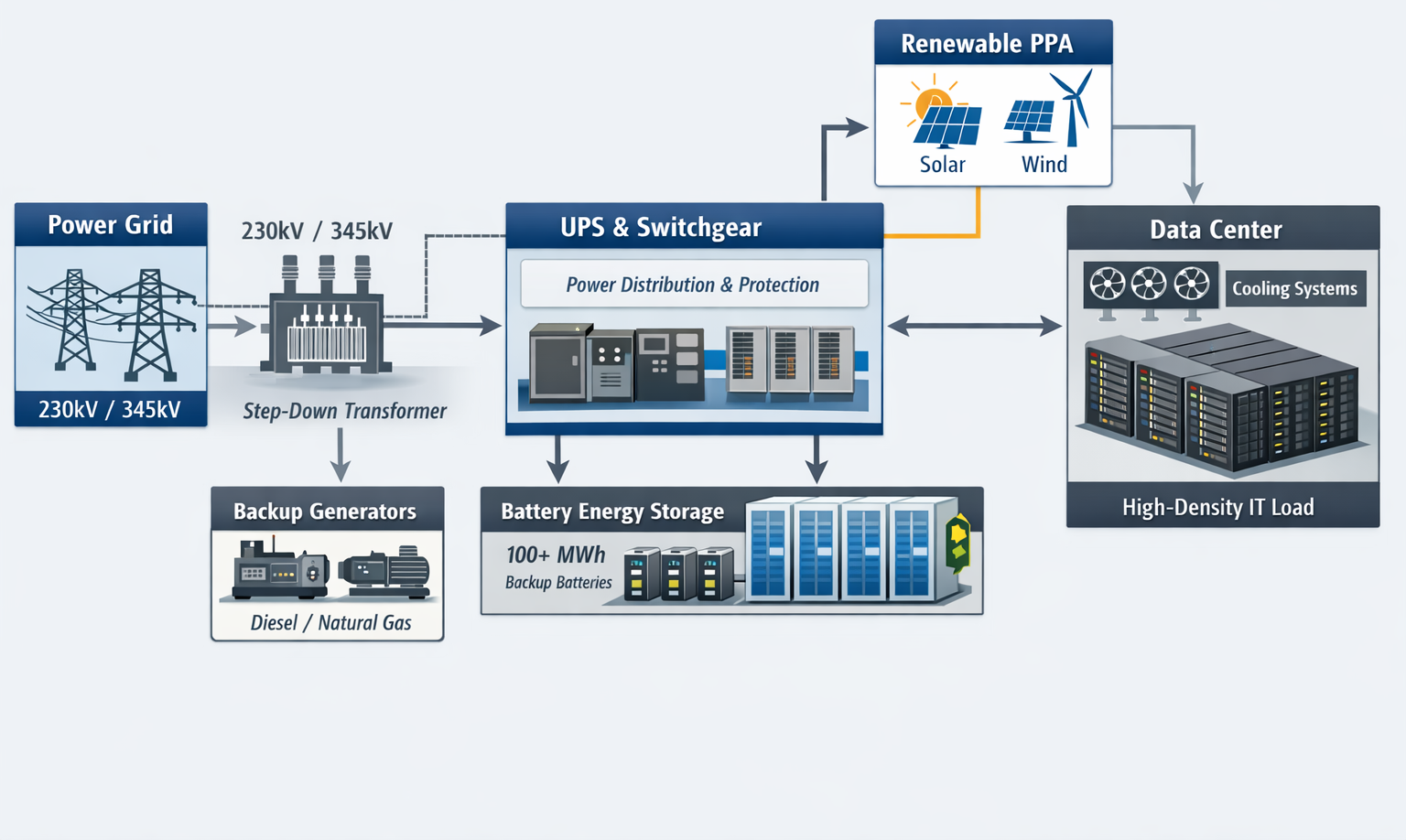

The data center industry is confronting a rack-level power crisis driven by AI. The problem is conversion loss and thermal management as rack power densities reach new highs. POWER magazine highlights that every component that matters for AI workloads, including GPUs and CPUs, requires direct-current power, yet facilities still send high-voltage alternating current to racks. Multiple AC-to-DC conversions inside the power chain stop being negligible when racks consume tens of kilowatts, increasing waste heat and operational cost.

Technical details

The core inefficiency comes from stacked conversion stages, and the math scales with density. Conversions occur at the building feed, UPS, PDU, server power supplies, and voltage-regulator modules. Operators report practical rack densities from 30 kW to 100 kW and higher on AI clusters, which magnify losses and cooling load. Practical mitigation paths under active evaluation include:

- Bulk rectification to a facility DC bus, for example 400V DC, to remove repeated AC-to-DC stages

- Higher-voltage DC distribution to reduce I²R losses in cabling and connectors

- Power-supply architecture changes, including direct-to-rack rectifiers and distributed power electronics

- Cooling shifts, especially rear-door heat exchangers and liquid or immersion cooling, to remove the thermal constraint limiting further power increases
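To make the stacked-conversion and I²R arguments concrete, here is a minimal Python sketch. The per-stage efficiencies, the cable resistance, and all function names are illustrative assumptions, not figures from POWER magazine or any vendor. The sketch only shows how stage efficiencies multiply and how resistive loss falls with the square of distribution voltage for a fixed load.

```python
from math import prod


def chain_efficiency(stage_efficiencies):
    """Overall efficiency of conversion stages in series (product of the stages)."""
    return prod(stage_efficiencies)


def input_power_kw(delivered_kw, stage_efficiencies):
    """Power drawn upstream to deliver `delivered_kw` at the end of the chain."""
    return delivered_kw / chain_efficiency(stage_efficiencies)


def cable_loss_w(load_w, volts, resistance_ohm):
    """Resistive (I^2 * R) loss in a DC feed carrying `load_w` at `volts`."""
    current_a = load_w / volts
    return current_a ** 2 * resistance_ohm


# Hypothetical per-stage efficiencies: UPS, PDU, server PSU, VRM.
ASSUMED_LEGACY_CHAIN = [0.96, 0.98, 0.94, 0.90]

if __name__ == "__main__":
    delivered_kw = 100.0  # assumed power reaching the silicon
    drawn_kw = input_power_kw(delivered_kw, ASSUMED_LEGACY_CHAIN)
    print(f"chain efficiency: {chain_efficiency(ASSUMED_LEGACY_CHAIN):.3f}")
    print(f"heat from conversion: {drawn_kw - delivered_kw:.1f} kW")

    # Same load and assumed 10 milliohm feed at two bus voltages.
    for volts in (400, 800):
        print(f"{volts} V bus: {cable_loss_w(40_000, volts, 0.010):.0f} W cable loss")
```

Under these assumptions, doubling the bus voltage halves the current and cuts cable loss to a quarter, which is the motivation behind the higher-voltage DC option in the list above.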

Context and significance

This is not a niche operational annoyance; it is an architectural inflection point. Hyperscalers already pilot DC-distribution and immersion designs to squeeze efficiency and density gains. For enterprises and colocation operators the decision ties into capital planning: upgrading transformers, switchgear, and floor PDUs is costly, and standards, safety rules, and interconnection agreements lag behind practice. Reduced conversion loss translates directly into lower PUE and operating expense, which matters when AI clusters run 24/7 and power is a primary cost driver.
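As a rough illustration of why conversion loss matters for a 24/7 load, the following Python sketch estimates the yearly cost of energy lost in the power chain. The efficiencies, electricity price, and function names are hypothetical placeholders, and the calculation ignores the extra cooling energy needed to remove that heat, so it likely understates the real effect.

```python
HOURS_PER_YEAR = 24 * 365  # continuous operation, non-leap year


def annual_loss_cost(it_load_kw, chain_efficiency, price_per_kwh):
    """Yearly cost of energy dissipated in conversion for a constant IT load."""
    input_kw = it_load_kw / chain_efficiency
    loss_kw = input_kw - it_load_kw
    return loss_kw * HOURS_PER_YEAR * price_per_kwh


def annual_savings(it_load_kw, eff_before, eff_after, price_per_kwh):
    """Yearly saving from raising end-to-end chain efficiency."""
    return (annual_loss_cost(it_load_kw, eff_before, price_per_kwh)
            - annual_loss_cost(it_load_kw, eff_after, price_per_kwh))


if __name__ == "__main__":
    # Assumed values: one 100 kW rack, chain efficiency raised from 0.80 to 0.90,
    # electricity at 0.10 currency units per kWh.
    saving = annual_savings(100.0, 0.80, 0.90, 0.10)
    print(f"estimated saving per rack per year: {saving:,.0f}")
```

Multiplying the per-rack figure by the number of racks in a cluster gives a first-order sense of the operating-expense stake, before any capital cost of new rectifiers or busbars is considered.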

What to watch

Expect pilots from hyperscalers to set practical baselines, vendor ecosystems to ship rack-level rectifiers and busbar solutions, and a push on standards and safety guidance. The tradeoffs are clear: lower operating cost and higher density versus higher up-front engineering, compliance work, and new operational practices.

Scoring Rationale

This is a notable infrastructure story with practical implications for data center operators and ML deployments. It signals an architectural shift with measurable OPEX benefits, but it is not yet a universal standard, so it rates as important but not industry-shaking.
