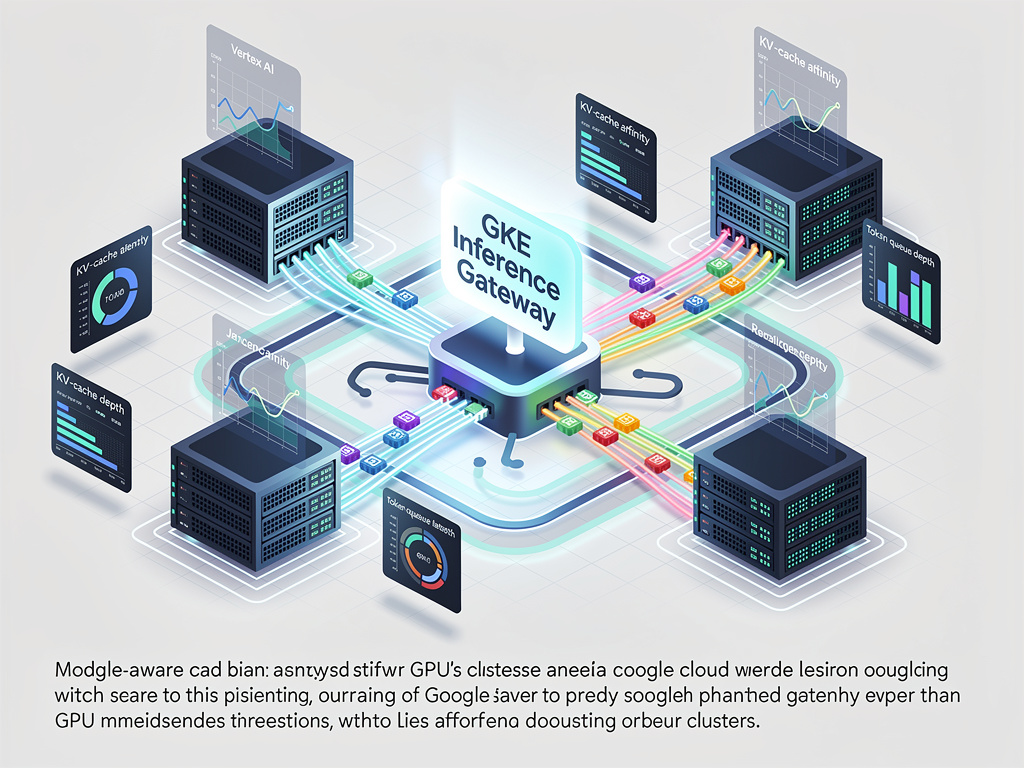

Google Cloud Optimizes Vertex AI Inference Routing

Google Cloud recently integrated a model-aware GKE Inference Gateway into Vertex AI’s serving stack to optimize LLM inference routing. The gateway inspects each request's estimated cost along with backend metrics, so that expensive requests do not stall cheaper ones queued behind them (head-of-line blocking). This lowers P95/P99 latency and improves GPU utilization across thousands of accelerators. The result is lower tail latency for real-time applications and lower per-query infrastructure cost, which supports broader deployment across Vertex AI’s production serving fleet.
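To make the routing idea concrete, here is a minimal sketch of cost-aware backend selection. It is not the gateway's actual algorithm, which the summary does not describe. The names `Backend`, `estimate_cost`, `pick_backend`, and `route`, the token-count cost proxy, and the 0.9 cache threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    """Hypothetical view of one model replica's reported metrics."""
    name: str
    queued_tokens: int    # estimated tokens of work already assigned
    kv_cache_util: float  # fraction of KV cache in use, 0.0 to 1.0


def estimate_cost(prompt_tokens: int, max_output_tokens: int) -> int:
    # Assumed cost proxy: the most tokens the request could process.
    return prompt_tokens + max_output_tokens


def pick_backend(backends: list[Backend], cache_limit: float = 0.9) -> Backend:
    # Prefer replicas with KV-cache headroom; if none qualify, consider all.
    candidates = [b for b in backends if b.kv_cache_util < cache_limit] or backends
    # Pick the replica with the least outstanding work, measured in tokens
    # rather than in request count.
    return min(candidates, key=lambda b: b.queued_tokens)


def route(backends: list[Backend], prompt_tokens: int, max_output_tokens: int) -> str:
    chosen = pick_backend(backends)
    # Charge the request's estimated cost to the chosen replica so that a
    # long request makes it less attractive for the requests that follow.
    chosen.queued_tokens += estimate_cost(prompt_tokens, max_output_tokens)
    return chosen.name


fleet = [Backend("a", 0, 0.2), Backend("b", 0, 0.3)]
print(route(fleet, 4000, 1000))  # long request
print(route(fleet, 50, 50))      # short request
print(route(fleet, 50, 50))      # short request
```

With two idle replicas, the long request lands on `a` and both short requests then go to `b`. A request-count round-robin would have sent the third request back to `a`, where it would wait behind the long one.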

Scoring Rationale

The item scores high on industry relevance and practical engineering detail, but its novelty is incremental relative to existing inference-optimization efforts.
