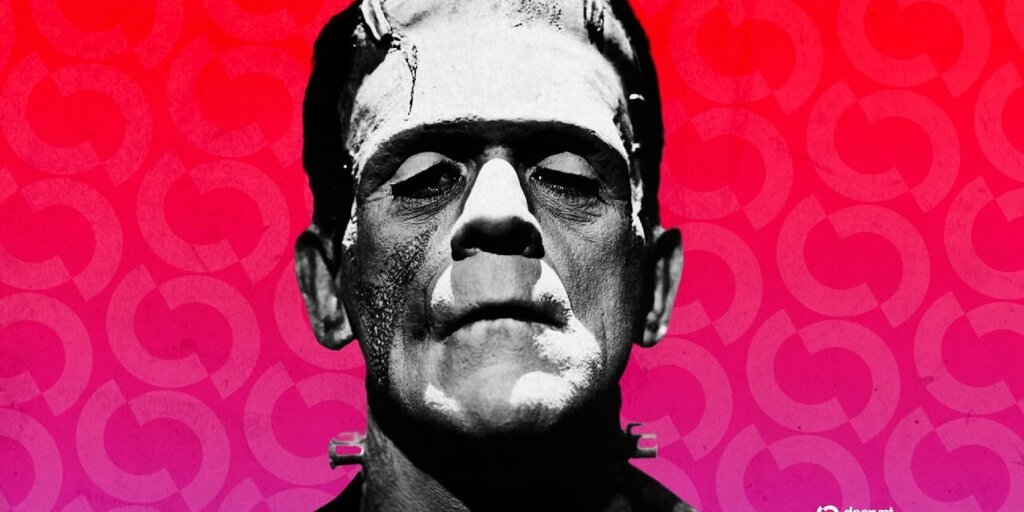

Engineer Merges Claude Opus, GLM and Qwen Into Superior Model

Kyle Hessling, an AI infrastructure engineer, created an 18 billion parameter frankenmerge by stacking two community finetunes derived from Qwen 3.5: the Opus-distilled Qwopus 3.5-9B-v3.5 on layers 0-31 and the GLM-distilled Qwen 3.5-9B-GLM5.1-Distill-v1 on layers 32-63. Built with a passthrough layer-stack technique followed by a QLoRA-style "heal" fine-tune, the merged 64-layer model passes 40 out of 44 capability tests, runs in Q4_K_M quantization at 9.2 GB of VRAM, and reportedly outperforms Alibaba's Qwen 3.6-35B-A3B on those tests. The work required a custom merge script to handle Qwen 3.5's hybrid attention, and the final model can run on commodity GPUs such as an NVIDIA RTX 3060, making strong reasoning performance more accessible to practitioners.

What happened

Kyle Hessling built a community frankenmerge that stacks the layers of two Qwen 3.5-derived finetunes into a single 18 billion parameter model, which reportedly outperforms the larger Qwen 3.6-35B-A3B on a set of 44 capability tests.

Technical details

Hessling took layers 0-31 from Qwopus 3.5-9B-v3.5, which distills Claude Opus reasoning into a Qwen base, and layers 32-63 from Qwen 3.5-9B-GLM5.1-Distill-v1, trained with supervision from GLM-5.1. The direct passthrough merge needed a custom script to deal with Qwen 3.5's hybrid linear/full attention architecture, and a final heal fine-tune using a QLoRA-style approach fixed output corruption and improved robustness.
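The layer-stacking step described above can be illustrated with a minimal sketch. It is not Hessling's script: it assumes each donor contributes 32 decoder layers, that weights are named with a `model.layers.<index>.` prefix (a common Hugging Face convention, assumed here), and that the embeddings come from the bottom donor while the final norm and output head come from the top donor. It also ignores the hybrid linear/full attention handling that the real merge needed. The function name `stack_passthrough` and the toy weight names are hypothetical.

```python
import re

# Matches keys such as "model.layers.7.mlp.weight" (assumed naming scheme).
LAYER_KEY = re.compile(r"^(model\.layers\.)(\d+)(\..+)$")
EMBED_PREFIX = "model.embed_tokens."


def stack_passthrough(bottom, top, layers_per_donor=32):
    """Stack two donors' decoder layers without modifying any weights.

    bottom and top map parameter names to tensors (any object works here).
    Bottom layers keep their indices; top layers are shifted upward by
    layers_per_donor so they sit after the bottom stack.
    """
    merged = {}
    for name, tensor in bottom.items():
        # Assumption: take embeddings and all decoder layers from the bottom donor.
        if LAYER_KEY.match(name) or name.startswith(EMBED_PREFIX):
            merged[name] = tensor
    for name, tensor in top.items():
        match = LAYER_KEY.match(name)
        if match:
            prefix, index, suffix = match.groups()
            merged[f"{prefix}{int(index) + layers_per_donor}{suffix}"] = tensor
        elif not name.startswith(EMBED_PREFIX):
            # Assumption: final norm and output head come from the top donor.
            merged[name] = tensor
    return merged


# Toy donors with 2 layers each; strings stand in for real tensors.
bottom = {
    "model.embed_tokens.weight": "A-embed",
    "model.layers.0.mlp.weight": "A0",
    "model.layers.1.mlp.weight": "A1",
    "model.norm.weight": "A-norm",
}
top = {
    "model.embed_tokens.weight": "B-embed",
    "model.layers.0.mlp.weight": "B0",
    "model.layers.1.mlp.weight": "B1",
    "model.norm.weight": "B-norm",
}
merged = stack_passthrough(bottom, top, layers_per_donor=2)
```

With the toy inputs, `merged` holds four decoder layers: indices 0-1 from the bottom donor and indices 2-3 from the top donor. Because no weights are blended, the seam between the two stacks is left untouched, which is the kind of mismatch a heal fine-tune is meant to smooth over.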

Results

The merged model passes 40 out of 44 capability tests, runs in Q4_K_M quantization at 9.2 GB of VRAM, and reportedly beats Qwen 3.6-35B-A3B in those evaluations while fitting on consumer GPUs like an NVIDIA RTX 3060.
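As a back-of-the-envelope check on the reported figures, the pass rate and the implied storage per parameter can be computed directly. This sketch assumes that 1 GB means 10^9 bytes, that the nominal 18 billion parameter count is exact, and that the 9.2 GB figure is dominated by model weights; the reported number may also include runtime overhead, so the result is only a rough estimate.

```python
# Figures as reported in the article.
passed, total = 40, 44
params = 18e9        # nominal parameter count (assumed exact)
vram_bytes = 9.2e9   # 9.2 GB, assuming 1 GB = 10**9 bytes

pass_rate = passed / total
bits_per_param = vram_bytes * 8 / params

print(f"pass rate: {pass_rate:.1%}")              # pass rate: 90.9%
print(f"bits per parameter: {bits_per_param:.2f}")  # bits per parameter: 4.09
```

Roughly 4 bits per parameter is in line with what a 4-bit quantization format would be expected to need.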

Scoring Rationale

This is a notable community engineering result that demonstrates a practical technique for combining finetuned behaviors and packing strong reasoning capability into a smaller, GPU-friendly model. It is not a new foundation model release, but it has useful implications for practitioners and open-source tooling.
