Data Annotation Services Accelerate AI Model Development

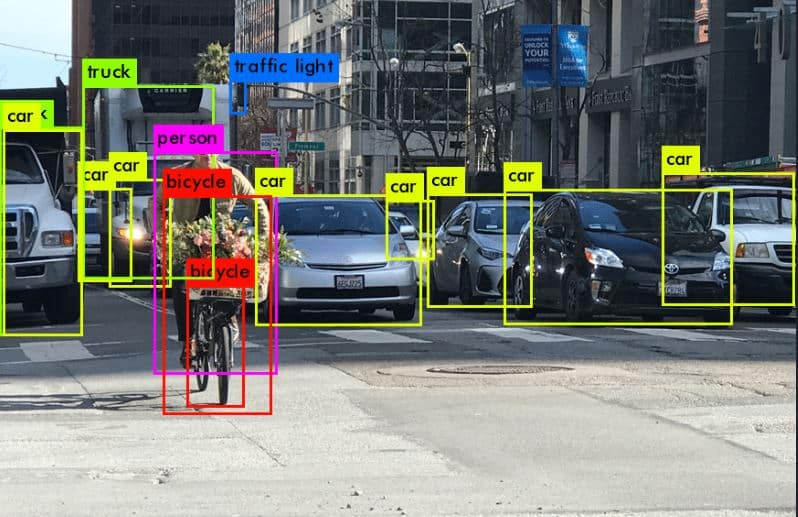

High-quality labeled data is the most common bottleneck in practical AI projects. Data annotation services remove that bottleneck by providing scalable, expert labeling, reducing rework and time-to-iteration for enterprise ML teams. Common failure modes include incorrect labels, slow manual workflows, and poor quality control that hide true model problems and force repeated dataset revisions. Effective annotation programs combine tooling, clear schema design, quality assurance, and human review to deliver reliable training datasets for vision, NLP, audio, and multimodal tasks. For practitioners, the takeaway is operational: invest in annotation design, data QA pipelines, and vendor selection early to preserve momentum and maximize model accuracy and deployment speed.

What happened

Data annotation services are presented as the practical lever that removes the primary bottleneck in enterprise AI, enabling teams to stop waiting for labeled data and start iterating models faster. The piece highlights research from 2025 showing better model accuracy when training datasets are high quality and outlines common annotation pitfalls that slow innovation.

Technical details

Incorrect labels, manual throughput limits, and poor QA are the core causes of slowdown. Annotation programs that scale adopt three technical pillars: robust labeling schemas, automated and statistical quality checks, and clearly orchestrated human review. Key capabilities that matter to practitioners include:

- Annotation schema versioning and clear documentation to avoid ambiguous labels

- Automated consensus and gold-standard sampling to measure labeler accuracy

- Tooling for batch labeling, accelerated workflows, and integration with model training pipelines

- Role-based workflows for labelers, reviewers, and subject-matter experts to reduce rework
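To make the consensus and gold-standard checks concrete, here is a minimal Python sketch. It assumes annotations arrive as plain dictionaries keyed by labeler and item ID; the function names (`majority_vote`, `labeler_accuracy`) and the sample data are hypothetical and not taken from any particular annotation platform.

```python
from collections import Counter


def majority_vote(labels):
    """Return (consensus_label, agreement) for one item.

    consensus_label is None when the top two labels are tied,
    which flags the item for human review.
    """
    counts = Counter(labels).most_common()
    top_label, top_count = counts[0]
    agreement = top_count / len(labels)
    if len(counts) > 1 and counts[1][1] == top_count:
        return None, agreement
    return top_label, agreement


def labeler_accuracy(annotations, gold):
    """Score each labeler on the items that have a gold-standard label.

    annotations: {labeler: {item_id: label}}
    gold:        {item_id: label}
    """
    scores = {}
    for labeler, labels in annotations.items():
        scored = [item for item in labels if item in gold]
        if scored:
            correct = sum(labels[item] == gold[item] for item in scored)
            scores[labeler] = correct / len(scored)
    return scores


# Hypothetical sample data: three labelers, two gold-standard items.
annotations = {
    "ann_a": {"img1": "cat", "img2": "dog", "img3": "cat"},
    "ann_b": {"img1": "cat", "img2": "cat", "img3": "cat"},
    "ann_c": {"img1": "dog", "img2": "dog", "img3": "cat"},
}
gold = {"img1": "cat", "img2": "dog"}

accuracy = labeler_accuracy(annotations, gold)
consensus = {
    item: majority_vote([labels[item] for labels in annotations.values()])
    for item in ["img1", "img2", "img3"]
}
```

In practice a team would likely route tied or low-agreement items to a reviewer and retrain or replace labelers whose gold-set accuracy falls below a threshold chosen for the project.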

Context and significance

High-quality labeled data remains an undervalued infrastructure component. While models and compute get most attention, annotated datasets determine real-world performance and fairness. Outsourcing or partnering with specialized vendors can be a force multiplier when internal teams lack scale or labeling expertise. Good annotation practices also reduce label noise, which directly improves sample efficiency and reduces the compute and data required to reach target performance.

What to watch

Prioritize time-boxed annotation pilots with clear QA metrics and fast feedback loops into model training. Evaluate vendors on QA methodology, labeler training, schema governance, and tooling integration. Expect growing specialization in vendors supporting domain-specific labels (medical, geospatial, legal) and tighter integration between annotation platforms and MLOps toolchains.
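One QA metric a pilot could track is inter-annotator agreement. The sketch below computes Cohen's kappa for two labelers by hand. Choosing kappa as the pilot metric is an assumption made for illustration, not something the source prescribes, and the function name and sample labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two labelers who annotated the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both labelers agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each labeler's marginal label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both labelers used a single identical label; agreement is trivially perfect.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical pilot batch labeled independently by two annotators.
labeler_1 = ["pos", "pos", "neg", "neg"]
labeler_2 = ["pos", "neg", "neg", "neg"]
kappa = cohens_kappa(labeler_1, labeler_2)
```

Because kappa corrects for chance agreement, it tends to be more informative than raw percent agreement when one label dominates the dataset.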

Scoring Rationale

Annotation services are a practical, widely applicable enabling technology for ML teams; they materially affect project velocity and model quality but are not a frontier research breakthrough. The story is notable for practitioners and directly impacts engineering practices.
