Cubans Use AI to Imagine US Intervention

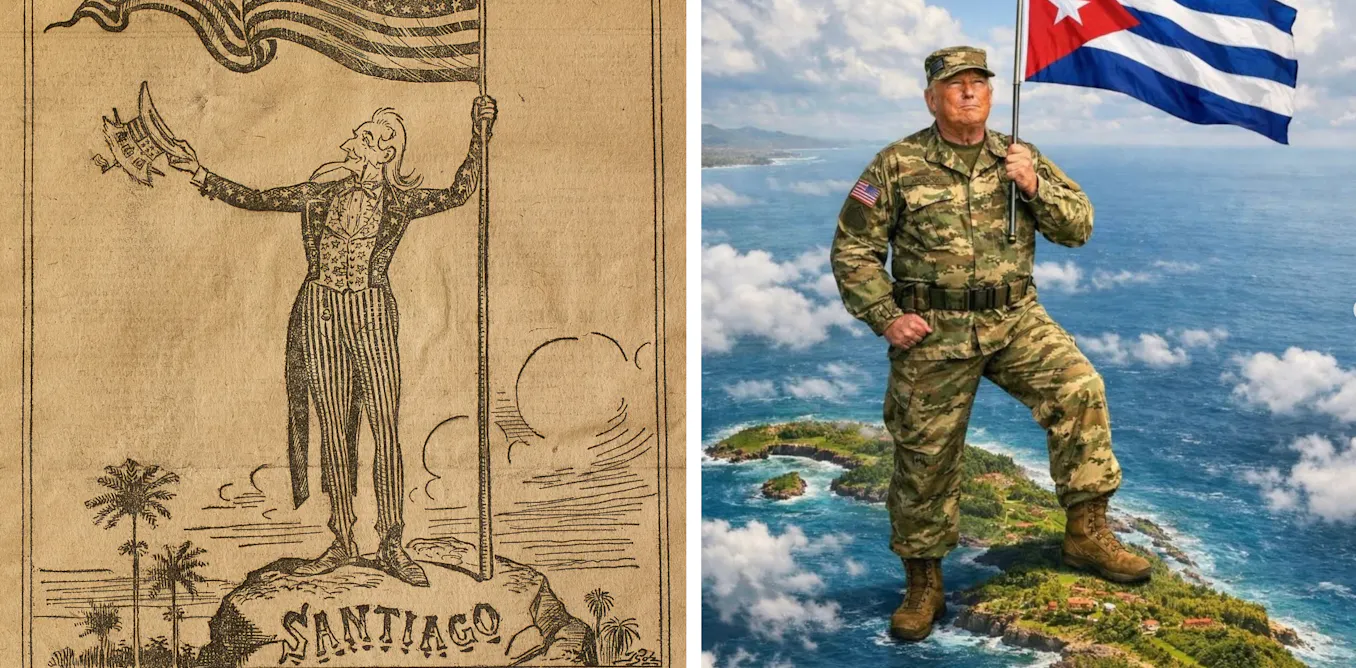

According to reporting in The Conversation, Cuban social media and WhatsApp groups have circulated AI-generated images and short clips that imagine a U.S. military intervention in Cuba. The Conversation documents examples produced with tools such as Midjourney, DALL-E, Runway and ChatGPT. Some depict the island as a captive figure freed by an American protector; others show cityscapes in which portraits and statues replace revolutionary iconography. The piece links these visual fantasies to broader political discussions following recent regional events and public remarks that have fueled speculation about U.S. actions. The Conversation frames the imagery as a cultural and symbolic response to political desperation rather than as operational plans, and notes the historical resonance of the visuals with late 19th-century interventionist cartoons.

What happened

According to The Conversation, Cuban social media feeds and WhatsApp groups have been filled with AI-generated images and clips imagining U.S. intervention in Cuba. The Conversation documents items created with tools including Midjourney, DALL-E, Runway and ChatGPT, showing motifs such as the island personified as a captive or child and Americans portrayed as rescuers, and scenes where revolutionary symbols are replaced by portraits and statues of U.S. figures. The Conversation also compares some AI images to political cartoons from the 1890s, arguing a visual continuity with earlier interventionist imagery. The reporting places these creations in the context of heightened regional tensions and public remarks that have circulated since early 2026.

Technical context

Editorial analysis: The tools cited (Midjourney, DALL-E, Runway and ChatGPT) lower the technical barrier to producing photorealistic or stylized political imagery. As a general pattern, accessible image generators let nonexperts rapidly iterate on symbolic compositions, combine historic visual tropes with contemporary faces, and produce shareable formats suited to platforms like WhatsApp and Twitter. Those dynamics accelerate the lifecycle of political memes and make provenance and intent harder to assess at scale.

Context and significance

Editorial analysis: Visual political narratives matter because images carry high emotional salience and spread rapidly in closed messaging networks. Comparable episodes in other countries have shown that AI-enabled imagery can amplify existing hopes, fears and misinformation, complicating verification for journalists and civil-society monitors. For practitioners, this underscores persistent content-moderation and detection challenges: stylistic diversity in AI outputs and private-group sharing reduce the effectiveness of platform-level interventions alone.

What to watch

Editorial analysis: Observers should track how provenance signals (metadata, watermarking, or platform labels) are treated in private-messaging contexts, whether local fact-checkers adapt workflows to short-form AI imagery, and whether researchers publish pattern-based detectors for stylistic families that recur in political prompts. The Conversation has not documented concrete operational steps tied to these images; the reporting frames them as cultural expressions circulating amid political uncertainty.

Scoring Rationale

The story is notable for practitioners because it shows how mainstream image-generation tools are shaping political narratives and creating moderation challenges. It is not a technical breakthrough, but it has meaningful implications for content moderation, provenance, and detection work.
