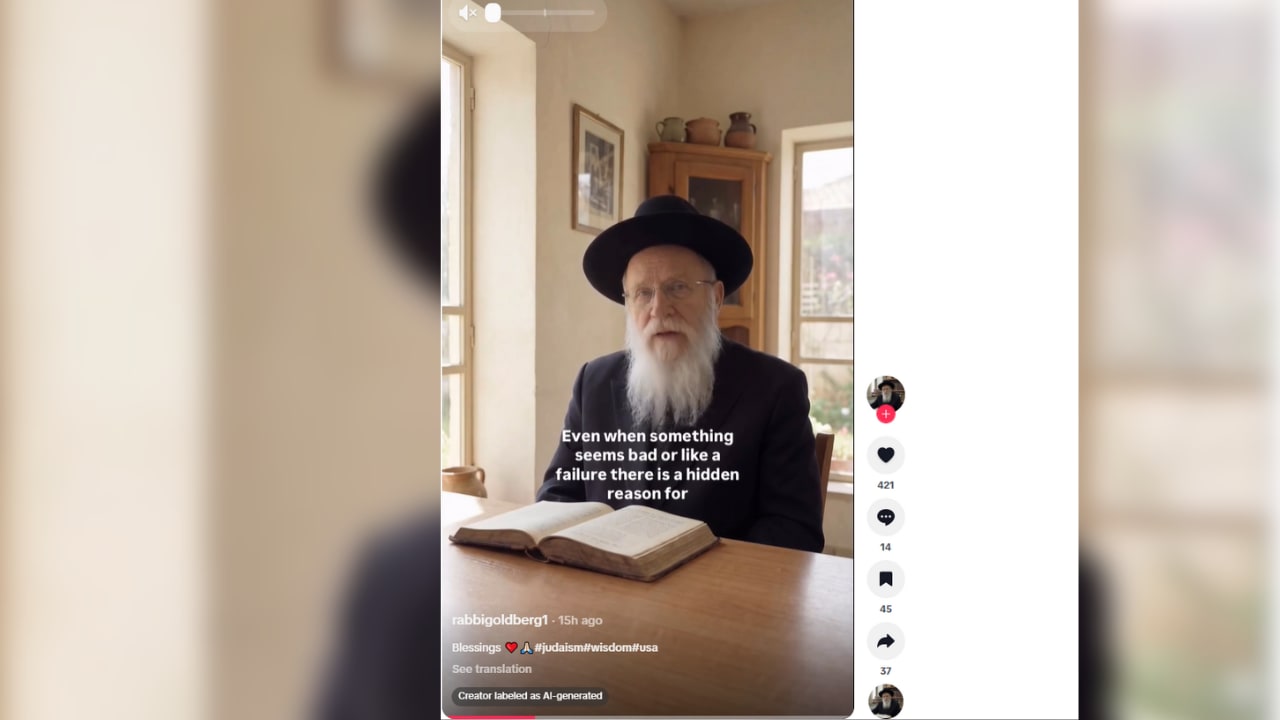

CAM finds fake AI rabbis spreading antisemitic content on TikTok

According to a report from the Antisemitism Research Center (ARC) at the Combat Antisemitism Movement (CAM), researchers identified 49 TikTok accounts posing as Jewish religious figures and spreading antisemitic tropes, collectively amassing more than 950,000 followers and over 10,000,000 likes (CAM report; coverage in JPost, IsraelNationalNews). The ARC analysis examined four accounts by handle, including @rabbirothstein and @rabbi_silverstein, and found repeated narrative structures and coordinated amplification patterns (CAM report). The report includes the warning, "The danger is clear," and urges platform interventions including AI detection and public awareness measures (CAM report). Editorial analysis: Industry observers note that AI-enabled persona fabrication combined with recommendation algorithms raises detection and mitigation challenges on platforms that serve young, impressionable audiences.

What happened

According to a report published by the Antisemitism Research Center (ARC) at the Combat Antisemitism Movement (CAM), researchers identified 49 TikTok accounts that presented themselves as Jewish religious figures while disseminating conspiracy theories and classic antisemitic narratives (CAM report; CAM press materials reported in JPost, IsraelNationalNews, Israel.com). The ARC states that these accounts have collectively accumulated more than 950,000 followers and generated over 10,000,000 likes, metrics the report uses to underscore the network's reach (CAM report; coverage in IsraelNationalNews). The ARC conducted an in-depth content analysis of four accounts, naming the handles @rabbirothstein, @rabingoldmaan, @rabbistirberg, and @rabbi_silverstein, and reported consistent messaging patterns, repeated narrative arcs, and synchronized amplification tactics across those profiles (CAM report; CAM press materials).

The CAM report quotes its key finding directly: "The danger is clear. By masquerading as authentic Jewish voices, these 'rabbis' erode trust, normalize hatred, and incite real-world violence targeting Jews." The report also documents earlier work in which ARC and CAM identified more than 70 similar AI-generated 'rabbi' accounts on Instagram and says that, after outreach, Meta removed more than 60 of those Instagram accounts (CAM report; CAM website coverage).

Editorial analysis - technical context

AI-enabled persona synthesis, meaning the use of generated avatars and fabricated identities, lowers the barrier to producing convincing authority figures at scale. A pattern seen across the industry is that combining synthetic avatars with short-form video formats and recommendation algorithms makes it more likely that fabricated authority signals reach users before anyone has vetted them. For practitioners, this creates two technical pain points: reliably detecting synthetic identity signals in multimodal content, and separating coordinated influence operations from organic parody or satire amid a great deal of noise.
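The second pain point, separating coordinated activity from organic posts, can be illustrated with a minimal sketch. It is not the ARC's methodology, which the coverage does not describe in technical detail. It assumes a hypothetical mapping of account names to caption text and uses plain Jaccard overlap of word sets as a stand-in for "repeated narrative structures"; the function names, the sample data, and the 0.6 threshold are all illustrative assumptions.

```python
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercased word set for one caption (deliberately crude normalization)."""
    return set(text.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity |A & B| / |A | B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def similar_account_pairs(captions_by_account: dict[str, list[str]],
                          threshold: float = 0.6) -> list[tuple[str, str, float]]:
    """Return (account_a, account_b, score) for account pairs whose pooled
    caption vocabulary overlaps at or above the (assumed) threshold."""
    pooled = {
        account: set().union(*(tokens(c) for c in captions))
        for account, captions in captions_by_account.items()
    }
    pairs = []
    for a, b in combinations(sorted(pooled), 2):
        score = jaccard(pooled[a], pooled[b])
        if score >= threshold:
            pairs.append((a, b, score))
    return pairs


# Hypothetical, neutral placeholder captions (not taken from the report).
sample = {
    "account_a": ["watch this hidden story today", "share before it is removed"],
    "account_b": ["watch this hidden story now", "share before it is removed"],
    "account_c": ["my cat learned a new trick"],
}

flagged = similar_account_pairs(sample)
for a, b, score in flagged:
    print(f"{a} <-> {b}: {score:.2f}")
```

High lexical overlap alone cannot tell a coordinated network from parody accounts riffing on the same meme, which is exactly the signal-to-noise problem described above. A practical system would likely combine text similarity with posting-time, audio, and visual signals, followed by human review.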

Context and significance

Disinformation researchers and platform-safety teams have documented similar abuse patterns in which fabricated insiders are used to launder fringe narratives into broader discourse. The CAM report highlights a specific case study: religious impersonation framed as 'insider truth'. The case is notable because it recasts historically external antisemitic tropes as purported intra-community commentary, a tactic CAM argues increases plausibility for mainstream audiences (CAM report; coverage in Israel.com). For moderation and safety teams, this pattern matters because it blends identity-based impersonation with the same engagement mechanics platforms use to reward relatability and authority.

What the report recommends (reported claims)

Per the CAM report and CAM's public materials, researchers call on TikTok to invest in AI detection tools tailored to synthetic religious impersonation, to implement policies that reduce algorithmic amplification of such accounts, and to launch public-awareness campaigns teaching users how to spot AI-generated propaganda (CAM report; CAM press release). CAM's materials also cite Meta's earlier removal of Instagram accounts after CAM outreach as an example of a platform response that, per those materials, reduced reach on that platform (CAM report).

What to watch

Observers should monitor whether TikTok issues an official, public response to the ARC report and whether it publishes transparency data about impersonation removals or enhancements to synthetic media detection. Practitioners building moderation tooling should track disclosure from platforms about signal access for third-party researchers and whether detection models or labeling standards for synthetic personas are adopted. Finally, researchers and safety teams will watch whether similar persona-based influence campaigns appear on other recommendation-first short-video platforms, and whether platform rate limits or verification mechanics evolve to address fabricated authority figures.

Limitations and sourcing

The factual claims above are drawn from the CAM Antisemitism Research Center report and contemporaneous news coverage in JPost, IsraelNationalNews, and Israel.com; where CAM reports specific counts or quotes, those items are attributed to the CAM report. CAM's recommendations are reported as recommendations made by CAM; this summary does not assert platform intent or internal policies unless documented publicly by the platform.

Scoring Rationale

The story documents a notable, platform-scale disinformation pattern that affects content moderation, safety tooling, and disinformation research. It is not a frontier technical breakthrough, but it is important for practitioners working on synthetic-media detection and platform safety.
