AI Data Center Boom Tightens Memory Chip Supply

The Conversation's Vidya Mani reports that AI data center construction is reallocating chip supply toward high-performance processors and high-bandwidth memory, creating shortages for consumer-device components that prioritize energy efficiency and integration. Reuters and CNBC report that Samsung Electronics posted record quarterly profits driven by memory-chip sales, with Reuters quoting Samsung executive Kim Jaejune saying, "Our supply falls far short of customer demand." Bloomberg and CBS News describe widening shortages in DRAM and high-bandwidth memory that are lifting prices for laptops, smartphones, and other electronics, with Oxford Economics cited by CBS warning of rising computer costs. Reporting also notes that chipmakers are signing multi-year contracts to secure capacity; Reuters adds that counterparty identities and terms were not disclosed. Editorial analysis: Industry observers should view this as a supply-allocation shock driven by the distinct technical demands of AI servers versus mobile devices.

What happened

The Conversation's Vidya Mani explains that a global buildout of AI data centers is reallocating chip production and memory capacity toward components optimized for maximum throughput rather than energy efficiency, creating supply pressure on consumer-electronics parts. Reuters and CNBC report that Samsung Electronics posted record quarterly profits driven largely by its memory business, with Reuters reporting Q1 operating profit of 57.2 trillion won and noting that the chip division accounted for a substantial portion of that figure. Reuters quotes Kim Jaejune, a Samsung memory business executive: "Our supply falls far short of customer demand." Bloomberg and CBS News report widening shortages in DRAM and high-bandwidth memory (HBM) that are pushing up prices for personal computers and smartphones. CBS cites Oxford Economics analysis showing computer prices rising for the first time since the early 1980s, and Bloomberg documents broader industry warnings about a growing memory crunch.

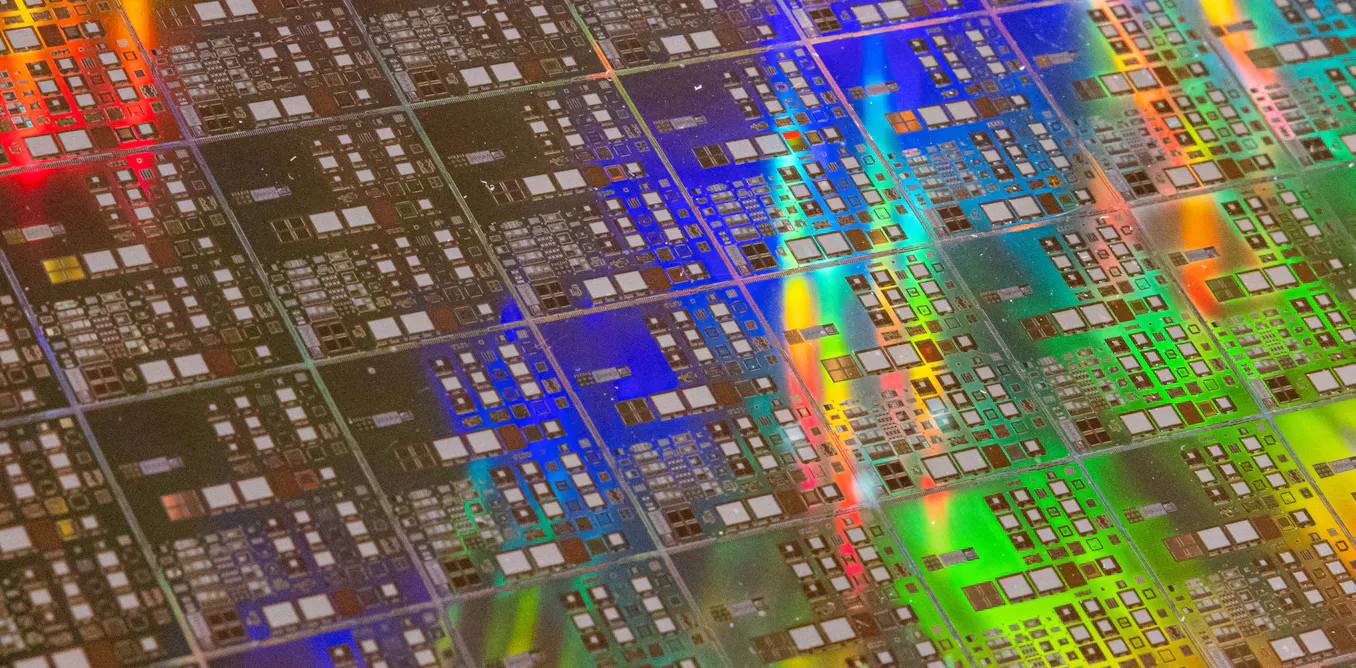

Technical details

The Conversation distinguishes the technical profiles that explain the allocation shift: consumer devices typically depend on systems-on-a-chip with tight power and thermal constraints, plus DRAM for working memory and NAND flash for storage, whereas AI servers use GPUs or other accelerator processors paired with high-bandwidth memory (HBM) to maximize compute and memory bandwidth. Reporting in Reuters and Bloomberg notes that chipmakers have retooled capacity and prioritized production lines for HBM and other advanced memory used in AI accelerators.

Industry context

Editorial analysis: Companies in the memory supply chain operate with long lead times and concentrated capital investment, which makes rapid reallocation difficult. Public coverage from Reuters and Bloomberg shows manufacturers are signing multi-year binding contracts to lock in supplies; Reuters reports that Samsung has signed such contracts, with counterparty identities and terms left undisclosed. Bloomberg frames the situation as a structural shift in memory demand driven by AI compute growth rather than a transient consumer-cycle mismatch.

Market impact and prices

Reuters, CNBC, Bloomberg, and CBS consistently report that constrained memory supply is elevating prices. CBS cites analysts at Oxford Economics and quotes Bernard Yaros noting that computer prices have risen by more than 3% per month in recent data. Bloomberg documents company-level warnings from multiple sectors, including consumer electronics, autos, and data centers, that DRAM scarcity is already affecting production and margins.

What to watch

Editorial analysis: Observers should monitor three measurable indicators: reported HBM and DRAM shipment volumes and ASPs from major memory producers; the number and scale of multi-year contracts disclosed in earnings calls; and capital expenditure announcements for new fabs or capacity expansions. Also watch for hyperscaler procurement disclosures and any regulatory or subsidy moves aimed at onshoring semiconductor capacity, as such steps could change medium-term supply dynamics.

Implications for practitioners

Editorial analysis: For ML engineers and infrastructure teams, rising memory prices and constrained HBM availability could change hardware cost models for training and inference. Procurement timelines may lengthen, and total-cost-of-ownership estimates for on-prem clusters versus cloud alternatives may shift. For product teams building consumer hardware, elevated DRAM prices will affect BOM calculations and product launch timing.
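Editorial analysis: One way to make the BOM point concrete is a simple sensitivity check that holds every other line item fixed and varies only the memory cost. The Python sketch below is illustrative only: the function name `bom_after_memory_shift`, the baseline BOM, the memory share, and the price-change scenarios are all hypothetical assumptions, not figures from any of the cited reports.

```python
def bom_after_memory_shift(bom_total, memory_share, memory_price_change):
    """Return the new BOM total when only the memory line item changes price.

    bom_total           -- baseline bill-of-materials cost per unit
    memory_share        -- fraction of the baseline BOM spent on memory (0 to 1)
    memory_price_change -- fractional change in memory price (0.25 means +25%)
    """
    if not 0.0 <= memory_share <= 1.0:
        raise ValueError("memory_share must be between 0 and 1")
    memory_cost = bom_total * memory_share
    other_cost = bom_total - memory_cost
    return other_cost + memory_cost * (1.0 + memory_price_change)


if __name__ == "__main__":
    # Hypothetical inputs chosen for illustration, not sourced from the reporting.
    baseline_bom = 400.0
    memory_share = 0.15
    for change in (0.10, 0.25, 0.50):
        new_bom = bom_after_memory_shift(baseline_bom, memory_share, change)
        delta = new_bom / baseline_bom - 1.0
        print(f"memory {change:+.0%}: BOM {new_bom:.2f} ({delta:+.1%})")
```

The overall BOM moves by the memory share multiplied by the memory price change, so a product's exposure depends as much on how memory-heavy its design is as on the size of the price move. The same structure could be applied to a per-node hardware cost model for training or inference clusters, again with inputs the team would need to supply.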

Reported uncertainties

All sources note continued uncertainty about how long shortages will last. Reuters quotes Samsung forecasting the supply-to-demand gap could widen in 2027 based on current orders; CBS reports analysts expecting shortages to persist at least through the end of 2027. None of the coverage provides a definitive timeline for when capacity expansions will fully relieve the shortage.

Scoring Rationale

Widespread reporting shows a structural supply reallocation toward AI-grade memory, affecting hardware costs and procurement for ML teams and consumer-device makers. The story affects infrastructure planning and budgets across the industry, but it is not a frontier research or product launch.
