Agentic AI Requires Orchestration Beyond Models

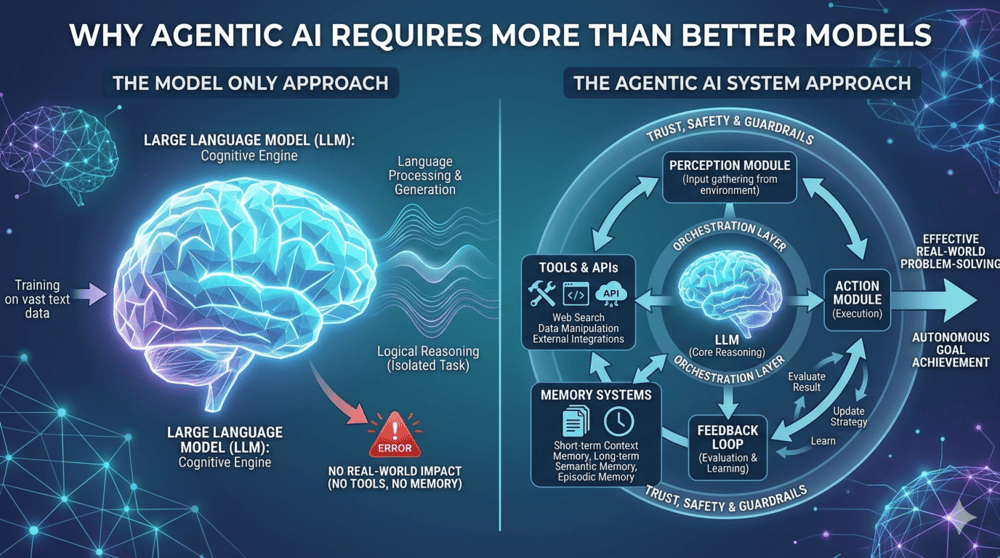

According to BigDataAnalyticsNews, agentic AI requires more than incremental model improvements: it depends on memory, tool integrations, workflows, and orchestration infrastructure. The article frames the emergence of the Model Context Protocol (MCP) and the Agent-to-Agent (A2A) protocol as significant technical advances, analogous to HTTP and REST, that enable shared context exchange and automated orchestration. It reports that deployments in regulated environments show agentic systems can lose coherent context mid-workflow, produce confidently incorrect outputs under ambiguity, and exhibit distributed failure modes that smarter models alone do not eliminate. The piece argues that production-ready agentic systems need orchestration, governance frameworks, and process redesign rather than model-only improvements.

What happened

According to BigDataAnalyticsNews, agentic artificial intelligence is evolving from stateless, single-turn LLMs into systems that plan, create and use tools, correct errors, and pursue multistep goals autonomously. The article frames the emergence of the Model Context Protocol (MCP) and the Agent-to-Agent (A2A) protocol as technical advances analogous to HTTP and REST, providing shared mechanisms for interaction, context exchange, and orchestration.

Technical details

The piece describes agentic systems as combining four core capabilities: persistent memory, tool integrations, orchestration workflows, and execution infrastructure. BigDataAnalyticsNews reports that tool integrations that once required months of manual engineering can now be completed automatically using the protocol primitives that MCP and A2A provide. For historical context, the article cites ChatGPT and the post-2022 LLM era as the starting point for widely deployed conversational models.

Editorial analysis - technical context

Industry observers note that building autonomous agents requires joining model capabilities with state management, deterministic orchestration, and secure tool interfaces. Companies building similar systems often invest in reliable state stores, provenance logging, transaction semantics for tool calls, and layered access controls to reduce undetected, distributed failures.
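
As a rough illustration of layered access control combined with provenance logging, the sketch below routes every tool call through a single gateway that checks a role-based allow-list and records the outcome. The `ToolGateway` class, the role names, and the example tools are all hypothetical and are not taken from the article, MCP, or A2A; a production system would likely persist the log to a durable store.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolGateway:
    """Hypothetical wrapper: enforces an allow-list and logs every call."""
    tools: dict[str, Callable[..., Any]]
    allowed: dict[str, set[str]]  # role -> names of tools that role may call
    log: list[dict] = field(default_factory=list)

    def call(self, role: str, tool: str, **kwargs: Any) -> Any:
        record = {"ts": time.time(), "role": role, "tool": tool, "args": kwargs}
        # Policy check happens before the tool runs; denials are logged too.
        if tool not in self.allowed.get(role, set()):
            record["status"] = "denied"
            self.log.append(record)
            raise PermissionError(f"{role} may not call {tool}")
        try:
            result = self.tools[tool](**kwargs)
        except Exception as exc:
            # Failures are recorded so they do not go undetected, then re-raised.
            record["status"] = "error"
            record["error"] = repr(exc)
            self.log.append(record)
            raise
        record["status"] = "ok"
        record["result"] = result
        self.log.append(record)
        return result


# Example usage with made-up tools and roles.
gateway = ToolGateway(
    tools={
        "lookup_balance": lambda account: 100,
        "close_account": lambda account: True,
    },
    allowed={"reader": {"lookup_balance"}},
)
balance = gateway.call("reader", "lookup_balance", account="A1")
try:
    gateway.call("reader", "close_account", account="A1")
except PermissionError as exc:
    print("blocked:", exc)
```

Keeping the policy check and the log write in one place means a denied or failed call leaves the same kind of record as a successful one, which is one way to make distributed failures easier to trace.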

Context and significance

Editorial analysis: BigDataAnalyticsNews emphasizes operational risk in regulated environments, reporting that agentic deployments can lose coherent context mid-workflow and produce confidently incorrect outputs under ambiguity. For practitioners, this shifts the priority from pure model improvements to system engineering: observability, rollback, governance, and workflow redesign become central to trustworthy production behavior.
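
One common way to approach rollback in a multistep workflow is to pair each action with a compensating "undo" and run the undos in reverse order when a later step fails. The sketch below assumes that pattern; `run_with_rollback` and the step names are hypothetical and are not described in the article.

```python
from typing import Callable, List, Tuple

# Each step is (name, action, undo). All names here are illustrative.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_rollback(steps: List[Step]) -> List[str]:
    """Run steps in order; on failure, undo completed steps in reverse.

    Returns a trace of what happened, which doubles as a simple audit trail.
    """
    trace: List[str] = []
    done: List[Tuple[str, Callable[[], None]]] = []
    for name, action, undo in steps:
        try:
            action()
        except Exception:
            trace.append(f"failed:{name}")
            # Compensate for every step that had already succeeded.
            for done_name, done_undo in reversed(done):
                done_undo()
                trace.append(f"undone:{done_name}")
            return trace
        done.append((name, undo))
        trace.append(f"ok:{name}")
    return trace


# Example: the second step fails, so the first is rolled back.
inventory: List[str] = []


def reserve() -> None:
    inventory.append("item-1")


def release() -> None:
    inventory.remove("item-1")


def charge() -> None:
    raise RuntimeError("payment service unavailable")


def refund() -> None:
    pass


trace = run_with_rollback([
    ("reserve", reserve, release),
    ("charge", charge, refund),
])
print(trace)
```

This sketch assumes every undo succeeds; a real orchestrator would also need to handle a compensation that itself fails.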

What to watch

Editorial analysis: Observers should track adoption of MCP and A2A implementations, standardization around context serialization and capability negotiation, and tool-safety patterns such as sandboxing and policy-enforced tool access. Metrics to monitor include context continuity, tool-call success rates, and end-to-end provenance for multi-agent workflows.
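
To make two of these metrics concrete, the sketch below computes a tool-call success rate and flags provenance gaps from a list of workflow events. The event schema (`id`, `kind`, `status`, `parent_id`) is an assumption made for illustration; the article does not define one, and real MCP or A2A traces may look different.

```python
from typing import List, Optional


def tool_call_success_rate(events: List[dict]) -> Optional[float]:
    """Fraction of tool-call events with status 'ok', or None if there are none."""
    calls = [e for e in events if e["kind"] == "tool_call"]
    if not calls:
        return None
    return sum(1 for e in calls if e["status"] == "ok") / len(calls)


def provenance_gaps(events: List[dict]) -> List[str]:
    """Ids of events whose parent_id points at an event missing from the trace.

    Root events are expected to carry parent_id=None and are not flagged.
    """
    known = {e["id"] for e in events}
    return [
        e["id"]
        for e in events
        if e.get("parent_id") is not None and e["parent_id"] not in known
    ]


# Example trace with hypothetical events from a two-agent workflow.
events = [
    {"id": "e1", "kind": "plan", "status": "ok", "parent_id": None},
    {"id": "e2", "kind": "tool_call", "status": "ok", "parent_id": "e1"},
    {"id": "e3", "kind": "tool_call", "status": "error", "parent_id": "e2"},
    {"id": "e4", "kind": "handoff", "status": "ok", "parent_id": "e9"},
]
rate = tool_call_success_rate(events)
gaps = provenance_gaps(events)
print(rate, gaps)
```

A non-empty gap list indicates a step whose origin cannot be traced end to end, which is the kind of break in provenance the paragraph above suggests monitoring.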

Scoring Rationale

The story highlights a practical shift practitioners face when moving beyond LLMs to autonomous agent systems. It is important for production engineering, governance, and tool integration, but not a frontier-model breakthrough.
