Advertisers Reframe Safety Around AI-Powered Ecosystem

Advertisers and political actors are shifting the brand-safety conversation toward a broader “AI ad safety” problem as ad dollars flow into AI platforms and political ad buys ramp up ahead of the midterm elections. Millions are being spent on campaigns that shape AI regulation, while marketers confront new risks: AI-generated “slop” sites, targeted chatbot ads that raise privacy and safety flags, and legacy blocklists that no longer cover the inventory they are meant to police. Tech firms are simultaneously deploying AI to detect invalid traffic and surface risky placements, but the ecosystem lacks consistent standards or guardrails. For practitioners in ad tech, marketing analytics, and platform safety, the immediate task is operational: update detection signals, instrument controls for generative placements, and engage the regulatory debate shaping permissible ad behavior.

What happened

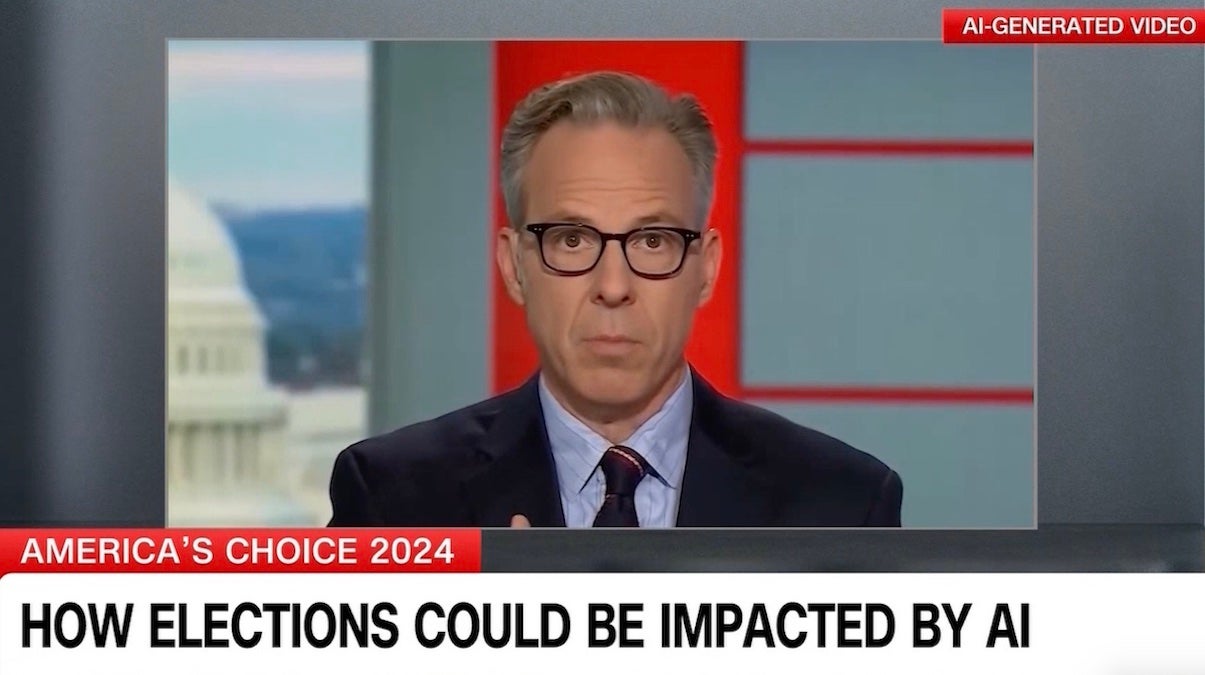

The advertising industry’s traditional brand-safety playbook is colliding with a rapidly changing AI ad ecosystem. Political groups and industry-backed PACs are spending millions on ad campaigns to influence AI policy ahead of the midterm elections, turning regulation into a messaging battleground. At the same time, marketers face new operational threats from generative-AI-driven inventory—automatically produced “slop” sites, conversational ad insertions inside chatbots, and novel invalid-traffic patterns.

Technical context

Brand safety systems were built to filter known categories (adult content, hate speech, disinformation, fraud) using blocklists, contextual classifiers, and publisher reputations. Generative AI changes the signal set: content can be created at scale, mimic trusted formats, or appear inside interactive agents that blend conversational output and ad insertion. That raises three technical problems: detection (how to identify AI-generated low-quality inventory), attribution (how to trace ad calls through conversational or programmatic flows), and policy enforcement (how to encode new safety rules into DSPs, SSPs, and verification vendors).
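To make the policy-enforcement problem concrete, the following is a minimal sketch of how a legacy blocklist check might be extended with rules for generative placements. Everything in it is an assumption for illustration: the `Placement` fields, the `surface` values, the threshold, and the example domain are hypothetical and do not correspond to any DSP, SSP, or verification vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Hypothetical description of one ad opportunity."""
    domain: str
    surface: str            # assumed values: "web" or "chatbot"
    category: str           # assumed output of a contextual classifier
    synthetic_score: float  # 0..1, assumed output of a generative-content detector


# Illustrative policy values, not real lists or recommended thresholds.
BLOCKED_DOMAINS = {"known-bad.example"}
BLOCKED_CATEGORIES = {"adult", "hate_speech"}
SYNTHETIC_THRESHOLD = 0.8
ALLOW_CHATBOT_SURFACE = False


def evaluate(placement: Placement) -> tuple[bool, str]:
    """Return (allowed, reason). Legacy rules run first, then the newer ones."""
    if placement.domain in BLOCKED_DOMAINS:
        return False, "blocklisted_domain"
    if placement.category in BLOCKED_CATEGORIES:
        return False, "blocked_category"
    # Newer rules: conversational surfaces and likely machine-generated inventory.
    if placement.surface == "chatbot" and not ALLOW_CHATBOT_SURFACE:
        return False, "chatbot_surface_not_allowed"
    if placement.synthetic_score >= SYNTHETIC_THRESHOLD:
        return False, "likely_synthetic_inventory"
    return True, "ok"
```

Returning a reason code alongside the decision is a deliberate choice in this sketch: it keeps each rejection traceable, which matters when the same placement passes through several intermediaries.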

Key details from sources

The Hill documents the surge of political ad spending tied to AI-regulation messaging and cites industry analysts warning about the stakes for public policy. Industry coverage flags concrete examples: a high-profile dispute involving Anthropic served as a market warning, and lawmakers—Senator Ed Markey among them—have flagged privacy and safety concerns about chatbot ads. Vendors (and platform owners like Google) are using AI to combat invalid traffic, while verification firms and publishers call out AI-generated low-quality sites that siphon ad budgets.

Why practitioners should care

This is not a PR problem alone; it's an operational and modeling problem. Detection models trained on pre-AI patterns will miss new, synthetic inventory and conversational ad placements. Programmatic pipelines need provenance signals, stronger creative verification, and real-time quality scoring that incorporate generative-content detectors and conversational context. Meanwhile, regulatory outcomes from the midterms could mandate new disclosure, targeting or safety requirements, affecting ad product design and compliance workstreams.
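One way to picture real-time quality scoring is as a weighted combination of independent risk signals. The sketch below assumes three hypothetical inputs (a generative-content probability, an invalid-traffic probability, and a provenance flag) and arbitrary weights; it illustrates the idea only and is not a method described by any vendor or source cited here.

```python
def clamp01(value: float) -> float:
    """Clamp a probability-like value into the range [0, 1]."""
    return max(0.0, min(1.0, value))


def quality_score(
    synthetic_prob: float,
    invalid_traffic_prob: float,
    has_provenance: bool,
    weights: tuple[float, float, float] = (0.4, 0.4, 0.2),  # assumed, untuned
) -> float:
    """Combine risk signals into a 0..1 quality score (higher is better)."""
    w_synthetic, w_traffic, w_provenance = weights
    total = w_synthetic + w_traffic + w_provenance
    # Missing provenance counts as full risk on that signal.
    provenance_risk = 0.0 if has_provenance else 1.0
    risk = (
        w_synthetic * clamp01(synthetic_prob)
        + w_traffic * clamp01(invalid_traffic_prob)
        + w_provenance * provenance_risk
    ) / total
    return 1.0 - risk


if __name__ == "__main__":
    # A placement with moderate synthetic-content risk and no provenance signal.
    print(round(quality_score(0.5, 0.1, has_provenance=False), 3))
```

In practice the weights and any cutoff applied to the score would need to be calibrated against labeled inventory; the values here are placeholders.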

What to watch

Expect rapid evolution in three areas:

- verification tech that fuses generative-content classifiers with traffic-fraud detectors

- platform-level policies governing chatbot ad insertion and monetization

- regulatory proposals emerging from midterm-driven campaigns that could codify transparency, labeling, or limits on AI-driven ad personalization

Practitioners should prioritize detection-signal refreshes, pipeline provenance, and cross-functional engagement with policy teams.

Scoring Rationale

The story affects ad-tech operators, marketers, and platform engineers: it combines near-term regulatory pressure with operational risks from generative AI. It's important for practitioners designing detection and compliance systems, but not a foundational AI-model breakthrough.
